Exploring Technology: Innovations and Advancements

Introduction and Outline

Technology is not a single wave; it is a tide with many currents. Some are visible in headlines—artificial intelligence, faster networks, new chips—while others are structural changes in economics, regulation, and design that determine which ideas actually stick. This article starts with a clear map, then walks each path with practical comparisons, grounded numbers, and examples you can adapt. The aim is simple: help you recognize signal from noise and make decisions that age well.

– What runs where: choosing between cloud, edge, and on-device computing.

– AI as a capability: from prototyping to production, responsibly and efficiently.

– Hardware and sustainability: materials, energy, and lifecycle strategy.

– Connectivity: fiber, terrestrial wireless, and space-based links, working together.

– A pragmatic roadmap: skills, metrics, risk controls, and iterative delivery.

Cloud, Edge, and On-Device: Picking the Right Place to Compute

Where computation happens has become as important as what computation does. Centralized clouds excel at elasticity and global reach; edge nodes shine when milliseconds matter; on-device processing offers privacy and independence. Choosing among them is not an ideology—it’s a workload design question that balances latency, bandwidth, security, resilience, and cost.

Latency draws the first line. Interactive experiences begin to feel “instant” below roughly 100 milliseconds; time-sensitive control systems often need single-digit milliseconds. Each network hop adds overhead, and physics sets a floor: signals traverse fiber at about two-thirds the speed of light, or roughly 5 microseconds per kilometer each way. Moving decision logic from a distant region to a nearby metro edge can trim tens of milliseconds; keeping it directly on-device also removes the access-network hop, which can save tens more on congested or cellular links. For applications like augmented interfaces, industrial robots, or safety monitoring, those savings translate into smoother motion and higher throughput.
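A back-of-the-envelope calculation shows how distance alone sets that floor. The sketch below assumes a velocity factor of two-thirds in fiber and ignores routing detours, queuing, and processing, so real round trips will be higher; the 2,000 km and 50 km distances are hypothetical placements.

```python
# Sketch: round-trip propagation delay over fiber (propagation only).
# Assumption: signals travel at ~2/3 the speed of light in glass; routing
# detours, queuing, and processing delays are ignored.
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_FRACTION = 2 / 3  # approximate velocity factor of optical fiber


def fiber_rtt_ms(distance_km: float) -> float:
    """Round-trip propagation delay in milliseconds for a one-way fiber distance."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_PER_S * FIBER_FRACTION)
    return 2 * one_way_s * 1000


# Hypothetical placements: a distant cloud region vs. a nearby metro edge.
for label, km in [("distant region", 2_000), ("metro edge", 50)]:
    print(f"{label:>15}: {fiber_rtt_ms(km):.2f} ms round trip")
```

Under these assumptions a 2,000 km path costs about 20 ms round trip before any queuing, while a 50 km metro path costs about half a millisecond.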

Bandwidth and egress fees draw the second line. Shipping raw sensor streams or high-resolution video to a distant data center is expensive and slow. Preprocessing at the edge—filtering, compressing, or extracting features—can cut upstream traffic by orders of magnitude. A practical pattern is “progressive refinement”: devices perform quick heuristics, edge nodes run heavier models, and the cloud aggregates history for training, audit, and optimization. This tiered flow reduces costs while preserving long-horizon intelligence.
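The progressive-refinement pattern can be sketched as three small functions, one per tier. This is an illustration under stated assumptions: the change threshold, the window size, and the min/max/mean features are hypothetical choices, and the "cloud" is just an in-memory list standing in for long-term storage.

```python
from statistics import mean


def device_filter(readings, threshold=0.5):
    """Device tier: forward only readings that moved noticeably since the last one sent."""
    sent, last = [], None
    for r in readings:
        if last is None or abs(r - last) >= threshold:
            sent.append(r)
            last = r
    return sent


def edge_summarize(readings, window=10):
    """Edge tier: replace each window of readings with compact features."""
    summaries = []
    for i in range(0, len(readings), window):
        chunk = readings[i:i + window]
        summaries.append({"min": min(chunk), "max": max(chunk), "mean": mean(chunk)})
    return summaries


def cloud_ingest(history, summaries):
    """Cloud tier: append summaries to long-horizon history for training and audit."""
    history.extend(summaries)
    return history


raw = [20.0 + (i % 50) * 0.1 for i in range(1_000)]  # synthetic sensor stream
filtered = device_filter(raw)
features = edge_summarize(filtered)
history = cloud_ingest([], features)
print(f"raw={len(raw)} after device={len(filtered)} after edge={len(features)}")
```

Each tier shrinks what the next one receives, so upstream traffic falls sharply while the cloud still keeps a usable long-term record.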

Security and compliance draw a third line. Keeping personal data on-device reduces exposure; processing in a defined jurisdiction can satisfy location mandates; centralizing only derived, anonymized attributes limits risk while keeping value. Pair this with resilient design: if a backhaul link fails, edge nodes should degrade gracefully, buffering data and serving cached decisions.
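Graceful degradation at the edge can be sketched with a bounded buffer and a decision cache. The class below is a hypothetical design, not a specific framework: `upstream_decide` stands in for a call to a central service, and `"safe-default"` is an illustrative fallback for keys that were never cached.

```python
from collections import deque


class EdgeNode:
    """Hypothetical edge node that keeps working when the backhaul fails.

    Online: flush any backlog, forward the event, and cache the upstream decision.
    Offline: buffer events in a bounded queue (oldest dropped first) and answer
    from the cache of last known decisions.
    """

    def __init__(self, buffer_size=1_000):
        self.buffer = deque(maxlen=buffer_size)  # bounded, so memory cannot run away
        self.decision_cache = {}
        self.backhaul_up = True
        self.sent = []  # stands in for an upstream transport

    def handle(self, key, event, upstream_decide):
        if self.backhaul_up:
            self.flush()
            self.sent.append(event)
            decision = upstream_decide(key)
            self.decision_cache[key] = decision  # remembered for outages
            return decision
        self.buffer.append(event)
        return self.decision_cache.get(key, "safe-default")

    def flush(self):
        """Replay buffered events once connectivity returns (eventual sync)."""
        while self.buffer:
            self.sent.append(self.buffer.popleft())


node = EdgeNode(buffer_size=2)
decide = lambda key: f"allow:{key}"
print(node.handle("door-1", "e1", decide))  # allow:door-1
node.backhaul_up = False
print(node.handle("door-1", "e2", decide))  # allow:door-1 (served from cache)
print(node.handle("door-2", "e3", decide))  # safe-default (never cached)
```

The bounded queue is a deliberate trade-off: during a long outage the node loses the oldest events rather than exhausting memory.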

Implementation details matter. Lightweight containers and portable runtimes enable the same code to run from handhelds to metro servers. Streaming protocols with backpressure prevent overload. Observability must follow the workload: gather local traces, then roll up summaries to a central plane. Decision checklist:

– Latency tolerance (ms) and jitter budget.

– Data gravity: volume, sensitivity, locality rules.

– Cost model: compute vs. transfer vs. storage trade-offs.

– Failure modes: offline behavior, eventual sync, and rollback.

– Operational fit: deployment cadence, monitoring, and patch paths.
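Part of that checklist can be turned into a first-pass placement rule. The sketch below is illustrative only: the field names, the 5 ms edge and 40 ms cloud round trips, the 50 Mbps uplink, and the rule order are all assumptions, and a real decision would also weigh cost and operational fit.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    latency_budget_ms: float   # end-to-end tolerance
    raw_on_device_only: bool   # privacy rule: raw data may not leave the device
    upstream_mbps: float       # raw data rate the workload would ship upstream
    must_run_offline: bool     # has to keep working when backhaul fails


def place(w: Workload, edge_rtt_ms=5.0, cloud_rtt_ms=40.0, uplink_mbps=50.0) -> str:
    """Choose device, edge, or cloud; thresholds and rule order are illustrative."""
    if w.raw_on_device_only or w.must_run_offline or w.latency_budget_ms < edge_rtt_ms:
        return "device"
    if w.latency_budget_ms < cloud_rtt_ms or w.upstream_mbps > uplink_mbps:
        return "edge"  # too slow or too costly to ship everything to the cloud
    return "cloud"


print(place(Workload(2, False, 1, False)))      # device: tighter than any network RTT
print(place(Workload(20, False, 5, False)))     # edge: cloud round trip too slow
print(place(Workload(500, False, 200, False)))  # edge: uplink cannot carry raw data
print(place(Workload(500, False, 1, False)))    # cloud
```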

AI As Utility: From Prototype to Production, Responsibly

Artificial intelligence has moved from novelty to utility, but its value depends on scale, placement, and guardrails. Large general models deliver broad skills, while compact models tailored to a domain can run efficiently at the edge or on devices. The most effective systems mix them: a small local model for instant responses and privacy, with occasional escalation to a heavier model for complex tasks.

Throughput and cost are the first practical questions. On shared infrastructure, measure tokens or samples processed per second, then normalize by power draw and by cost. A tuned compact model can deliver stable latency under tight power budgets, making it suitable for field equipment or handhelds. Heavier models excel at synthesis and reasoning but often rely on batching for efficiency, which raises median latency. A hybrid approach routes requests by difficulty: easy cases stay local; hard cases go to a central service. This keeps user experiences consistent while controlling spend.
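One common way to approximate "difficulty" is a confidence cascade: try the small model first and escalate only when it is unsure. The sketch below assumes each model returns an answer plus a confidence in [0, 1]; the stub models, the 0.8 threshold, and the length-based stub behavior are hypothetical, and `tokens_per_joule` shows one way to normalize throughput by power.

```python
from collections import Counter
from typing import Callable, Tuple

# Hypothetical model interface: returns (answer, confidence in [0, 1]).
Model = Callable[[str], Tuple[str, float]]


def route(request: str, local: Model, central: Model, stats: Counter,
          min_confidence: float = 0.8) -> str:
    """Answer locally when the small model is confident; otherwise escalate."""
    answer, confidence = local(request)
    if confidence >= min_confidence:
        stats["local"] += 1
        return answer
    stats["escalated"] += 1
    answer, _ = central(request)
    return answer


def tokens_per_joule(tokens: int, avg_watts: float, seconds: float) -> float:
    """Throughput normalized by energy (watts x seconds = joules)."""
    return tokens / (avg_watts * seconds)


# Stub models for illustration only.
def small_model(req):
    return ("quick answer", 0.9) if len(req) < 40 else ("unsure", 0.3)


def large_model(req):
    return ("detailed answer", 0.95)


stats = Counter()
for req in ["reset my password",
            "compare three contract drafts and flag conflicting clauses"]:
    print(route(req, small_model, large_model, stats))
print(dict(stats))
```

Tracking the local-versus-escalated ratio alongside energy per token makes the cost effect of the threshold visible.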

Retrieval and grounding stabilize outputs. Instead of relying on a model’s internal associations, attach a retrieval step that fetches current, vetted context from a vector index or rules engine. This reduces hallucination risk and makes updates fast: refresh the corpus, not the model. For reliability, add self-checks such as constraint validators and content filters. Evaluation should be continuous, not a one-time test: track quality metrics per scenario, compare variants via A/B tests, and keep a drift log that ties changes to outcomes.
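A toy retrieval step shows the "refresh the corpus, not the model" idea. To stay self-contained, the sketch swaps in term-count vectors and cosine similarity as a stand-in for learned embeddings in a real vector index; `Corpus`, `build_prompt`, and the prompt wording are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Term-count vector; a simple stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class Corpus:
    def __init__(self):
        self.docs = {}

    def upsert(self, doc_id, text):
        """Update knowledge by replacing a document; no retraining involved."""
        self.docs[doc_id] = (text, vectorize(text))

    def retrieve(self, query, k=2):
        q = vectorize(query)
        ranked = sorted(self.docs.items(), key=lambda kv: cosine(q, kv[1][1]), reverse=True)
        return [(doc_id, text) for doc_id, (text, _) in ranked[:k]]


def build_prompt(query, corpus):
    """Ground the model by placing retrieved, vetted context ahead of the question."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in corpus.retrieve(query))
    return f"Answer using only the context below.\n{context}\nQuestion: {query}"


corpus = Corpus()
corpus.upsert("policy", "Refunds are allowed within 30 days of purchase")
corpus.upsert("shipping", "Orders ship within two business days")
corpus.upsert("policy", "Refunds are allowed within 60 days of purchase")  # policy changed
print(build_prompt("how many days for refunds", corpus))
```

Tagging each snippet with its document ID also gives auditors a trace from an answer back to its sources.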

Responsible practice is non-negotiable. Curate data to avoid encoding bias, and document assumptions, limitations, and intended uses. Keep a human-in-the-loop for high-impact decisions, logging rationale and overrides. Maintain a trace from inputs to outputs for audit. Encrypt data in motion and at rest, minimize retention, and segregate sensitive contexts by role. Consider energy per inference: even small gains in efficiency compound at scale. Practical playbook:

– Start with a narrow, valuable task; expand after steady metrics.

– Prefer smaller, well-tuned models where possible; escalate selectively.

– Ground outputs with fresh, verifiable context.

– Monitor quality, cost, latency, and safety as first-class KPIs.

– Build rollback plans: version models, prompts, and retrieval corpora.
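Versioning models, prompts, and retrieval corpora together makes rollback a single step. The registry below is a hypothetical sketch; the version strings are placeholders, and a production system would persist history rather than keep it in memory.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Release:
    model: str
    prompt: str
    corpus: str


@dataclass
class Registry:
    """Hypothetical registry: every deploy pins model, prompt, and corpus together."""
    history: list = field(default_factory=list)

    def deploy(self, release: Release) -> None:
        self.history.append(release)

    @property
    def current(self) -> Release:
        return self.history[-1]

    def rollback(self) -> Release:
        """Discard the newest release and return to the previous known-good one."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier release to roll back to")
        self.history.pop()
        return self.current


registry = Registry()
registry.deploy(Release("model-v1", "prompt-v1", "corpus-v1"))
registry.deploy(Release("model-v2", "prompt-v1", "corpus-v2"))
print(registry.rollback())
```

Pinning all three artifacts in one record avoids a subtle failure: rolling back the model while keeping a corpus it was never evaluated against.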

Materials, Energy, and Sustainable Hardware

Progress in computing has been as much about materials and packaging as algorithms. Specialized accelerators and chiplet designs channel workloads efficiently, while advanced stacking shortens signal paths and raises density. These gains, however, coexist with a growing concern: the total footprint of the hardware lifecycle, from extraction and fabrication to use and end-of-life.

Embodied emissions are often front-loaded. A single compact laptop can represent a few hundred kilograms of carbon dioxide equivalent before it ever powers on; a handheld device can represent several tens of kilograms. Multiply by fleets, and the upfront impact rivals years of operational energy. Extending lifespans, enabling component-level upgrades, and prioritizing repairability can therefore outweigh small efficiency gains from frequent refreshes.
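Amortizing embodied emissions over service life makes the trade-off concrete. The figures below are hypothetical: 250 kg embodied, 20 kg per year of operational emissions, and a replacement model that is 10% more efficient in use but carries the same embodied cost.

```python
def annual_footprint_kg(embodied_kg, operational_kg_per_year, lifespan_years):
    """Spread embodied emissions evenly over the service life, then add yearly operation."""
    return embodied_kg / lifespan_years + operational_kg_per_year


# Hypothetical laptop: 250 kg embodied, 20 kg/year from electricity.
keep_longer = annual_footprint_kg(250, 20, lifespan_years=5)
# Hypothetical refresh: 10% lower operational emissions, replaced every 3 years.
refresh_often = annual_footprint_kg(250, 18, lifespan_years=3)
print(f"5-year life: {keep_longer:.1f} kg/yr; 3-year refresh: {refresh_often:.1f} kg/yr")
```

With these numbers, keeping the device five years yields 70 kg per year, while the more efficient three-year refresh yields about 101 kg per year.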

Operational efficiency still matters. Data centers commonly report power usage effectiveness (PUE), the ratio of total facility energy to the energy consumed by IT equipment: a PUE near 1.2 signals careful engineering, while 1.5 or higher suggests room to improve. Cooling is a major lever; direct liquid and immersion approaches remove heat more effectively than air alone, reducing fan power and allowing higher density. Water stewardship belongs in the same conversation: shift heavy jobs to cooler hours or regions with abundant, responsibly managed resources, and publish transparent consumption metrics.
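Both ideas in that paragraph reduce to small calculations: the PUE ratio itself, and picking the cheapest window for a deferrable job. The sketch assumes an hourly cost signal; here it is a hypothetical temperature forecast, though a carbon-intensity or water-stress forecast would slot in the same way.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power usage effectiveness: total facility energy over IT equipment energy."""
    return total_facility_kwh / it_kwh


def overhead_kwh(it_kwh: float, pue_value: float) -> float:
    """Energy spent on cooling, power conversion, and lighting beyond the IT load."""
    return it_kwh * (pue_value - 1)


def best_window(hourly_cost, duration_h):
    """Start hour that minimizes the summed hourly cost over the job's duration."""
    starts = range(len(hourly_cost) - duration_h + 1)
    return min(starts, key=lambda s: sum(hourly_cost[s:s + duration_h]))


# Hypothetical 12-hour outdoor temperature forecast in degrees Celsius.
forecast = [30, 29, 27, 24, 22, 21, 23, 26, 31, 34, 36, 37]
print(pue(1_200, 1_000), overhead_kwh(1_000, 1.5), best_window(forecast, 3))
```

At a PUE of 1.5, every 1,000 kWh of IT load carries 500 kWh of overhead; the scheduler places a three-hour job in the coolest stretch of the forecast.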

Design for circularity closes the loop. Modular parts keep devices serviceable; standardized fasteners and clear documentation enable safe disassembly; material passports help recyclers recover high-value metals and rare elements. Secure data erasure protocols make reuse viable without risk. For fleet managers, the hierarchy is straightforward:

– Avoid: remove idle capacity and redundant systems.

– Reduce: consolidate workloads and right-size instances.

– Reuse: redeploy older gear to less demanding roles.

– Recycle: partner with certified handlers for material recovery.
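For a fleet inventory, the hierarchy can be applied as a first-pass triage rule. The utilization thresholds, the four-year refresh age, and the extra "keep" outcome are hypothetical; they are meant to be tuned to local policy, not adopted as-is.

```python
def triage(utilization: float, age_years: float, repairable: bool, refresh_age: float = 4) -> str:
    """Map one asset to a step in the avoid/reduce/reuse/recycle hierarchy (illustrative thresholds)."""
    if not repairable:
        return "recycle"  # certified material recovery
    if utilization < 0.05:
        return "avoid"    # idle capacity: decommission
    if utilization < 0.30:
        return "reduce"   # consolidate workloads or right-size
    if age_years >= refresh_age:
        return "reuse"    # redeploy to a less demanding role
    return "keep"


fleet = [(0.02, 2, True), (0.20, 1, True), (0.70, 5, True), (0.60, 1, True), (0.50, 6, False)]
for utilization, age, repairable in fleet:
    print(utilization, age, repairable, "->", triage(utilization, age, repairable))
```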

Finally, measure what matters. Track embodied and operational footprints side by side, normalized by useful work (for example, inferences or transactions per kilowatt-hour). Tie procurement to durability and repair metrics, not just sticker price. When efficiency, longevity, and circular flows meet, performance gains and sustainability stop competing and start compounding.
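Normalizing by useful work puts embodied and operational footprints on the same scale. The sketch assumes a hypothetical period with one million inferences, 50 kWh of energy, a grid intensity of 400 gCO2e/kWh, and 10 kg of embodied emissions allocated to that period.

```python
def work_per_kwh(units: float, kwh: float) -> float:
    """Useful work (inferences, transactions) per kilowatt-hour."""
    return units / kwh


def grams_per_unit(units, kwh, grid_g_per_kwh, embodied_g_share):
    """Operational plus allocated embodied emissions, divided by useful work."""
    return (kwh * grid_g_per_kwh + embodied_g_share) / units


# Hypothetical reporting period.
inferences, energy_kwh = 1_000_000, 50
print(work_per_kwh(inferences, energy_kwh), "inferences per kWh")
print(grams_per_unit(inferences, energy_kwh, 400, 10_000), "g CO2e per inference")
```

Under these assumptions the workload delivers 20,000 inferences per kWh at about 0.03 g CO2e each, a third of which comes from the embodied share.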

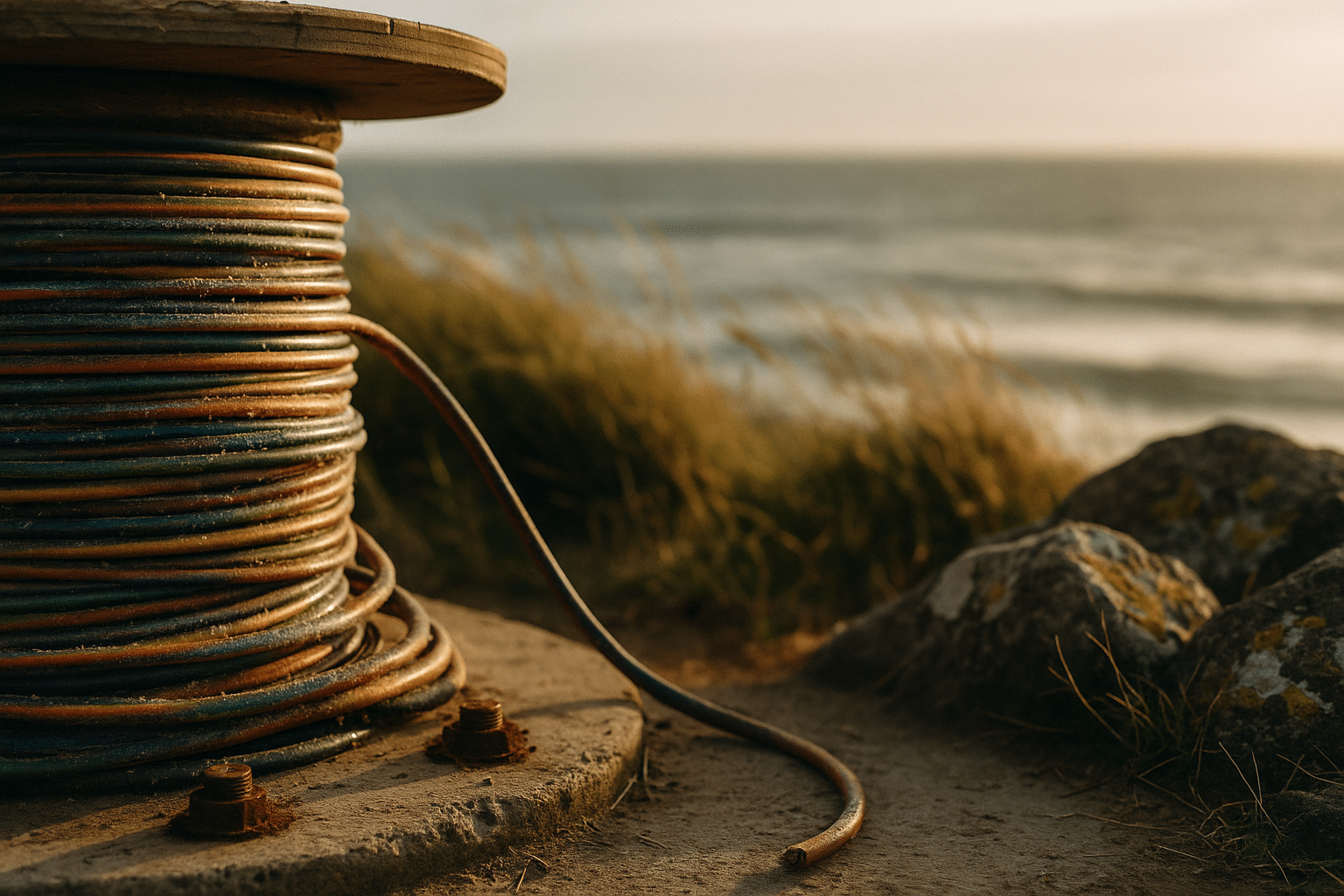

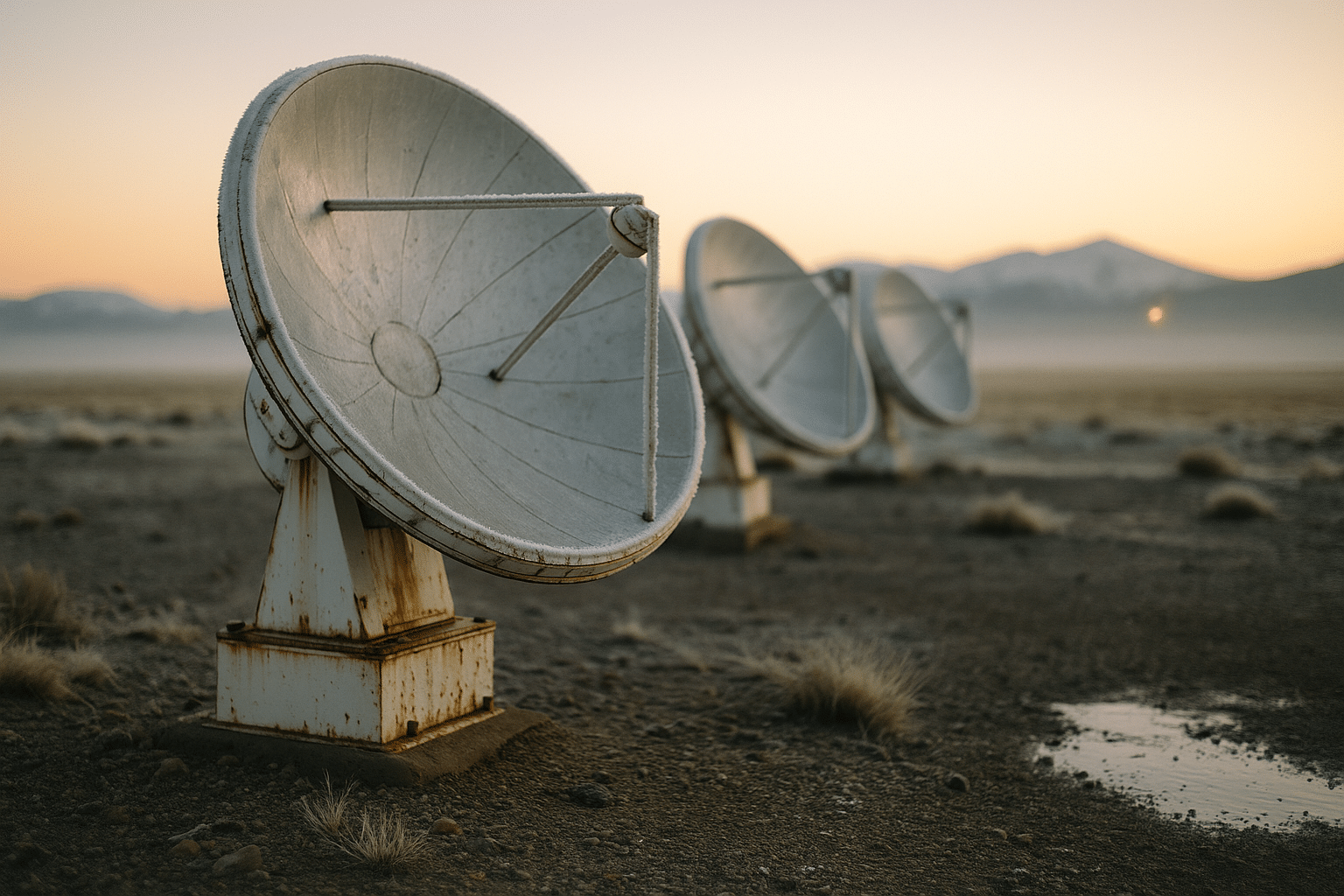

Connectivity: Fiber, Terrestrial Wireless, and Orbit

Computing without connectivity is a superb engine on an island. Modern systems depend on links that are faster, denser, and more diverse than ever: glass under streets, radios across cities, and satellites moving quietly above. Each has distinct strengths; together they form a resilient fabric that keeps services responsive and reachable.

Fiber is the backbone. Glass fiber has low loss and high capacity, and a single strand can carry many wavelength channels via multiplexing. For metro and backbone routes, fiber offers predictable latency with minimal jitter, making it ideal for synchronization and bulk data movement. Its constraint is geography: trenching streets and crossing difficult terrain takes time and capital, so last-mile connectivity often blends other media.

Terrestrial wireless fills those gaps. Fifth-generation mobile networks combine wide coverage with low-latency targets, and private deployments let factories and campuses use dedicated spectrum for more predictable performance. Higher frequencies deliver very high throughput at short range, while lower bands travel farther and penetrate walls better. Newer generations of wireless local networks push multi-gigabit speeds in homes and shared spaces, with better spectrum sharing and scheduling to reduce contention. Typical round-trip latencies range from single-digit milliseconds on local links to a few tens of milliseconds across regional paths.

Space-based links complete the picture. Low-orbit constellations shrink round-trip times to a few tens of milliseconds—much closer to terrestrial routes than older, higher orbits—while covering oceans and sparsely populated regions. This enables remote monitoring, emergency connectivity, and backup paths when fiber or terrestrial links go dark. Weather, terminal alignment, and regulatory constraints still matter, so design for failover: automatically reroute around congestion, and keep local caches to serve critical reads.
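Geometry explains much of the gap between orbits. The sketch computes a lower bound for a relay through one satellite, assuming the satellite sits directly above both the user and a nearby ground station, radio travels at the speed of light, and processing and terrestrial backhaul are ignored. The 550 km figure is an illustrative low-orbit altitude; 35,786 km is the geostationary altitude.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458


def bent_pipe_rtt_ms(altitude_km: float) -> float:
    """Lower-bound RTT: user -> satellite -> ground station, then the reply back.

    Assumes the satellite is directly overhead of both endpoints and ignores
    slant range, processing, queuing, and terrestrial routing.
    """
    path_km = 4 * altitude_km  # up and down for the request, up and down for the reply
    return path_km / SPEED_OF_LIGHT_KM_PER_S * 1000


for orbit, altitude in [("geostationary", 35_786), ("low orbit", 550)]:
    print(f"{orbit:>13}: >= {bent_pipe_rtt_ms(altitude):.1f} ms")
```

The geostationary floor alone approaches half a second, while the low-orbit floor is a few milliseconds; slant paths, routing, and scheduling push observed low-orbit round trips into the tens of milliseconds.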

Network-aware applications outperform network-agnostic ones. Practical patterns include:

– Multihoming: bond cellular, fixed wireless, and fiber for continuity.

– Adaptive bitrate and model selection: tailor fidelity to current conditions.

– Edge caches and regional mirrors: place hot data close to demand.

– Observability at the packet and session layers: detect jitter and loss early.

– Security by default: encrypt end-to-end and rotate keys automatically.
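The multihoming pattern needs a policy for choosing among links. The sketch below is a hypothetical selector fed by periodic health probes: it prefers the lowest-latency link within a loss budget (2% here, an assumption), degrades to the least lossy live link, and returns `None` when fully offline so the application can fall back to local caches.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Link:
    name: str
    rtt_ms: float
    loss_pct: float
    up: bool = True


def pick_link(links: List[Link], max_loss_pct: float = 2.0) -> Optional[str]:
    """Prefer the lowest-RTT healthy link; degrade to the least lossy live link."""
    live = [link for link in links if link.up]
    if not live:
        return None  # fully offline: serve critical reads from local caches
    healthy = [link for link in live if link.loss_pct <= max_loss_pct]
    if healthy:
        return min(healthy, key=lambda link: link.rtt_ms).name
    return min(live, key=lambda link: link.loss_pct).name


links = [Link("fiber", 8, 0.1, up=False), Link("cellular", 45, 0.5), Link("satellite", 35, 3.0)]
print(pick_link(links))
```

With fiber down and the satellite link over its loss budget, traffic shifts to cellular even though its latency is higher.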

The outcome is not just speed; it is reliability under stress. Systems that anticipate variability—by protocol choice, buffering strategy, and routing policy—retain composure when the unpredictable happens.

A Pragmatic Roadmap for Builders and Decision-Makers

The difference between a flashy pilot and a durable platform is method. Teams that deliver repeated wins share habits: they define outcomes crisply, instrument relentlessly, and scale only what proves valuable. The following roadmap turns that ethos into steps you can apply across domains, from intelligent assistants to connected machines.

Start with discovery that ends in numbers. Instead of “improve responsiveness,” write “reduce p95 latency from 180 ms to 90 ms while holding error rates below 0.5%.” Tie quality to user value: fewer abandoned sessions, faster task completion, fewer support tickets. Map dependencies across compute placement, model choices, and connectivity. Identify guardrails for safety, privacy, and cost before you write code.
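A numeric goal like that can be checked automatically. The sketch uses the nearest-rank definition of a percentile, one of several in common use, and hard-codes the example targets (90 ms at p95, error rate below 0.5%) as defaults.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_goal(latencies_ms, errors, requests, p95_target_ms=90, max_error_rate=0.005):
    """True when both the latency target and the error budget hold."""
    return percentile(latencies_ms, 95) <= p95_target_ms and errors / requests < max_error_rate


samples = [40 + (i % 60) for i in range(1_000)]  # synthetic latencies, 40-99 ms
print(percentile(samples, 95), meets_goal(samples, errors=3, requests=1_000))
```

Running a check like this in CI or on a dashboard turns the goal into a pass/fail signal rather than a judgment call.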

Prototype with constraints. Use a small slice of real data and realistic limits on power, memory, and bandwidth. Prefer simple baselines; they illuminate what complex approaches must beat. Add observability immediately: trace IDs, structured logs, and metrics for latency, throughput, cost per action, and energy per action. Establish a feedback channel with pilot users, and log qualitative notes alongside quantitative dashboards.
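Instrumentation can start as one structured log line per action. In the sketch below the field names are illustrative rather than a standard schema; `cost_usd` and `energy_wh` are assumed to come from the caller's own metering, and extra keyword fields can carry qualitative pilot notes next to the numbers.

```python
import json
import time
import uuid


def log_action(action, started, cost_usd, energy_wh, trace_id=None, **fields):
    """Emit one JSON log line with a trace ID, latency, cost, and energy for an action."""
    record = {
        "trace_id": trace_id or uuid.uuid4().hex,
        "action": action,
        "latency_ms": round((time.perf_counter() - started) * 1000, 2),
        "cost_usd": cost_usd,
        "energy_wh": energy_wh,
        **fields,
    }
    print(json.dumps(record, sort_keys=True))
    return record


t0 = time.perf_counter()
log_action("summarize", t0, cost_usd=0.0004, energy_wh=0.3,
           cohort="pilot", note="user asked for shorter output")
```

Passing the same `trace_id` through every tier lets a single request be followed from device to edge to cloud.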

Scale deliberately. Automate tests that cover accuracy, drift, security posture, and performance under load. Treat models, prompts, and retrieval indexes as versioned artifacts. Roll out changes gradually—canary first, then expand by cohort—and keep a hard rollback button. Update documentation as part of the deployment, not after.
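A staged rollout with a hard rollback gate can be sketched in a few lines. The stage percentages and the 0.1-percentage-point error tolerance are assumptions; hashing user IDs into fixed buckets keeps assignment deterministic, so each stage includes every user from the previous one.

```python
import hashlib

STAGES = [1, 5, 25, 100]  # percent of users per stage (illustrative)


def in_rollout(user_id: str, percent: int) -> bool:
    """Deterministically bucket users 0-99 so each stage is a superset of the last."""
    bucket = int(hashlib.sha256(user_id.encode()).hexdigest(), 16) % 100
    return bucket < percent


def next_stage(current_pct, canary_error_rate, baseline_error_rate, tolerance=0.001):
    """Advance one stage if the canary is no worse than baseline; otherwise roll back to 0%."""
    if canary_error_rate > baseline_error_rate + tolerance:
        return 0  # hard rollback
    later = [s for s in STAGES if s > current_pct]
    return later[0] if later else current_pct


print(next_stage(1, 0.004, 0.004), next_stage(5, 0.02, 0.004))
```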

Invest in people and process. Cross-train developers and operators so ownership does not split at the worst possible moment. Build a review ritual where incidents become learning, not blame. Maintain a register of risks with owners and mitigation plans. For procurement, score vendors and components on interoperability, repairability, and transparency; negotiate service levels that measure what users feel, not just what dashboards show.
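Vendor scoring on those criteria can be made explicit with a weighted sum. The weights and the 0-5 rating scale below are hypothetical; the point is that writing them down makes trade-offs reviewable rather than implicit.

```python
WEIGHTS = {"interoperability": 0.4, "repairability": 0.3, "transparency": 0.3}  # assumption


def score(ratings):
    """Weighted score from 0-5 ratings; a missing criterion counts as 0."""
    return sum(weight * ratings.get(criterion, 0) for criterion, weight in WEIGHTS.items())


vendor_a = {"interoperability": 4, "repairability": 3, "transparency": 5}
vendor_b = {"interoperability": 5, "repairability": 1, "transparency": 2}
print(round(score(vendor_a), 2), round(score(vendor_b), 2))
```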

Keep the playbook visible:

– Outcomes over outputs: define success in user terms.

– Small, well-instrumented steps: observe, learn, and iterate.

– Right compute in the right place: device, edge, cloud by need.

– Responsible by design: privacy, safety, fairness as defaults.

– Measure total cost of ownership, including energy and lifespan.

Summary: Technology rewards curiosity paired with discipline. If you anchor decisions in clear goals, choose architectures that match constraints, and cultivate steady learning, you will ship systems that feel modern today and remain dependable tomorrow.