Exploring Technology: Innovations and Tech Advancements

Outline:

– Why technology’s pace and pervasiveness matter now

– The data and cloud backbone: costs, control, and architecture trade‑offs

– AI beyond buzzwords: practical patterns, constraints, and outcomes

– Computing at the edge: sensors, security, and real-time decisions

– A responsible, resilient roadmap: skills, governance, and strategy

Why Technology’s Pace and Pervasiveness Matter

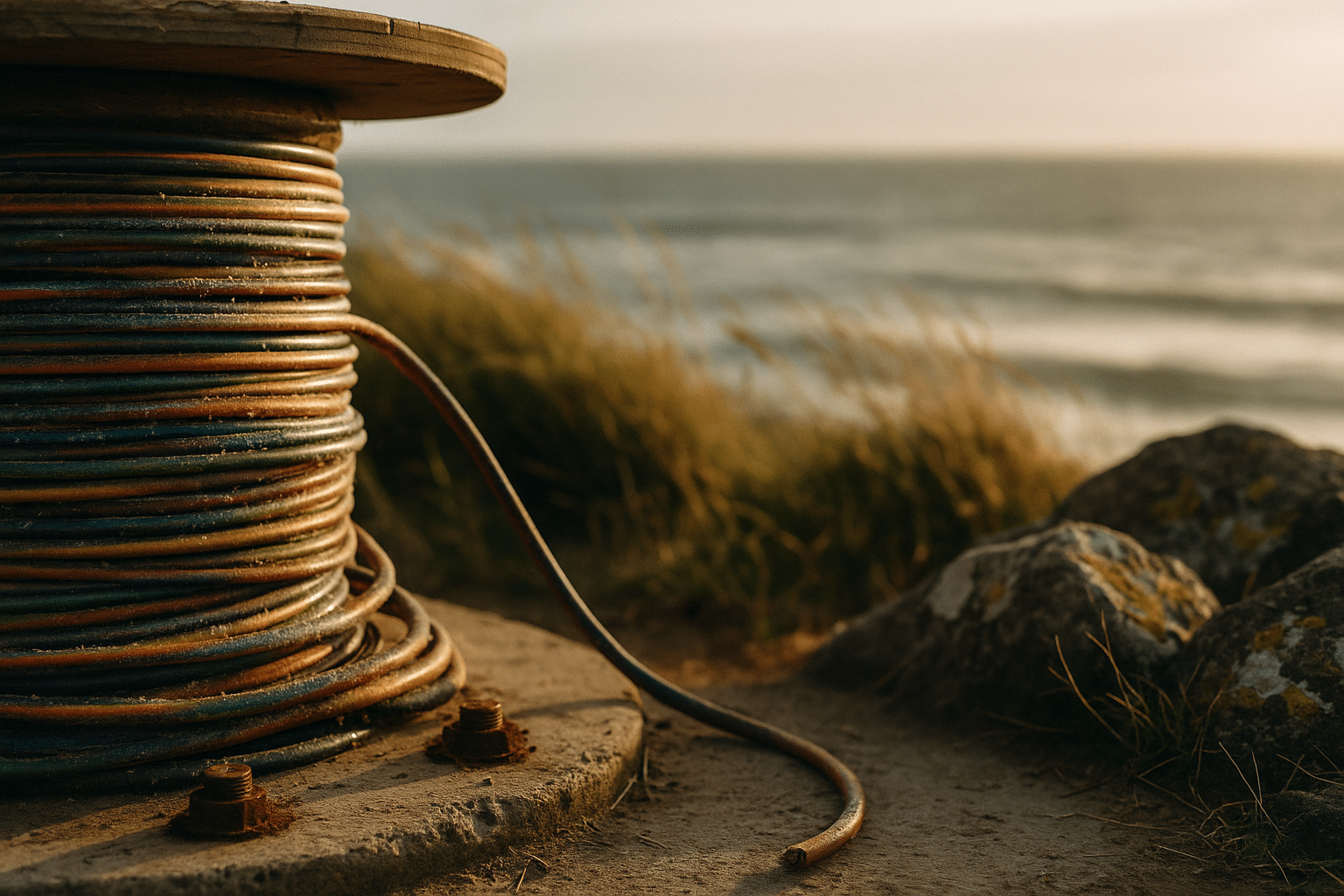

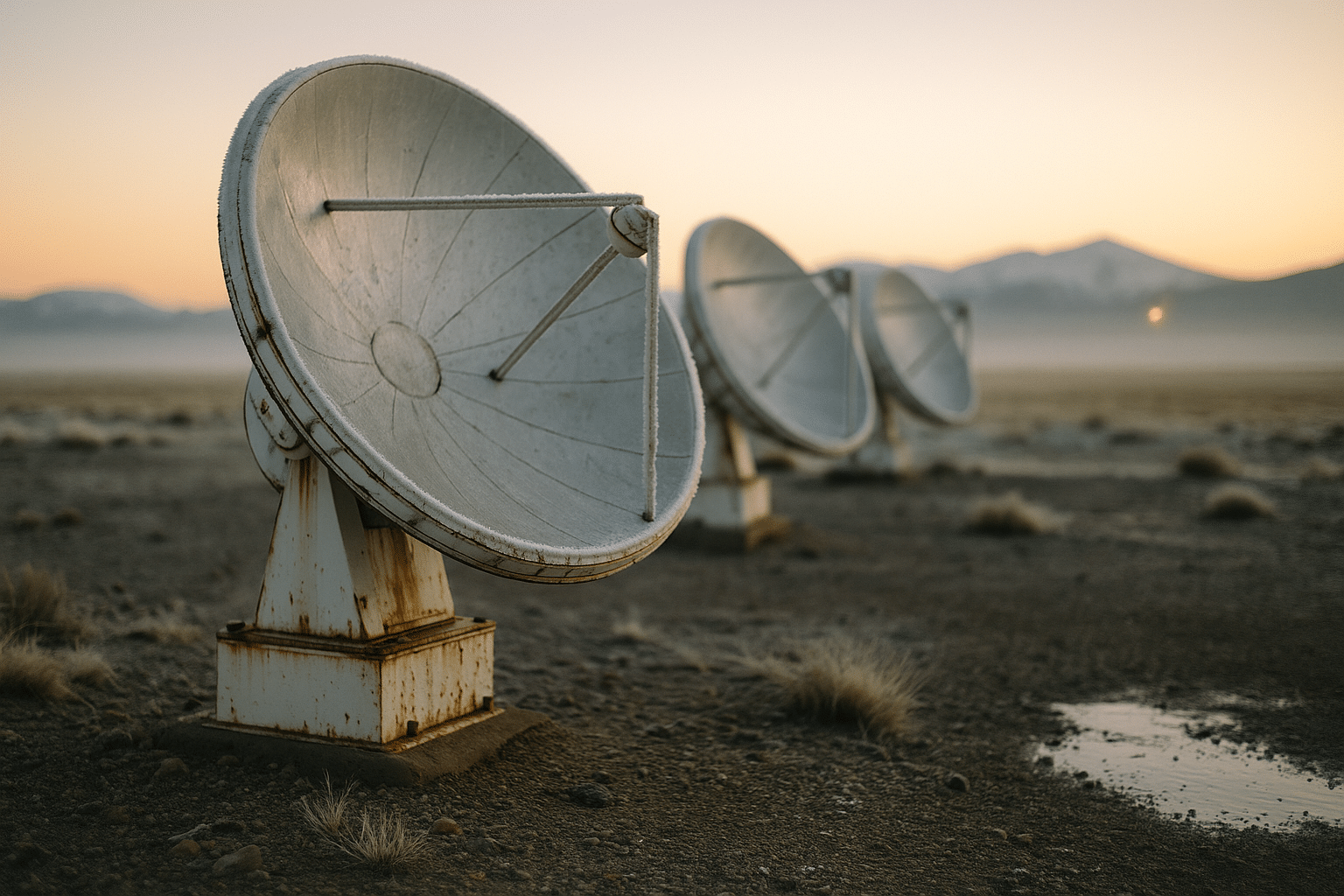

Technology is the quiet infrastructure under our routines, the metronome that sets the tempo for commerce, communication, and creativity. It now spans an arc from satellites beaming weather models to tiny sensors timing irrigation in orchards. Consider the sheer volume of data humans and machines produce each day; analysts have charted growth from terabytes to petabytes to zettabytes within a generation, and the curve still climbs. That expansion changes what’s possible, but also what’s prudent: more data can sharpen decisions while amplifying risks if collected or used carelessly. Understanding where value concentrates—compute, connectivity, or content—has become a leadership skill, not just a technical one.

Three impulses shape the moment. First, digitization: more processes encoded as software, from supply chains to service desks. Second, instrumentation: physical spaces acquiring senses through cameras, meters, and embedded chips. Third, automation: rules and models translating inputs into actions with minimal human intervention. When combined, these forces create compounding effects. A logistics hub that measures dwell time, predicts bottlenecks, and automatically reroutes shipments doesn’t merely save minutes; it recalibrates expectations for customers and competitors alike.

Still, speed without direction can be costly. Decision-makers face classic trade-offs that technology reframes but does not eliminate: agility versus stability, openness versus control, centralization versus autonomy. The practical path is to build capabilities that are resilient to change: adaptable data models, interoperable interfaces, transparent governance, and security that assumes failure rather than perfection. A helpful mental model is to invest along three horizons: operations that must never fail, experiments that may fail cheaply, and learning loops that ensure failures teach quickly.

For readers navigating careers or roadmaps, the signal is clear. Technology is no longer delivered to the edge of the organization; it is the organization’s edge. Momentum accrues to teams that can integrate disciplines (product, data, risk, and design) while keeping outcomes measurable. Useful starter prompts include:

– What decision are we trying to improve?

– What data truly reflects that decision?

– What latency and accuracy are acceptable?

– How will we know this is working in 90 days?

– What happens when it doesn’t?

The Data and Cloud Backbone: Architecture, Costs, and Control

Behind every visible app sits an invisible choreography of storage tiers, networks, and services that shuttle bits efficiently and (ideally) securely. Public cloud platforms have grown for years at a pace that outstrips many IT budgets, driven by convenience, global reach, and rapid provisioning. Yet the bill is a blend of compute hours, input/output operations, data egress, and managed service premiums that can balloon as workloads scale. The response is not a blanket rule but thoughtful placement: match each workload to the environment where its economics, governance, and performance align.

Think in terms of gravity. Data tends to anchor applications nearby because moving large datasets is slow and expensive. If your analytics pipeline reads the same dataset thousands of times daily, co-locating storage and compute reduces chatter and cost. Conversely, content delivery near users trims latency and smooths spikes. Some organizations are building hybrid fabrics—mixing centralized clouds, regional data centers, and on-premise clusters—so that sensitive records remain in controlled environments while elastic tasks burst to utility compute during peaks. The tools to orchestrate these fabrics are maturing, but the operating model must mature with them.
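
To make the gravity argument concrete, here is a minimal back-of-the-envelope sketch in Python. The function names, the decimal units (1 TB = 10^12 bytes), the `efficiency` factor, and the per-gigabyte price in the example are all illustrative assumptions, not figures from any provider.

```python
def transfer_hours(size_tb: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Estimate hours needed to move a dataset over a network link.

    Assumes decimal units (1 TB = 10**12 bytes, 1 Gbps = 10**9 bits/s).
    `efficiency` is a hypothetical fraction of the link that is usable.
    """
    if link_gbps <= 0 or efficiency <= 0:
        raise ValueError("link_gbps and efficiency must be positive")
    bits = size_tb * 1e12 * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


def egress_cost(size_tb: float, usd_per_gb: float) -> float:
    """Estimate the transfer charge for a dataset at a flat per-GB price.

    The price is a placeholder; real pricing is usually tiered.
    """
    return size_tb * 1000 * usd_per_gb


# 50 TB over a fully usable 1 Gbps link
print(round(transfer_hours(50, 1.0, efficiency=1.0), 1))  # 111.1
```

Even under these generous assumptions, a single copy of a 50 TB dataset ties up the link for more than four days, which is why repeated reads favor co-locating storage and compute.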

Cost clarity is a capability. Teams that routinely tag resources, forecast usage, and right-size instances report fewer surprises. Practical moves include:

– Establish budgets per service and automate alerts when thresholds near.

– Reserve capacity for predictable baselines; keep on-demand headroom for bursts.

– Compress and partition data with query patterns in mind, not just storage savings.

– Prefer open data formats to avoid lock-in and simplify migrations.

– Design clear data lifecycle policies so cold data doesn’t ride hot tiers.
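
The per-service budget and alert idea above can be sketched in a few lines of Python. This is a minimal illustration, not a billing integration: the `ServiceBudget` type, the `budget_alerts` function, and the 80% warning ratio are hypothetical choices, and real spend figures would come from whatever cost-reporting export your platform provides.

```python
from dataclasses import dataclass


@dataclass
class ServiceBudget:
    name: str
    monthly_budget: float  # budget for the month, in any currency unit
    spend_to_date: float   # spend so far this month, same unit


def budget_alerts(budgets, warn_ratio=0.8):
    """Return (service name, status) pairs for services near or over budget.

    Status is "warning" at or above `warn_ratio` of the budget and
    "over" once spend reaches the budget itself.
    """
    alerts = []
    for b in budgets:
        ratio = b.spend_to_date / b.monthly_budget
        if ratio >= 1.0:
            alerts.append((b.name, "over"))
        elif ratio >= warn_ratio:
            alerts.append((b.name, "warning"))
    return alerts


example = [
    ServiceBudget("storage", 1000.0, 850.0),
    ServiceBudget("compute", 2000.0, 2100.0),
    ServiceBudget("cdn", 500.0, 100.0),
]
print(budget_alerts(example))  # [('storage', 'warning'), ('compute', 'over')]
```

In practice the warning ratio is worth tuning per service: a steady baseline can tolerate a high threshold, while a bursty workload may need an earlier signal.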

Security travels with architecture choices. Centralization eases policy enforcement but can create tempting single targets. Distribution reduces blast radius but raises coordination complexity. Zero-trust patterns, least-privilege roles, immutable logs, and regular incident simulations help in any topology. Regulation adds another layer: residency requirements, sector rules, and audit trails. The safest approach is transparency—document flows, justify retention, and verify controls. In short, the backbone is not just infrastructure; it’s a portfolio balancing performance, cost, compliance, and future flexibility.

AI in Practice: Model Choices, Constraints, and Measurable Outcomes

Artificial intelligence has leapt from research labs into daily workflows, but its impact depends on grounded choices. Start with the problem, not the model. Classification, ranking, forecasting, summarization, and generation each reward different architectures and data regimes. Large models can generalize across tasks and languages but often demand greater compute, careful prompt design, and guardrails to reduce off-target outputs. Smaller or specialized models trade breadth for speed, privacy, and cost control—appealing when latency targets are tight or data cannot leave a device or region.

Robust AI delivery is a pipeline, not a demo. Data collection needs consent and clarity; labeling benefits from well-written guidelines; training requires reproducibility; evaluation demands metrics tied to business outcomes. A practical scorecard might track:

– Accuracy or recall appropriate to the task.

– Latency at the 95th percentile, not just the average.

– Cost per successful prediction or generated artifact.

– Drift indicators showing when performance slips.

– Safety checks against restricted content or high-risk use.

This kind of instrumentation turns “AI” from a buzzword into a service you can manage.
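
Two of the scorecard items, tail latency and cost per successful prediction, are simple to compute once requests are logged. The sketch below is one possible shape, assuming you already have a list of latencies, a list of success flags, and a total cost for the same period; the function names and the nearest-rank percentile method are illustrative choices.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile for an integer `pct` between 1 and 100."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def scorecard(latencies_ms, outcomes, total_cost):
    """Summarize latency and unit cost for a batch of logged requests.

    `outcomes` holds one boolean per request (True = successful prediction).
    """
    successes = sum(1 for ok in outcomes if ok)
    return {
        "mean_latency_ms": sum(latencies_ms) / len(latencies_ms),
        "p95_latency_ms": percentile(latencies_ms, 95),
        "cost_per_success": total_cost / successes if successes else float("inf"),
    }


# 90 fast requests and 10 slow ones: the mean hides the slow tail.
latencies = [20] * 90 + [400] * 10
card = scorecard(latencies, [True] * 80 + [False] * 20, total_cost=4.0)
print(card["mean_latency_ms"], card["p95_latency_ms"])  # 58.0 400
```

The example shows why the scorecard asks for the 95th percentile: a mean of 58 ms looks healthy while one request in ten takes 400 ms.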

There are also hard edges. Models interpolate from examples and can overfit, underfit, or hallucinate when data is sparse or prompts are ambiguous. Sensitive domains—healthcare, finance, public safety—require explainability, auditability, and human oversight. Privacy-enhancing techniques such as differential privacy, federated learning, or synthetic data can reduce risk where raw data is constrained. At deployment, caching, distillation, and retrieval help balance quality and speed: retrieval adds context, distillation compresses capability into lighter models, and caching avoids recomputing frequent answers.
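
Of the three deployment techniques, caching is the easiest to illustrate. The following is a minimal sketch of a time-limited cache placed in front of an expensive call; `TTLCache`, `get_or_compute`, and the injectable `clock` parameter are hypothetical names, and a production cache would also need size limits and a policy for answers that must never be stale.

```python
import time


class TTLCache:
    """Cache results for a fixed time so frequent questions skip recomputation."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._store = {}     # key -> (stored_at, value)

    def get_or_compute(self, key, compute):
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # fresh hit: reuse the earlier answer
        value = compute(key)  # miss or expired: call the expensive function
        self._store[key] = (now, value)
        return value


def slow_answer(question):
    """Stand-in for a model call; purely illustrative."""
    return question.upper()


cache = TTLCache(ttl_seconds=60)
cache.get_or_compute("opening hours?", slow_answer)  # computed
cache.get_or_compute("opening hours?", slow_answer)  # served from cache
```

The time-to-live is the lever that trades freshness against cost: a longer value saves more computation but serves older answers.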

Finally, align incentives. If a content team is measured on quality and compliance while an engineering team is measured solely on output volume, misalignment will manifest as rework. Bring stakeholders from multiple disciplines into early design reviews. Define redlines (uses you will not ship) and escalation paths (what happens when outputs misbehave). Write documentation as if the future team inheriting your system has none of your context, because one day that will be true. The result is AI that serves users reliably, not theatrically.

Edge, IoT, and Real‑Time Decisions Where Work Happens

Much of the world’s work does not occur in glass-walled offices; it happens on factory floors, in fields, along roads, and across supply depots. That is where edge computing and the Internet of Things translate bits into bolts and back again. By placing compute near sensors and actuators, organizations cut latency, preserve bandwidth, and continue operating even when backhaul links falter. The effect is practical: a camera that flags a safety hazard in milliseconds, a pump that self-adjusts to reduce energy draw, a shelf that reorders only what sells.

However, edge value emerges from systems thinking. Devices must be provisioned securely, identities rotated, firmware updated, and data curated before it enters analytics pipelines. Short bursts of noisy telemetry benefit from filtering and feature extraction locally, sending only signals that matter upstream. Architectures often look like layered sieves: raw events at the periphery, aggregated insights in regional nodes, and long-term learning in centralized training environments. Each layer narrows volume while amplifying meaning.
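
The local filtering step described above might look like the following sketch. It assumes readings arrive in fixed windows and that a simple maximum-value threshold decides what is worth sending upstream; both the function names and that rule are illustrative, and a real device would likely use domain-specific features.

```python
from statistics import mean, pstdev


def summarize_window(readings):
    """Reduce a window of raw readings to a handful of features."""
    return {
        "count": len(readings),
        "mean": mean(readings),
        "min": min(readings),
        "max": max(readings),
        "stdev": pstdev(readings),
    }


def select_upstream(windows, max_threshold):
    """Keep only summaries of windows whose peak exceeds the threshold.

    Empty windows are skipped; raw readings never leave the device.
    """
    selected = []
    for readings in windows:
        if not readings:
            continue
        summary = summarize_window(readings)
        if summary["max"] > max_threshold:
            selected.append(summary)
    return selected


windows = [[20.0, 20.5, 21.0], [], [20.0, 35.0, 22.0]]
print(len(select_upstream(windows, max_threshold=30.0)))  # 1
```

Each window shrinks to five numbers, and quiet windows produce no traffic at all, which is the "narrow volume while amplifying meaning" effect at the outermost layer.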

Use cases illustrate the range. In manufacturing, vibration and temperature readings correlate with equipment wear; models predict failure hours or days ahead, scheduling maintenance in quieter windows. In agriculture, soil moisture, weather, and imagery guide irrigation and spraying, conserving resources while improving yield. In mobility, roadside units talk to vehicles to coordinate flows and warn of hazards. For facilities, smart meters track consumption patterns and trigger demand response during grid stress. These are not distant hypotheticals; pilot-to-production pipelines have matured enough that return on investment can be observed within quarters when scoped well.

Security merits extra emphasis because the edge is messy. Devices get lost, tampered with, or misconfigured. Helpful patterns include:

– Secure boot and signed firmware.

– Network segmentation so compromised devices cannot roam.

– Local secrets vaults rather than hardcoded keys.

– Health checks that quarantine misbehaving nodes.

– Event logs mirrored to append-only storage.

The human layer matters too: clear runbooks for field technicians, spare units for rapid swap, and dashboards that fit on small screens in harsh light. Real-time decisions demand real-world empathy.
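
As one illustration of the health-check pattern, the sketch below quarantines a node after a run of consecutive failed checks. The `HealthMonitor` class, the three-failure default, and the status strings are assumptions made for the example; what "quarantine" means in practice (revoking credentials, moving the device to an isolated network segment) depends on the deployment.

```python
from collections import defaultdict


class HealthMonitor:
    """Track consecutive failed health checks and quarantine repeat offenders."""

    def __init__(self, max_failures=3):
        self.max_failures = max_failures
        self._failures = defaultdict(int)  # node_id -> consecutive failures
        self.quarantined = set()

    def report(self, node_id, healthy):
        """Record one health check result and return the node's status."""
        if node_id in self.quarantined:
            return "quarantined"  # stays isolated until released manually
        if healthy:
            self._failures[node_id] = 0
            return "ok"
        self._failures[node_id] += 1
        if self._failures[node_id] >= self.max_failures:
            self.quarantined.add(node_id)
            return "quarantined"
        return "degraded"

    def release(self, node_id):
        """Return a node to service, for example after a technician swap."""
        self.quarantined.discard(node_id)
        self._failures[node_id] = 0


monitor = HealthMonitor(max_failures=2)
print(monitor.report("pump-7", healthy=False))  # degraded
print(monitor.report("pump-7", healthy=False))  # quarantined
```

Requiring an explicit `release` keeps a flapping device from rejoining on its own, which fits the runbook-driven field process described above.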

Conclusion: A Responsible, Resilient Roadmap for the Next Decade

The through-line across data backbones, AI services, and edge deployments is governance that accelerates rather than obstructs. Good governance clarifies goals, aligns incentives, and makes safe paths the easy paths. For executives, that means tying technology bets to value hypotheses and testable metrics. For product leaders, it means defining target users, acceptable latency windows, and measurable quality. For engineers, it means building observability into the fabric and treating documentation as a deliverable. For risk and compliance teams, it means shifting from point-in-time audits to continuous assurance with transparent logs and repeatable checks.

A practical playbook starts small and compounds. Pick one decision worth improving, such as forecasting demand variability, triaging support tickets, or scheduling maintenance. Observe it, measure it, and redesign it with data and automation. Then institutionalize what worked:

– Shared taxonomies so teams mean the same thing when they say “customer” or “order.”

– Reusable components for ingestion, monitoring, and access control.

– Training programs that pair theory with the tools staff actually use.

– Post-implementation reviews that capture wins and missteps in equal detail.

Momentum grows when the system makes doing the right thing routine.

Resilience is as much about culture as code. Expect surprises (supply shocks, new regulations, model drift, shifting user behavior) and rehearse responses. Mix horizons: keep a portfolio of sure bets, promising pilots, and exploratory research. Watch cost curves and constraints: compute per watt, storage cost per gigabyte, bandwidth cost per gigabyte transferred, and latency budgets from device to cloud and back. Design for recoverability rather than pretending outages will never occur. Celebrate teams that surface risks early and learn in public; that habit prevents expensive silence.

Above all, keep users in focus. The most admired systems disappear into the background, freeing people to do more meaningful work. If you carry forward a single heuristic, make it this: clarify the decision, constrain the problem, instrument the system, and iterate openly. Do that consistently and technology becomes more than a stack; it becomes a trustworthy partner that scales your judgment without eclipsing it.