Exploring Science: Latest Discoveries and Advancements in Science

Outline

– Why science and technology advances matter now

– Edge AI and ambient intelligence

– Quantum and high-performance computing

– Biotech and health technology

– Clean energy and new materials

– Conclusion: Navigating the next decade

Introduction

Technology is how science gets traction in the real world. From chips that crunch data at the edge to materials that convert sunlight with remarkable efficiency, today’s breakthroughs are less about isolated eureka moments and more about systems that work together. The result is a shift from lab-bench novelty to field-tested tools that cut latency, reduce waste, and open new frontiers for medicine, computing, and climate resilience. In other words, progress now looks like meaningful integration.

This article connects recent advances to the choices readers make—what to learn, where to invest attention, and how to separate signal from hype. Each section blends plain-language explanations with comparisons, indicative figures, and concrete examples. You will see what is emerging, what is ready enough to matter, and what questions to ask before adopting or advocating for a new approach.

Edge AI and Ambient Intelligence: From Cloud-Only to Here-and-Now

Artificial intelligence has matured from a cloud-centric model into a layered ecosystem that includes powerful, efficient computing at the edge. On-device accelerators now reach tens of trillions of operations per second at single-digit watts, enabling inference for speech, vision, and sensor fusion without constant network round trips. This shift is not cosmetic; it changes the latency, privacy, and cost profile of a deployment. When a camera can detect defects on a factory line in less than 30 milliseconds locally, or an agricultural sensor can grade crop stress in the field, value accrues where data originates.

Three technical levers make this possible. First, quantization reduces the precision of model weights from 32-bit floating point to 8-bit or even 4-bit representations while preserving accuracy in many tasks, cutting weight memory by 4x (8-bit) to 8x (4-bit) and shrinking energy use. Second, sparsity exploits the fact that many neural network weights or activations are effectively zero, producing 2–3x throughput gains on compatible hardware. Third, distillation trains a compact student model to reproduce the behavior of a larger teacher with far fewer parameters, often within a few percentage points of baseline accuracy. Combined, these methods turn heavyweight models into nimble ones ready for constrained devices.
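To make the first lever concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The weight values and the function names (`quantize_int8`, `dequantize`) are illustrative assumptions, not taken from any particular framework; production toolchains typically add per-channel scales and calibration data.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats onto integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


# Illustrative weights only; real layers hold thousands to millions of values.
weights = [0.82, -1.27, 0.05, 0.0, 0.64, -0.33]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# 32-bit floats use 4 bytes per weight; 8-bit integers use 1 byte.
bytes_fp32 = 4 * len(weights)
bytes_int8 = 1 * len(weights)

print("quantized:", q)
print("max round-trip error:", max_error)
print("memory reduction factor:", bytes_fp32 // bytes_int8)
```

The 4x figure falls straight out of the byte counts; whether accuracy survives depends on the model and task, which is why the rounding error is worth measuring rather than assuming.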

Edge AI also intersects with resilience and privacy. Local processing maintains functionality during outages and minimizes exposure of raw data. That matters for health monitoring, industrial controls, and smart buildings, where telemetry can be sensitive. In federated learning setups, devices train locally and share gradients or model updates rather than source data, delivering accuracy that can sit within 1–2% of centralized training for many workloads while reducing leakage risk. When privacy regulations restrict data movement, that margin is the difference between launch and limbo.
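The federated pattern described above can be sketched with a toy example. This is a simplified version of federated averaging on a one-parameter linear model; the client data, learning rate, and function names are hypothetical, and real systems add secure aggregation, client sampling, and multiple local steps.

```python
def local_update(weights, data, lr=0.1):
    """One gradient-descent step for the model y = w * x, using only local data."""
    w = weights[0]
    # Gradient of mean squared error with respect to w.
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    return [w - lr * grad]


def federated_average(updates, sizes):
    """Combine client weights, weighting each client by its dataset size."""
    total = sum(sizes)
    dim = len(updates[0])
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total for i in range(dim)]


# Hypothetical clients: each holds private (x, y) pairs generated from y = 3x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (3.0, 9.0)],
]

global_weights = [0.0]
for _ in range(50):
    # Each client trains locally; only the updated weights leave the device.
    updates = [local_update(global_weights, data) for data in clients]
    sizes = [len(data) for data in clients]
    global_weights = federated_average(updates, sizes)

print("learned slope:", round(global_weights[0], 3))
```

Note that the server only ever sees weight vectors and dataset sizes. Shared updates can still leak information in some settings, so this reduces exposure rather than eliminating it.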

Practical benefits show up across sectors:

– Manufacturing: Inline quality inspection, predictive maintenance using vibration and acoustic signatures, and autonomous material handling.

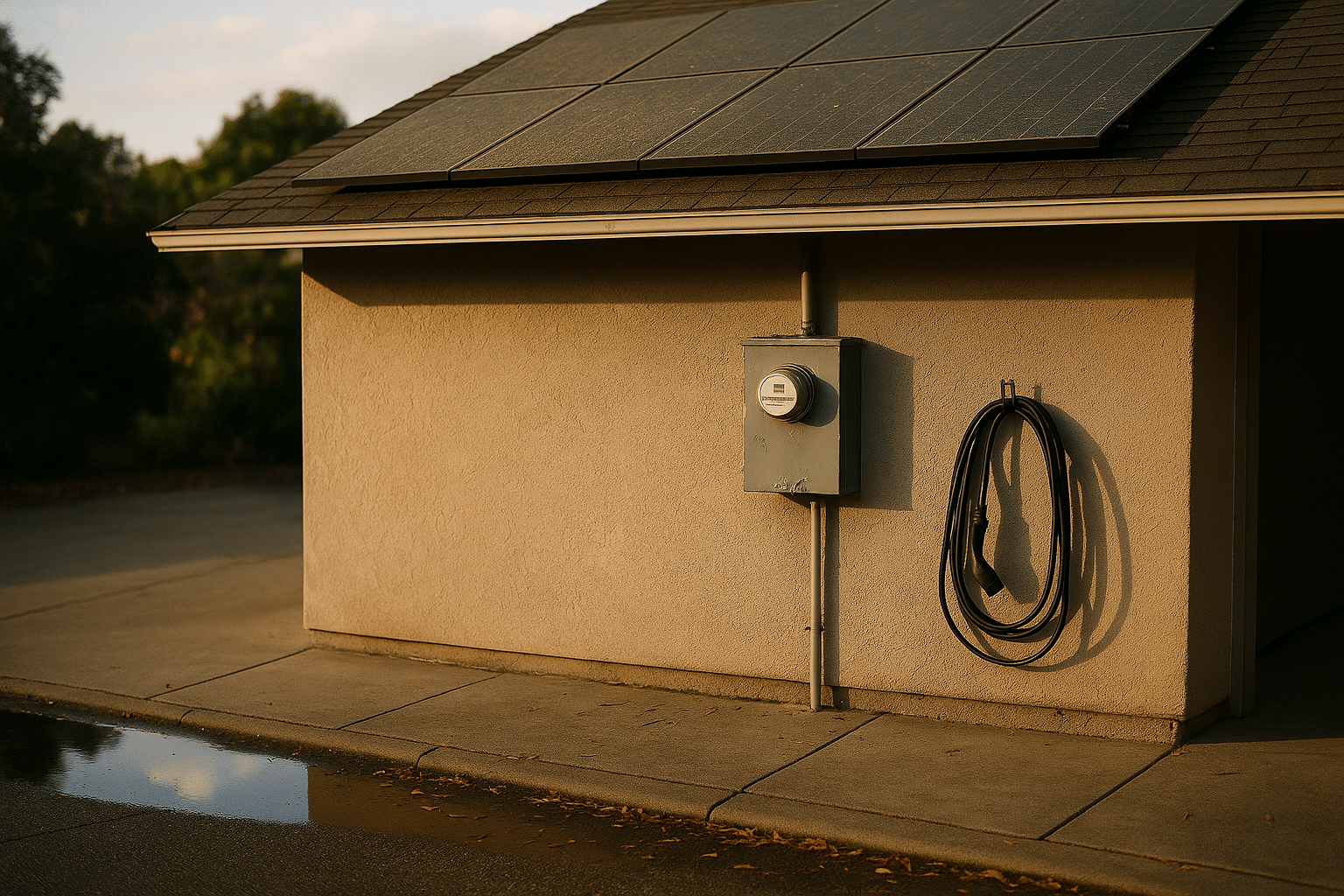

– Energy: Microgrid balancing, fault detection in distribution lines, and rooftop solar optimization based on irradiance and temperature.

– Mobility: Driver assistance that fuses camera, radar, and lidar on-board, with sub-50-millisecond decision loops.

– Retail and logistics: Shelf analytics and asset tracking that work without continuous connectivity.

Trade-offs remain. Edge deployments require lifecycle updates, secure boot chains, and attestation to prevent tampering. Model drift calls for monitoring and occasional retraining. Most systems still blend edge and cloud: quick reflexes happen locally, while trend discovery and heavy training live centrally. The winning pattern is hybrid by design, with clear data flows and cost-aware placement of computation.

Quantum and High-Performance Computing: Complementary Paths to New Capabilities

Breakthroughs in computing are arriving on two fronts: exascale-class supercomputers built on classical architectures and emerging quantum devices targeting problems that resist traditional methods. Exascale systems, capable of around 10^18 floating-point operations per second, are expanding what is tractable in climate modeling, materials discovery, and fluid dynamics. Higher parallelism and faster interconnects mean researchers can simulate finer meshes, longer time horizons, and more coupled variables without collapsing run-time budgets.

Quantum hardware, though still early, has moved from proof-of-concept to controlled experimentation on useful subproblems. Single-qubit gate fidelities exceeding 99% are common in leading platforms, with coherence times adequate for modest-depth circuits. Experiments with hundreds to thousands of interacting atoms or superconducting elements demonstrate scaling potential, while error-mitigation techniques and early error-correction codes reduce noise enough to extract meaningful signals in niche tasks. The realistic picture is hybrid: quantum subroutines handle combinatorial kernels, while classical systems manage orchestration, pre- and post-processing, and verification.
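A back-of-envelope calculation shows why fidelities near 99% translate into "modest-depth" circuits. The sketch below assumes every gate fails independently with the same probability, so the chance that a whole circuit runs cleanly is roughly (1 - error) raised to the number of gates. That is a deliberate simplification: it ignores correlated noise, readout error, idle decoherence, and any benefit from error mitigation. The function names and the 50% target are illustrative choices.

```python
import math


def estimated_circuit_fidelity(gate_error, gate_count):
    """Rough success probability if each gate fails independently."""
    return (1.0 - gate_error) ** gate_count


def max_gates_for_target(gate_error, target_fidelity):
    """Largest gate count that keeps the rough estimate at or above the target."""
    return math.floor(math.log(target_fidelity) / math.log(1.0 - gate_error))


# Compare a 99% gate (error 0.01) with a 99.9% gate (error 0.001).
for error in (0.01, 0.001):
    budget = max_gates_for_target(error, 0.5)
    print(f"gate error {error}: about {budget} gates before fidelity drops below 50%")
```

Under these assumptions, a tenfold drop in gate error buys roughly a tenfold increase in usable circuit size, which is why small improvements in error rates receive so much attention.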

Where does this duo matter today? Consider three domains. In chemistry, variational algorithms can approximate ground-state energies for small molecules with resource counts that are trending downward, guiding catalyst screening. In optimization, quantum-inspired heuristics and annealing-like methods already deliver practical speedups on classical hardware for routing and scheduling, a sign that insights transfer even before full-scale quantum advantage. In machine learning, kernelized quantum approaches hint at new feature spaces; although datasets remain small, these studies suggest pathways to richer representations.

Comparisons help sort substance from spectacle:

– Maturity: Classical exascale is production-grade; quantum is pre-commercial for general use but valuable in research and selected pilots.

– Error handling: Classical methods rely on deterministic arithmetic; quantum requires active suppression and correction of stochastic noise.

– Ecosystem: Classical software stacks are industrial-strength; quantum tooling is improving rapidly but fragmented across hardware modalities.

The prudent strategy is dual-track. Teams should port heavy simulations to modern parallel frameworks to harvest near-term gains, while running scoped quantum experiments tied to concrete metrics—circuit depth, two-qubit error rates, and end-to-end task quality. Budget for expertise in both linear algebra at scale and quantum information basics; the interface between them is where the most compelling progress is likely to appear first.

Biotechnology and Health Tech: Faster Loops from Insight to Intervention

Biotech is compressing the distance between discovery and deployment. Programmable gene editing has moved from blunt cuts to base and prime edits with improved specificity, reducing off-target effects in preclinical studies. Messenger-RNA platforms have shown that bespoke therapeutics can be designed and manufactured on timelines measured in weeks, a capability now being explored for infections, oncology, and rare diseases. Meanwhile, structure-prediction models provide near-experimental accuracy for many proteins, feeding pipelines that propose candidates for binding and function before costly wet-lab cycles.

On the diagnostic front, multi-omic assays integrate genomics, transcriptomics, and proteomics to produce richer signatures. Combined with machine learning, these profiles stratify patients more finely, guiding dosing and inclusion criteria. Wearable devices collect continuous streams of heart rate, movement, sleep staging, and peripheral oxygenation. In validation cohorts, some algorithms have matched or approached clinical-grade thresholds for arrhythmia detection and sleep classification, especially when positive findings are followed up with confirmatory testing. The shift is toward longitudinal, context-aware health, rather than sporadic snapshots.

Clinical translation depends on rigorous evidence and governance. Synthetic control arms can reduce the number of participants needed for some trials, but only when data provenance and model audit trails are impeccable. Privacy-preserving analytics, including secure enclaves and federated learning, allow multi-institution studies while keeping raw records local. Regulators now expect not only efficacy but also post-market monitoring plans, drift detection for algorithms, and patient-friendly explanations of how models influence decisions.

Areas where impact is accumulating:

– Drug discovery: Triage of compound libraries using learned embeddings, narrowing wet-lab focus to the most promising few percent.

– Imaging: Ultra-low-dose reconstruction in radiology that maintains diagnostic quality, reducing exposure in vulnerable populations.

– Hospital operations: Forecasting bed occupancy and staffing needs from seasonal and local signals, improving throughput and care continuity.

– Public health: Wastewater surveillance that detects pathogen trends days to weeks ahead of clinical case surges.

Careful comparison prevents overclaiming. A biomarker that shines in a lab may falter in diverse real-world settings; fairness audits across age, ancestry, and comorbidity profiles are essential. Likewise, a digital therapeutic must prove durable engagement and net clinical benefit, not only short-term adherence. The throughline is disciplined iteration: preprint to peer review, pilot to randomized trial, and prototype dashboard to fully integrated clinical workflow.

Clean Energy and New Materials: Efficiency, Storage, and Systems Thinking

Energy innovation is no longer a single-technology story; it is an orchestration challenge that blends generation, storage, conversion, and demand-side flexibility. Solar and wind have grown from niche to central pillars in many regions, with levelized costs that undercut fossil incumbents in favorable conditions. Tandem solar cells combining perovskites with silicon have achieved lab efficiencies above 30%, hinting at modules that push well past conventional single-junction limits once stability and scaling hurdles are addressed. Field reliability remains the gating factor, but accelerated aging tests show steady improvements, with thousands of hours of illumination and thermal cycling now routine in evaluations.

Storage is diversifying. Lithium-iron-phosphate packs offer robust cycle life and safety with energy densities typically around 150–200 Wh/kg at the cell level, making them attractive for stationary use and cost-sensitive mobility. Sodium-ion chemistries trade energy density for abundant materials and promising cold-weather behavior, targeting 120–160 Wh/kg cells in early deployments. Flow batteries, while bulky, decouple power from energy and suit multi-hour balancing where footprint is less constrained. At the pack scale, large purchases have trended toward costs in the low hundreds of dollars per kilowatt-hour, and continued manufacturing learning rates suggest further declines, subject to commodity price swings.
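The energy-density ranges above translate directly into mass. As a rough illustration, the sketch below computes the cell mass needed for a hypothetical 10 kWh system using the cell-level figures quoted in the text. The 10 kWh target and the function name are assumptions, and real packs weigh more once casing, cooling, and electronics are included.

```python
def cell_mass_kg(energy_kwh, density_wh_per_kg):
    """Mass of cells needed to store a given energy at a given cell-level density."""
    return energy_kwh * 1000.0 / density_wh_per_kg


# Cell-level energy density ranges (Wh/kg) as quoted in the text.
chemistries = {
    "lithium-iron-phosphate": (150.0, 200.0),
    "sodium-ion": (120.0, 160.0),
}

target_kwh = 10.0  # hypothetical system size, chosen for illustration

for name, (low_density, high_density) in chemistries.items():
    heaviest = cell_mass_kg(target_kwh, low_density)
    lightest = cell_mass_kg(target_kwh, high_density)
    print(f"{name}: {lightest:.1f} to {heaviest:.1f} kg of cells for {target_kwh:.0f} kWh")
```

For stationary storage the extra mass of sodium-ion cells rarely matters, which is consistent with the trade the text describes: lower density in exchange for abundant materials.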

Heat and molecules matter as much as electrons. High-efficiency heat pumps deliver coefficients of performance between roughly 2.5 and 4.5 depending on temperature lift, transforming how buildings are heated and cooled. In industry, electrification and thermal storage can shave peak loads and capture waste heat. Green hydrogen, produced by electrolyzers that have seen substantial cost reductions over the past decade, is being tested for steelmaking and long-duration storage. Lifecycle analyses remain critical: upstream emissions, water use, and land impacts vary widely by design and geography.
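The coefficient of performance (COP) is the ratio of heat delivered to electricity consumed, so the quoted range can be turned into a quick comparison. The sketch below uses a hypothetical heat demand of 9 kWh and treats a resistance heater as COP 1.0; the function name and demand figure are illustrative assumptions.

```python
def electricity_needed_kwh(heat_demand_kwh, cop):
    """Electricity required to deliver a given amount of heat at a given COP."""
    if cop <= 0:
        raise ValueError("COP must be positive")
    return heat_demand_kwh / cop


heat_demand_kwh = 9.0  # hypothetical heat demand for illustration

# COP 1.0 represents a resistance heater; 2.5 and 4.5 bracket the range in the text.
for label, cop in (("resistance heater", 1.0),
                   ("heat pump, large temperature lift", 2.5),
                   ("heat pump, small temperature lift", 4.5)):
    used = electricity_needed_kwh(heat_demand_kwh, cop)
    print(f"{label}: {used:.1f} kWh of electricity for {heat_demand_kwh:.0f} kWh of heat")
```

The spread between the two heat pump cases is the practical meaning of "depending on temperature lift": the same device draws noticeably more electricity per unit of heat when the gap between source and delivery temperatures is large.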

New materials underpin many gains:

– Structural: Advanced composites and high-strength alloys reduce mass in vehicles and turbines without sacrificing durability.

– Electrochemical: Solid-state electrolytes promise improved safety and higher energy density if interfacial resistance and manufacturability can be tamed.

– 2D and quantum materials: Exceptional electron mobility and tunable properties open doors in sensing and low-power logic, provided integration with standard fabrication lines advances.

Systems thinking ties it together. Granular forecasting aligns variable generation with flexible loads. Distribution grids benefit from high-fidelity monitoring, edge analytics, and protective relays that adapt in milliseconds. Policy design, market rules, and interconnection queues are as decisive as any lab breakthrough. The credible path forward blends better devices with smarter coordination, yielding reliability, affordability, and emissions cuts at the same time.

Navigating the Next Decade: A Practical Roadmap for Curious Readers and Practitioners

Big advances invite bold claims, so a grounded approach is essential. Start with a simple filter: does the technology relax a core constraint (cost, latency, energy, or error rate), or does it merely shift that constraint elsewhere? If it pushes a hard limit back while keeping trade-offs explicit, it is worth deeper attention. If not, treat it as an interesting prototype and wait for clearer data.

Use a signal checklist:

– Evidence: Prefer peer-reviewed studies or independently audited benchmarks over isolated demos. Look for confidence intervals, ablation studies, and real-world datasets.

– Scale path: Map the journey from lab to production—supply chains, maintenance, recyclability, and operator training. Elegant science that ignores operations rarely sustains impact.

– Governance: Assess privacy, safety, and environmental safeguards from the outset. Retrofitting trust is expensive and sometimes impossible.

– Economics: Compare total cost of ownership, not sticker price. Energy, upkeep, downtime, and compliance often dominate lifetime value.

– Interoperability: Favor open standards and modular designs that reduce lock-in and allow incremental upgrades.
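The economics item in the checklist can be made concrete with a simple total-cost-of-ownership comparison. Every figure below is invented for illustration, and the model is deliberately plain: it sums recurring costs over a fixed horizon with no discounting, financing, or resale value.

```python
def total_cost_of_ownership(purchase, annual_energy, annual_upkeep,
                            annual_downtime, annual_compliance, years):
    """Purchase price plus undiscounted recurring costs over the ownership period."""
    recurring = annual_energy + annual_upkeep + annual_downtime + annual_compliance
    return purchase + recurring * years


years = 5  # hypothetical ownership horizon

# Hypothetical option A: cheaper to buy, more expensive to run.
option_a = total_cost_of_ownership(
    purchase=10_000, annual_energy=1_200, annual_upkeep=900,
    annual_downtime=600, annual_compliance=300, years=years)

# Hypothetical option B: higher sticker price, lower running costs.
option_b = total_cost_of_ownership(
    purchase=14_000, annual_energy=700, annual_upkeep=400,
    annual_downtime=200, annual_compliance=200, years=years)

print("Option A total over", years, "years:", option_a)
print("Option B total over", years, "years:", option_b)
print("Lower lifetime cost:", "A" if option_a < option_b else "B")
```

With these made-up inputs, the option with the higher purchase price ends up cheaper over five years, which is the pattern the checklist warns about. Changing the horizon or the downtime estimate can flip the result, so the inputs deserve as much scrutiny as the arithmetic.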

For individuals building careers, stack skills in layers that mirror the tech itself. Pair statistical literacy with systems thinking, model-building with data stewardship, and domain knowledge with computational tools. Practice reading documentation and release notes critically; seemingly minor changes in defaults or calibration can flip results. When possible, run small pilots and write postmortems—what surprised you, what failed gracefully, and what failed dangerously.

Organizations can codify learning loops. Establish sandboxes for controlled trials, create decision gates tied to metrics rather than hype cycles, and invest in observability so real-world performance feeds back into design. Keep an eye on adjacent fields; breakthroughs often jump tracks, as with AI accelerating materials discovery or power electronics reshaping industrial processes. Most importantly, cultivate patience without complacency: steady compounding in the right direction beats sporadic moonshots that never land.

The future will not arrive as a single revelation but as a mosaic of steady improvements. By asking precise questions, insisting on durable evidence, and aligning incentives with outcomes, readers can help steer discovery into dependable progress that improves daily life.