Exploring Science: Latest Discoveries and Advancements in Science

Outline of the Article

This outline previews the journey ahead and clarifies how each section builds a complete picture of how technology is transforming scientific discovery. Readers will first see the map, then walk the terrain. The structure blends rigorous analysis with down‑to‑earth examples so you can connect headline breakthroughs to practical implications, whether you work in a lab, lead a product team, teach students, or simply enjoy learning how the world works.

Section-by-section guide:

– Section 1: Outline of the Article — What to expect, why this order matters, and how the pieces fit together across computing, biology, materials, climate, and space.

– Section 2: Technology’s New Chapter in Scientific Discovery — A big-picture introduction to converging trends: cheaper sensors, faster compute, abundant data, and collaborative platforms that compress discovery cycles.

– Section 3: Computation and AI as New Engines of Discovery — How simulation, automation, and pattern-finding tools accelerate hypotheses, experiments, and interpretation, with concrete use cases and cautions.

– Section 4: Bio, Materials, and Quantum on Fast-Forward — A tour of labs where gene editing, self-assembling compounds, and quantum effects are turning once‑theoretical ideas into measurable results.

– Section 5: Earth, Space, and the Road Ahead — Satellites, climate tech, and a reader‑focused conclusion translating advances into skills, decisions, and next steps.

What you will gain:

– A mental model for why progress appears to arrive in bursts, and how enabling layers (data, compute, instrumentation) stack.

– Benchmarks and examples that make abstract claims tangible, including orders‑of‑magnitude improvements and real operational constraints.

– Practical takeaways for careers, learning paths, and responsible adoption so technology becomes a lever for better outcomes, not a source of avoidable risk.

Why this outline first? A clear route prevents getting lost in headlines. By setting expectations up front, you can compare developments on a like‑for‑like basis: What improved, by how much, at what cost, and for whom? Keep those four questions in mind; they anchor the analysis that follows and help separate signal from noise. Now that the compass is set, let’s step into the landscape.

Technology’s New Chapter in Scientific Discovery

Every era of science has its signature instruments. Today’s instruments are not only microscopes, telescopes, and spectrometers; they are also software models, robotic pipettes, and fleets of networked sensors. Three trends converge: data volume that has surged past one hundred zettabytes globally, compute that now reaches exascale magnitudes, and components whose costs have fallen so far that precision tools fit in a backpack. The result is a faster loop from question to measurement to model to answer.

Consider how measurement has changed. High‑throughput devices can scan millions of cells or materials variants in hours. Environmental monitors stitch together readings from mountains, oceans, and cities to track changes in real time. In the past, a single experiment might take a season; now, an automated workflow can run thousands of parameter sweeps overnight and surface the handful that truly matter. That speed does not guarantee truth, but it shortens the path to careful verification.
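The overnight parameter sweep described above can be sketched in a few lines. This is a minimal illustration, not any particular lab's pipeline: `toy_response` is a hypothetical stand-in for a real automated measurement, and the temperature and pH grids are made-up values chosen only to show the pattern of "run everything, keep the best few".

```python
import heapq
import itertools


def toy_response(temperature_c: float, ph: float) -> float:
    """Hypothetical stand-in for one automated measurement.

    The score is highest at 37 C and pH 7.0; a real workflow would
    call an instrument or a simulation here instead.
    """
    return 100.0 - (temperature_c - 37.0) ** 2 - 16.0 * (ph - 7.0) ** 2


def sweep(temperatures, ph_values, top_k=3):
    """Score every parameter combination and return the top_k as (score, T, pH)."""
    results = (
        (toy_response(t, p), t, p)
        for t, p in itertools.product(temperatures, ph_values)
    )
    return heapq.nlargest(top_k, results)


# Illustrative grids: 4 temperatures x 5 pH values = 20 runs.
temperatures = [25.0, 31.0, 37.0, 43.0]
ph_values = [6.0, 6.5, 7.0, 7.5, 8.0]

best = sweep(temperatures, ph_values)
for score, t, p in best:
    print(f"T={t} C, pH={p}, score={score:.1f}")
```

The shortlist is only a starting point: the few combinations it surfaces are the ones that then deserve careful, repeated verification.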

Computation transforms that raw sensing into insight. Digital twins replicate physical systems—reactors, batteries, even watersheds—so researchers can run “what‑ifs” that would be impractical or unsafe in the field. As hardware density increases and algorithms grow more efficient, simulations align more closely with reality, provided inputs are clean and boundary conditions are understood. When the model diverges from measurements, the discrepancy itself becomes a clue, pointing to missing physics, overlooked interactions, or faulty assumptions.
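One way to make "the discrepancy itself becomes a clue" concrete is to compare a model's predictions with measurements and flag the points that disagree by more than the sensor's expected noise. The sketch below assumes a fixed tolerance and uses invented temperature values; the function name `flag_discrepancies` and all numbers are illustrative, not drawn from a real system.

```python
def flag_discrepancies(predicted, measured, tolerance):
    """Return (index, residual) pairs where |measured - predicted| exceeds tolerance."""
    if len(predicted) != len(measured):
        raise ValueError("predicted and measured must have the same length")
    flagged = []
    for i, (p, m) in enumerate(zip(predicted, measured)):
        residual = m - p
        if abs(residual) > tolerance:
            flagged.append((i, residual))
    return flagged


# Illustrative numbers only: hourly battery temperatures in degrees Celsius.
twin_prediction = [20.0, 20.5, 21.0, 21.5, 22.0, 22.5]
sensor_reading = [20.1, 20.4, 21.0, 21.6, 25.0, 22.4]

# The tolerance is an assumption; in practice it would come from the sensor's spec.
suspects = flag_discrepancies(twin_prediction, sensor_reading, tolerance=0.5)
for index, residual in suspects:
    print(f"hour {index}: measured minus predicted = {residual:+.1f} C")
```

A flagged point does not say which side is wrong. It marks where to look for missing physics, a faulty sensor, or a bad assumption.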

Collaboration finishes the picture. Shared datasets, reproducible notebooks, and pre‑registered protocols make it easier to build on others’ work without starting from scratch. The cultural shift is as important as the technical one: teams now span disciplines that once spoke different languages. A biologist, a materials scientist, and a data engineer can co‑design an experiment where automation handles the drudgery and each expert focuses on interpretation. The promise is not hype; it is a widening toolkit, wielded thoughtfully, that helps us ask better questions and answer them with clarity.

Computation and AI as New Engines of Discovery

Computation has crossed thresholds that change what is measurable and knowable. Exascale‑class machines perform more than 10^18 floating‑point operations per second, enabling climate ensembles with finer grids, plasma simulations with richer turbulence models, and materials searches spanning billions of candidates. Meanwhile, adaptive algorithms, ranging from reinforcement learning to probabilistic programs, optimize experiments in flight, moving quickly toward conditions most likely to reveal new behavior. These are not abstract promises; they are daily practices in labs integrating software with hardware.

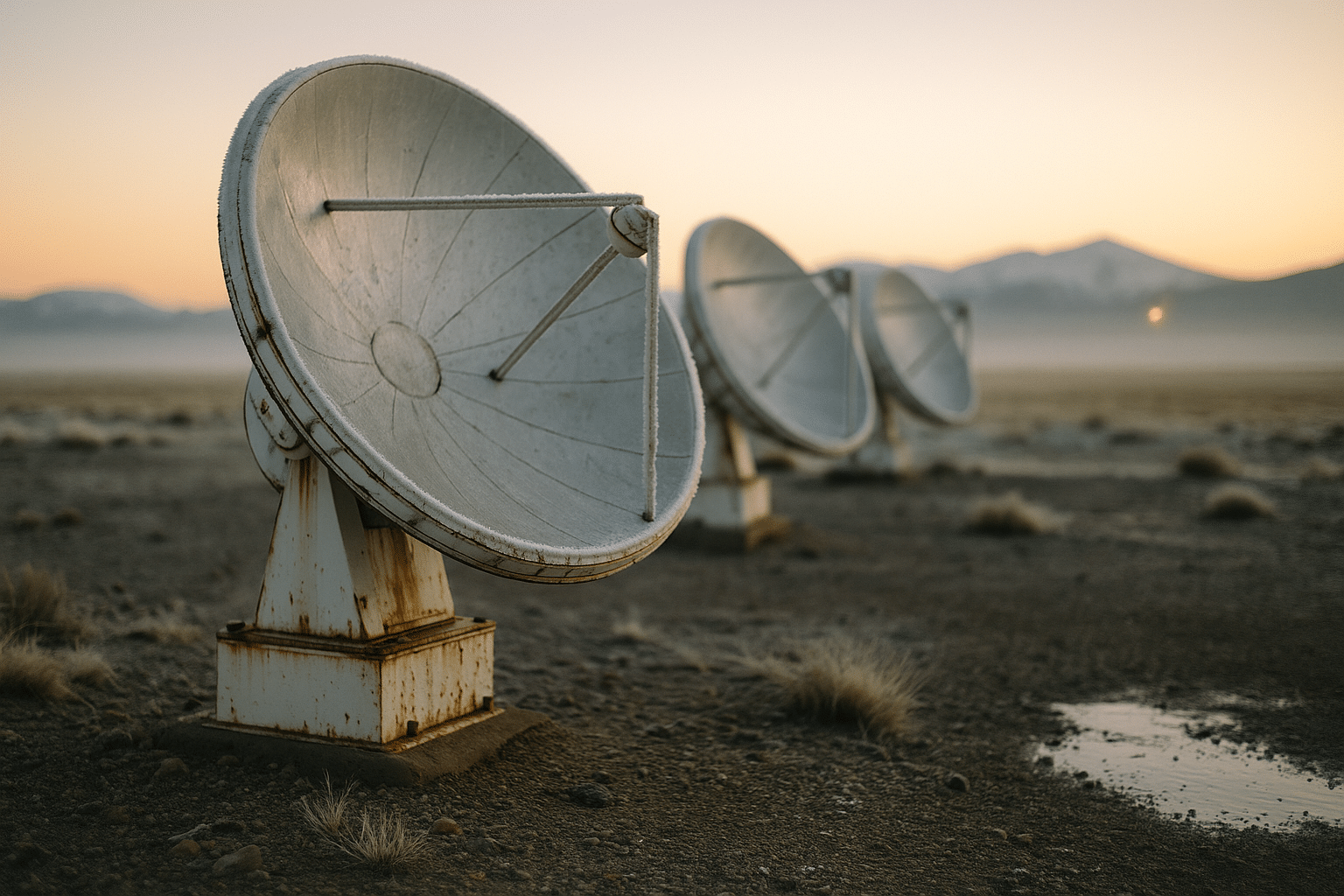

Where pattern‑rich data exists, learning systems accelerate discovery. In microscopy, models denoise images so faint structures become legible at lower exposure, reducing damage to delicate samples. In chemistry, predictive tools estimate reaction yields or propose synthesis routes that cut trial‑and‑error cycles. In earth observation, models classify land cover and quantify emissions, turning streams of satellite pixels into policy‑relevant numbers. Used carefully, such tools convert volumes of data into decisions with quantified uncertainty.

Representative applications include:

– Closed‑loop labs where code schedules instruments, updates hypotheses, and retries edge cases while researchers set guardrails and interpret results.

– Digital twins for infrastructure that assimilate sensor data, forecast failure probabilities, and evaluate retrofit scenarios before a wrench is lifted.

– Language‑based assistants that draft methods sections, parse literature, and suggest controls—useful aides that still require domain oversight.
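The closed‑loop lab in the first item can be illustrated with a toy loop in which code proposes a setting, runs an experiment, and decides what to try next, while a human‑chosen range and run budget act as guardrails. This is a deliberately simple hill‑climbing sketch under stated assumptions: `run_experiment` is a hypothetical placeholder for a real instrument call, and production systems typically use more sophisticated strategies.

```python
def run_experiment(setting: float) -> float:
    """Hypothetical instrument call. This toy response peaks at setting = 6.0."""
    return 100.0 - (setting - 6.0) ** 2


def closed_loop(start, step, lower, upper, max_runs=50, min_step=0.01):
    """Climb toward a better setting, halving the step when no neighbor improves."""
    best_x, best_y = start, run_experiment(start)
    runs = 1
    while runs < max_runs and step >= min_step:
        improved = False
        for candidate in (best_x - step, best_x + step):
            if runs >= max_runs:
                break  # experiment budget exhausted
            if not (lower <= candidate <= upper):
                continue  # guardrail: stay inside the range the researcher approved
            y = run_experiment(candidate)
            runs += 1
            if y > best_y:
                best_x, best_y, improved = candidate, y, True
                break
        if not improved:
            step /= 2  # refine the search around the current best
    return best_x, best_y, runs


best_x, best_y, runs = closed_loop(start=2.0, step=1.0, lower=0.0, upper=10.0)
print(f"best setting {best_x:.3f}, response {best_y:.3f}, after {runs} runs")
```

The researcher's role shows up in the arguments: the allowed range, the run budget, and the stopping resolution are all set by a person, and the result still needs interpretation.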

Limits matter. Training large models can consume hundreds of megawatt‑hours or more and emit tens to hundreds of tons of carbon dioxide, depending on energy sources and efficiency. Datasets may encode historical bias; unchecked, models can magnify it. Reproducibility demands versioned data, documented parameters, and independent validation against held‑out measurements. A practical checklist helps: define success metrics upfront, track energy use, audit for bias, and keep a human in the loop. With those safeguards, computation and AI behave like reliable lab partners: powerful, fast, and, when supervised, remarkably consistent.
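The dependence of emissions on the energy source is simple arithmetic: energy used multiplied by the carbon intensity of the electricity. The sketch below shows that calculation. The 500 MWh figure and both grid intensities are assumed, illustrative inputs, not measurements of any real training run or grid.

```python
def training_emissions_tonnes(energy_mwh: float, intensity_kg_per_kwh: float) -> float:
    """Metric tons of CO2 = energy in kWh * grid intensity in kg CO2 per kWh / 1000."""
    if energy_mwh < 0 or intensity_kg_per_kwh < 0:
        raise ValueError("energy and carbon intensity must be non-negative")
    energy_kwh = energy_mwh * 1000.0
    return energy_kwh * intensity_kg_per_kwh / 1000.0


# Assumed, illustrative inputs: one 500 MWh training run on two hypothetical grids.
scenarios = {"low-carbon grid": 0.05, "fossil-heavy grid": 0.40}
estimates = {
    name: training_emissions_tonnes(500.0, intensity)
    for name, intensity in scenarios.items()
}
for name, tonnes in estimates.items():
    print(f"{name}: about {tonnes:.0f} t CO2")
```

Under these assumptions, the same workload differs in emissions by a factor of eight depending on where it runs, which is why tracking energy use belongs on the checklist.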

Bio, Materials, and Quantum on Fast-Forward

Biology has become an information science as much as a wet science. The cost of sequencing a human genome has fallen by roughly five orders of magnitude since the early 2000s, and portable sequencers now support fieldwork in places once unreachable. Gene editing tools let researchers modify organisms with unprecedented precision, while single‑cell methods map how tissues grow, heal, and age. Automation shifts effort from pipetting to planning: a robotic line can test thousands of variants, and analytics flag the few worth deeper study.

Materials science rides a similar curve. Computational screening narrows vast compositional spaces before a gram of powder is mixed. Self‑assembling structures and high‑entropy alloys are engineered for strength‑to‑weight gains, corrosion resistance, or thermal stability. In energy, tandem solar cells that pair complementary absorbers have reported efficiencies near one‑third under lab conditions, and solid‑state battery prototypes target higher energy density with safety advantages. The path from prototype to product remains rugged—scaling, stability, and supply chains are the usual cliffs—but the vista is expanding.

Quantum technologies add a third accelerant. Though today’s general‑purpose devices are noisy and limited to hundreds or low thousands of qubits, steady improvements in coherence and error mitigation keep widening the set of tractable problems. Even before broadly useful quantum computation arrives, quantum sensing already contributes: gravimeters map subsurface features, magnetometers track neural activity without cryogenics, and clocks synchronized to exquisite precision anchor navigation beyond satellite coverage. Each of these tools complements classical approaches rather than replacing them outright.

Pragmatic takeaways:

– Expect more “biology‑as‑code” workflows, where design‑build‑test cycles iterate weekly, not yearly.

– Watch for materials tuned for recyclability and critical‑mineral thrift, reflecting circular‑economy constraints.

– Track quantum’s near‑term wins in sensing and communication while tempering expectations for general‑purpose computation in the short run.

Progress is not linear, and setbacks are normal: a promising perovskite can degrade in humidity; a gene therapy can face delivery hurdles; a quantum device can drift out of calibration. Yet the arc bends forward as tools mature, protocols standardize, and communities learn from each iteration.

Earth, Space, and the Road Ahead — What It Means for You

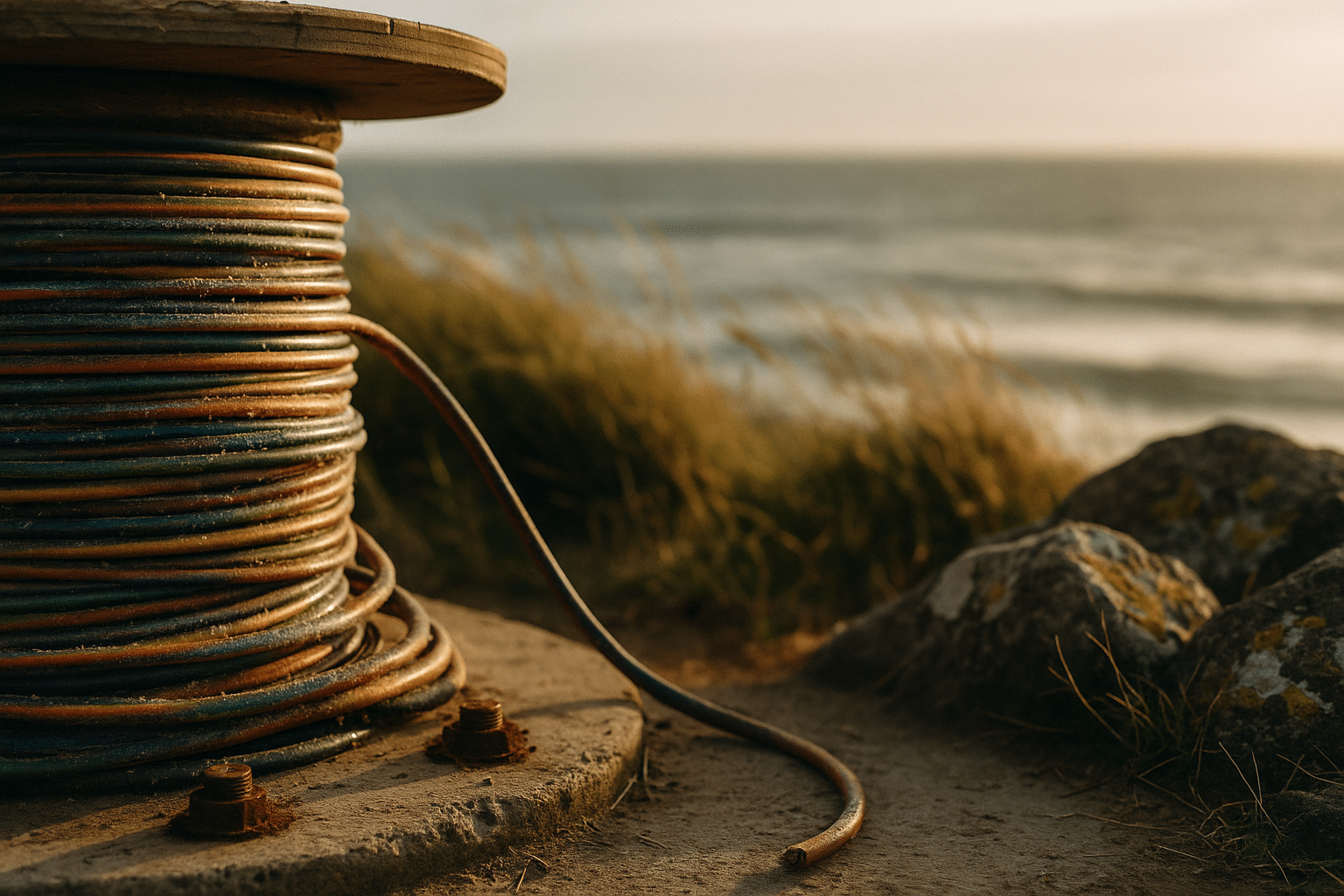

The planet has become instrumented, and the sky above it is stitched with sensors. The number of operational satellites now exceeds nine thousand, many forming constellations that revisit the same spot on Earth multiple times per day. Paired with ground networks, they track forest loss, crop health, wildfire smoke, urban heat, shipping lanes, and methane plumes. In parallel, renewable electricity has climbed to roughly one‑third of global generation when counting hydropower, with grid‑scale storage deployments growing quickly as costs fall and chemistries diversify.

These capabilities unlock new services. Farmers receive plot‑level guidance that blends weather, soil data, and crop models. Coastal cities simulate surge scenarios and redesign seawalls and wetlands accordingly. Logistics teams predict bottlenecks days ahead using traffic sensors and orbital imagery. Even fusion research, long viewed as a distant hope, has passed a symbolic threshold: inertial confinement experiments have released more fusion energy in a short pulse than the laser energy delivered to the target. It is a scientific milestone that still faces substantial engineering hurdles before practical power is feasible.

For readers deciding what to do next, consider three lenses:

– Skills: Pair domain expertise with data literacy. Learn enough statistics and modeling to ask sharper questions and spot pitfalls.

– Responsibility: Measure energy use, model uncertainty transparently, and plan for monitoring after deployment. Sustainable progress is designed in from day one.

– Strategy: Pilot quickly, evaluate honestly, and scale only when benefits and risks are quantified. Treat tools as tools—powerful, but never substitutes for judgment.

Conclusion for the curious and the busy alike: Technology is not a magic wand, but it is a remarkable multiplier. It lowers the cost of trying ideas, widens the space of possible solutions, and reveals patterns we could not see before. The pace can feel dizzying, yet the compass remains steady: ask clear questions, gather trustworthy data, reason with humility, and build with care. Do that, and you will not only keep up with the latest discoveries and advancements—you will help shape them.