Exploring Society: The Social Impact of Innovation and Technological Advancement

Introduction and Outline: Why Technology’s Social Impact Matters

Technology does not arrive as a single thunderclap; it seeps into habits, reshapes expectations, and redraws opportunity. In little more than a generation, high-speed networks, software-defined services, and machine learning have become routine, from a map that predicts traffic to a classroom that adapts to each learner. International estimates suggest that roughly two-thirds of the world's people now use the internet, and a growing share rely on cloud-based tools every day. Yet the story is not only about access and speed; it is about how benefits are distributed, who bears the risks, and how communities keep agency as systems grow more complex.

To help readers navigate this terrain, this article offers a pragmatic map. We will keep the lens on social outcomes—jobs, inclusion, privacy, and sustainability—so every claim ties back to lived realities rather than abstract promises.

Outline of the journey ahead:

– Connectivity and access: how networks, devices, and affordability set the stage for participation.

– Automation and work: what machines do well, where humans excel, and how tasks—not just jobs—are changing.

– Data and trust: the rules, safeguards, and engineering choices that protect people and institutions.

– Sustainability: how digital systems shape energy use, resource recovery, and climate resilience.

– Conclusion: practical steps for readers, teams, and local leaders to steer technology toward public value.

Three cross-cutting themes will appear in every section. First, equity: progress that bypasses rural areas, older adults, or low-income households is not real progress. Second, resilience: redundancy, open standards, and local skills decide whether systems bend or break under stress. Third, accountability: clear goals, measurement, and consequences keep innovation grounded in outcomes, not slogans. With that compass, let’s move from headlines to the mechanics shaping everyday life.

Connectivity, Access, and the Uneven Map of Opportunity

Connectivity is not a luxury; it is now a prerequisite for education, healthcare access, and economic participation. Still, gaps remain stubborn. Urban cores tend to enjoy fast and affordable service, while rural and low-density regions rely on older copper lines, sparse wireless coverage, or costly satellite links. Affordability is as decisive as coverage: even where signals reach, subscription prices and device costs can put participation out of reach. Studies across multiple regions show that households with limited disposable income frequently choose data-light plans, which constrains schoolwork, job searches, and telehealth use.

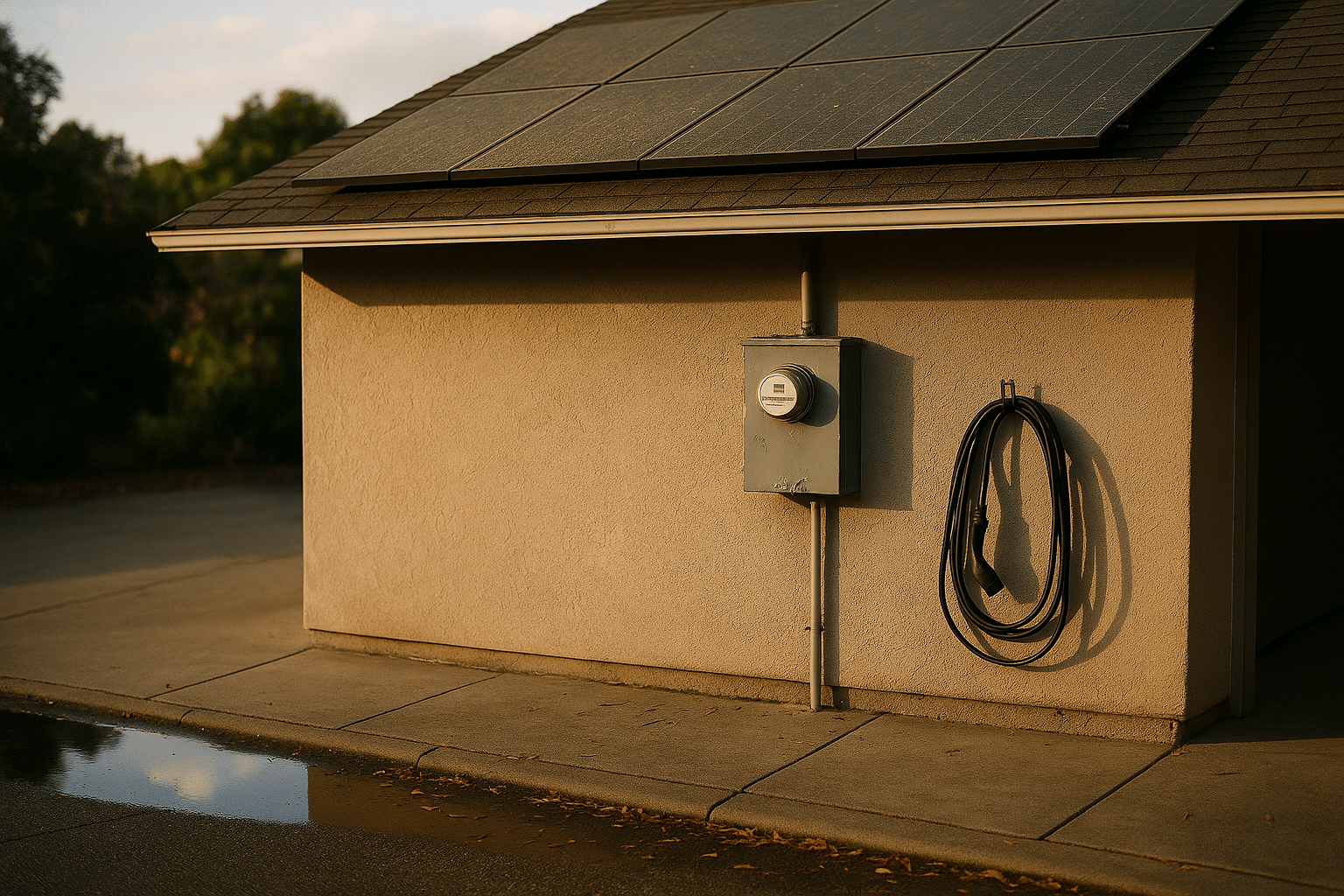

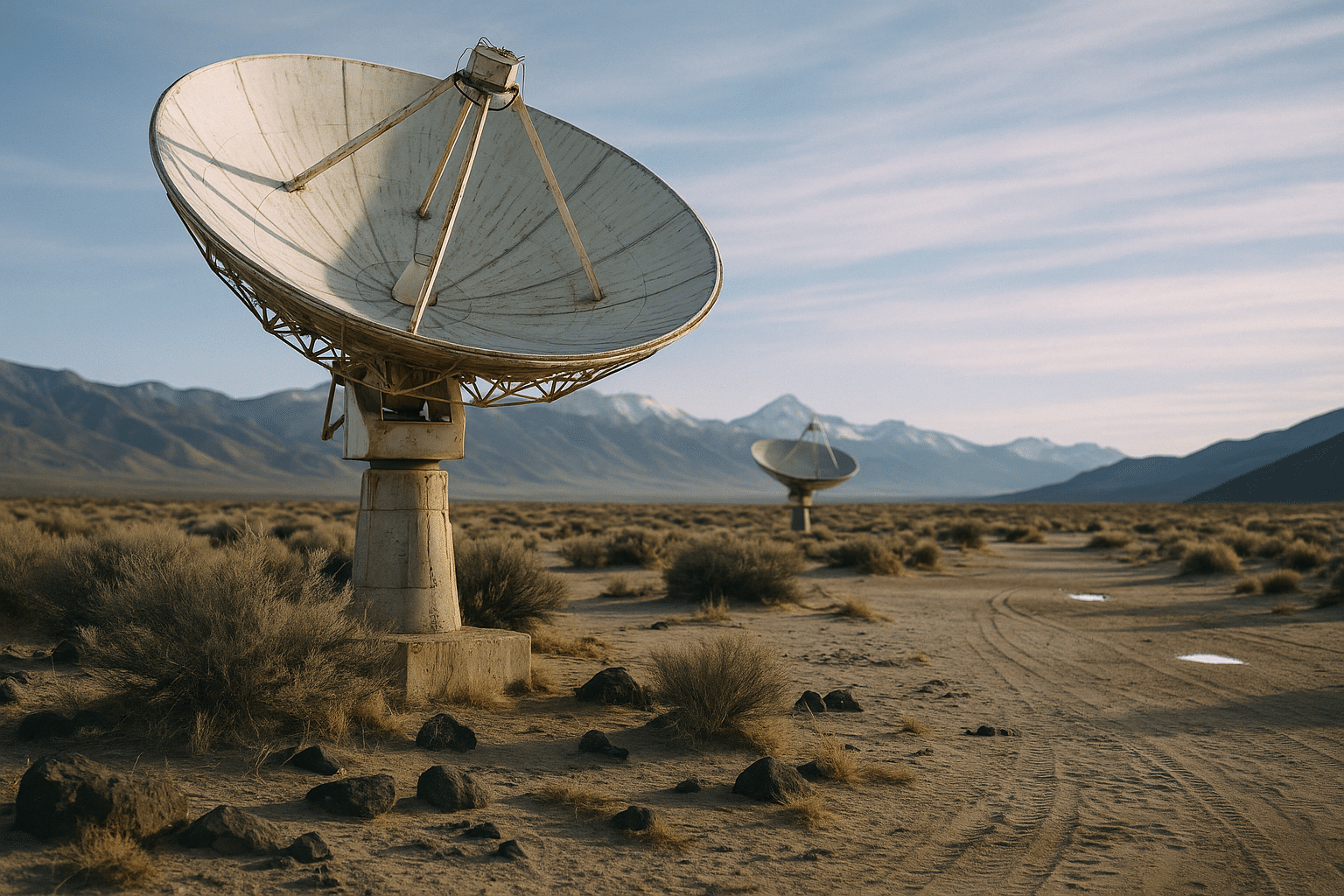

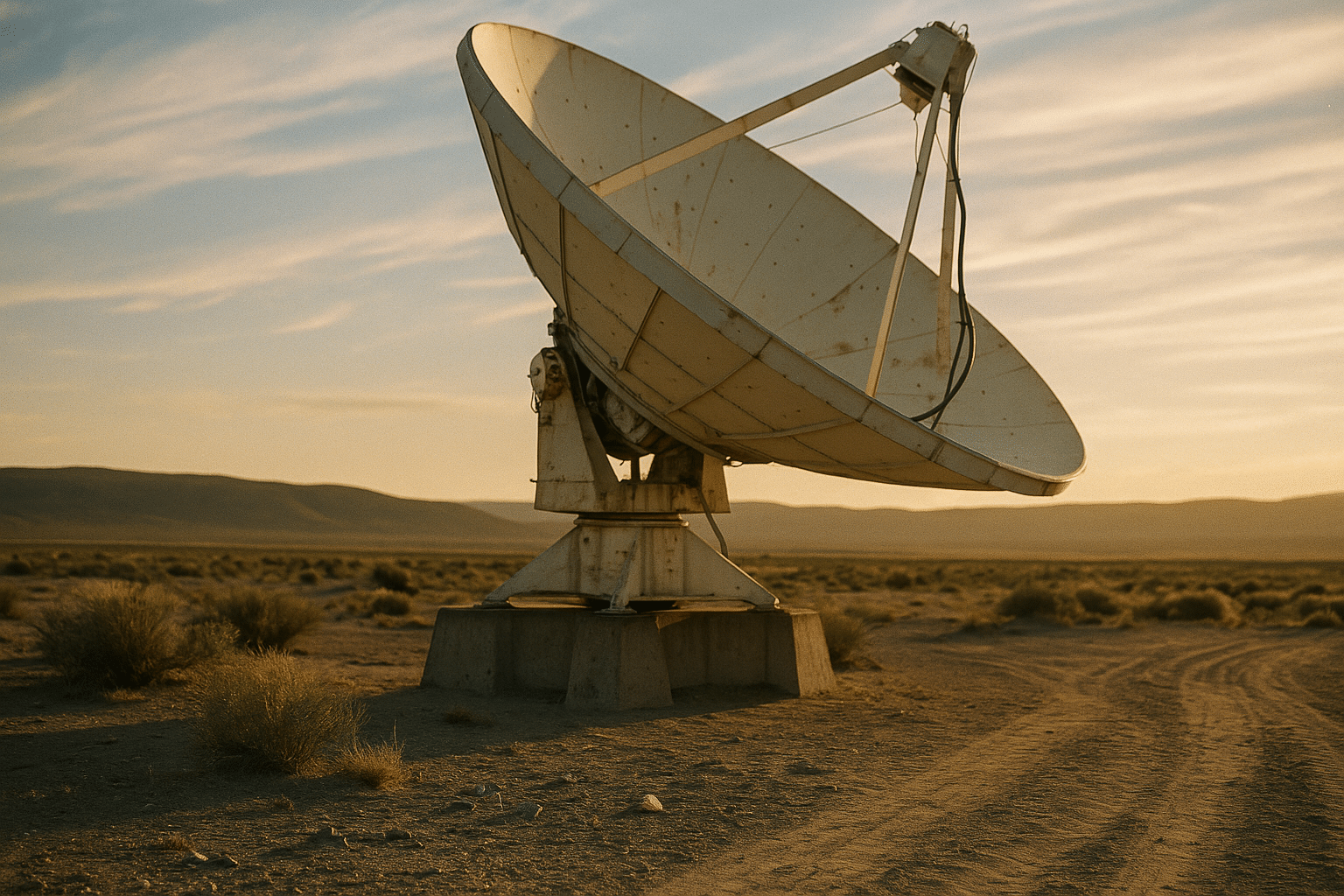

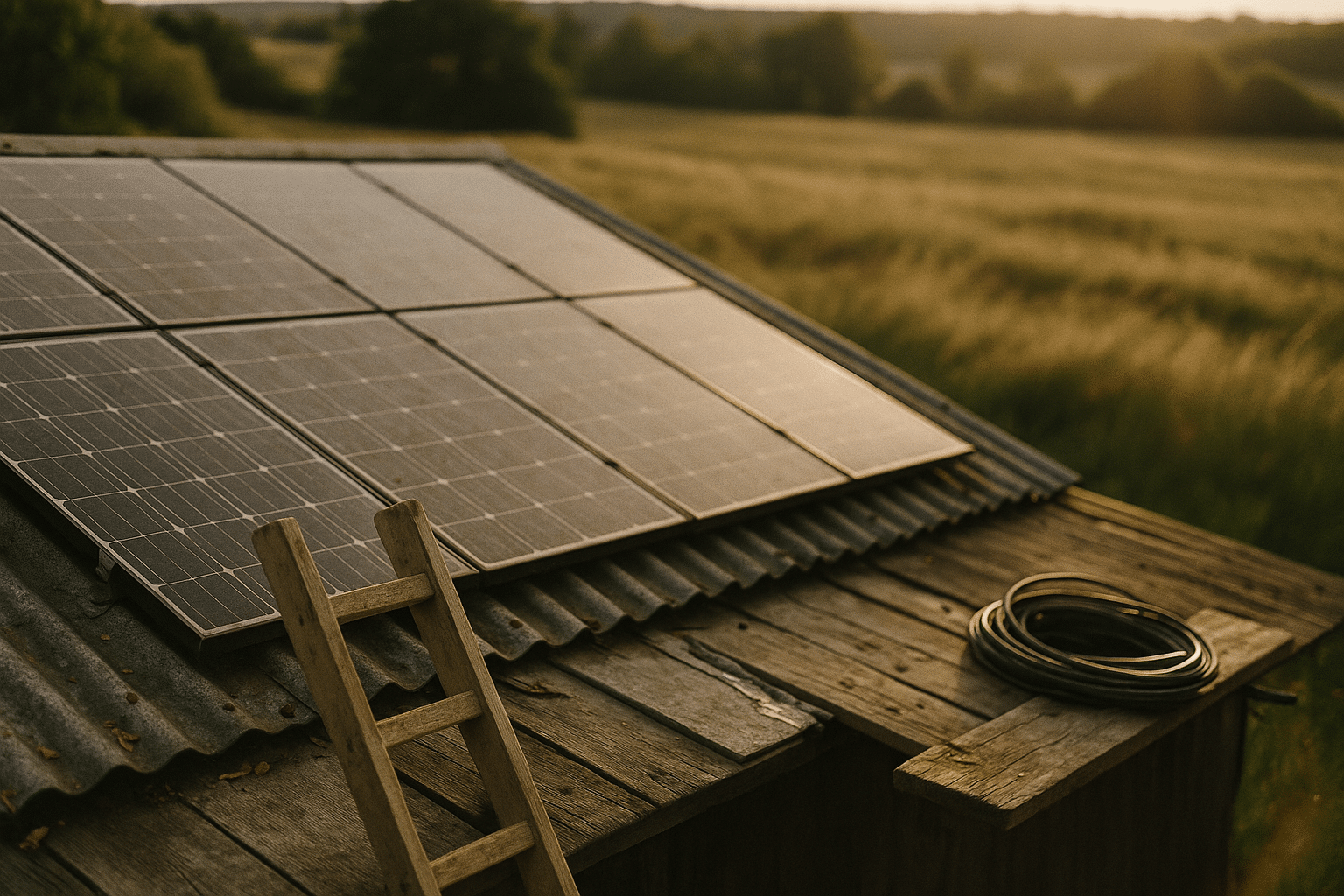

Infrastructure choices matter. Fiber offers exceptional capacity and reliability but demands trenching, rights-of-way coordination, and upfront capital. Fixed wireless can be rolled out rapidly and upgraded via software, yet it depends on clear line-of-sight and well-managed spectrum. Satellite constellations extend reach to remote areas, though equipment costs, weather sensitivity, and congestion policies shape real-world performance. A robust strategy often blends layers: fiber backbones feeding wireless last-mile links, with community-managed access points anchoring neighborhoods and schools.

Inclusion hinges on more than cables. Devices, literacy, and relevant services complete the stack. Shared access hubs in libraries, clinics, and cooperatives remain powerful equalizers, particularly when paired with workshops that demystify privacy settings, job portals, and digital paperwork. Small pilot programs suggest that pairing connectivity with mentorship, such as coaching on online safety and basic troubleshooting, yields higher retention and better outcomes than connectivity alone.

Policy and practice levers that repeatedly deliver value include:

– Open-access infrastructure that lets multiple providers compete on the same fiber, nudging down prices.

– Targeted subsidies tied to performance metrics, like latency and uptime, rather than raw coverage claims.

– Device refurbishment programs with quality checks, warranties, and local repair training.

– Community networks governed by transparent rules, where residents help set prices, manage hotspots, and escalate outages.
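Tying subsidies to measured performance rather than coverage claims implies a concrete pass/fail check for each reporting period. The Python sketch below shows one way such a check could look. The 19:00 to 23:00 peak window, the threshold values, and the names `Milestone`, `peak_median`, and `check_milestone` are hypothetical choices for illustration, not drawn from any specific program.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Milestone:
    # Hypothetical thresholds; each program would set its own.
    min_peak_median_mbps: float
    max_peak_median_latency_ms: float
    min_uptime_pct: float


def peak_median(samples, start_hour=19, end_hour=23):
    """Median of (hour_of_day, value) samples inside an assumed 19:00-23:00 peak window."""
    peak = [value for hour, value in samples if start_hour <= hour < end_hour]
    if not peak:
        raise ValueError("no samples in the peak window")
    return median(peak)


def check_milestone(speed_samples, latency_samples, uptime_pct, m):
    """Return (passed, reasons) for one reporting period."""
    reasons = []
    speed = peak_median(speed_samples)
    latency = peak_median(latency_samples)
    if speed < m.min_peak_median_mbps:
        reasons.append(f"peak median speed {speed:.1f} Mbps < {m.min_peak_median_mbps}")
    if latency > m.max_peak_median_latency_ms:
        reasons.append(f"peak median latency {latency:.0f} ms > {m.max_peak_median_latency_ms}")
    if uptime_pct < m.min_uptime_pct:
        reasons.append(f"uptime {uptime_pct:.2f}% < {m.min_uptime_pct}%")
    return (not reasons, reasons)


target = Milestone(min_peak_median_mbps=25.0, max_peak_median_latency_ms=100.0, min_uptime_pct=99.5)
speeds = [(20, 30), (21, 22), (19, 28), (22, 41), (10, 90)]  # the 10:00 sample is off-peak
latencies = [(20, 45), (21, 60), (19, 52)]
ok, why = check_milestone(speeds, latencies, 99.7, target)
print(ok, why)
```

Returning the reasons alongside the verdict is a deliberate choice: a published list of shortfalls is easier for residents and regulators to act on than a bare yes or no.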

Measurement keeps everyone honest. Publishing maps of not just coverage but median speeds at peak times helps residents compare options and regulators redirect funds. Likewise, affordability indices that combine plan price, data allowance, and median income make progress—or backsliding—visible. When networks, pricing, devices, and skills move in sync, participation follows; when any link breaks, opportunity narrows. Connectivity policy, in short, is social policy by another name.
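An affordability index of the kind described above can be sketched in a few lines. This is a minimal, hypothetical formulation: it assumes the plan price and the median income are in the same currency and both monthly, and it reports the price per gigabyte, the plan's share of income, and the cost of 1 GB as a share of income. The default 2% threshold is an illustrative parameter loosely modeled on the Alliance for Affordable Internet's "1 for 2" benchmark (1 GB for no more than 2% of average monthly income); because that benchmark uses an average rather than a median, treat the comparison as approximate.

```python
def affordability(plan_price, data_gb, median_monthly_income, threshold_pct=2.0):
    """Hypothetical affordability index for one monthly plan.

    plan_price and median_monthly_income must share a currency and be monthly.
    """
    if data_gb <= 0 or median_monthly_income <= 0:
        raise ValueError("data_gb and median_monthly_income must be positive")
    price_per_gb = plan_price / data_gb
    plan_share_pct = 100 * plan_price / median_monthly_income
    one_gb_share_pct = 100 * price_per_gb / median_monthly_income
    return {
        "price_per_gb": price_per_gb,
        "plan_share_of_income_pct": plan_share_pct,
        "one_gb_share_of_income_pct": one_gb_share_pct,
        "affordable": one_gb_share_pct <= threshold_pct,
    }


print(affordability(20, 10, 800))
print(affordability(15, 1, 500))
```

For example, `affordability(20, 10, 800)` gives 2.0 per GB, a plan costing 2.5% of income, and 1 GB costing 0.25% of income. Tracking the same plans against the same income measure over time is what makes backsliding visible.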

Automation, AI, and the Changing Nature of Work

Automation is not a single switch that turns jobs off; it is a reallocation of tasks. Research by labor economists suggests that a minority of roles face high automation risk, while a much larger share will be reshaped as software takes on predictable, rules-based activities. Early field trials of modern language and vision systems in customer support, coding, and document drafting report notable gains in speed for routine segments of work, with mixed results on complex cases that require judgment, negotiation, or cross-domain reasoning.

It helps to separate what machines do reliably from what humans do distinctively. Systems trained on large datasets can generate plausible text, classify images, summarize long documents, and fill in structured forms quickly. They can monitor dashboards, flag anomalies, and draft standard responses. Yet they still falter when requirements are ambiguous, stakes are high, or context is thin. Bias in training data can skew outputs; models can produce confident but incorrect statements; and edge cases remain, by definition, outside learned patterns.

Think in terms of task portfolios rather than job titles. In many offices, an analyst’s week might include cleaning spreadsheets, writing memos, meeting clients, and designing experiments. Automation can streamline the first two, amplify the third through better preparation, and support the fourth with faster iteration—but leadership, ethics, and interpersonal skill still anchor outcomes.

Useful rules of thumb:

– Suitable for automation: transcription, classification, standard template drafting, first-pass data exploration.

– Human-led with AI support: diagnosis with context, creative strategy, conflict resolution, policy interpretation.

– Human-only for now: values trade-offs, accountability decisions, and tasks where harm from error is severe.
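The three rules of thumb can be read as a decision order: check stakes and values first, then whether the work is routine. The sketch below encodes that order in Python. The task attributes and the `triage` function are hypothetical simplifications for illustration; a real assessment would weigh these factors with the people who do the work rather than reduce them to four booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    AUTOMATE = "suitable for automation"
    HUMAN_LED = "human-led with AI support"
    HUMAN_ONLY = "human-only for now"


@dataclass
class Task:
    name: str
    rules_based: bool           # predictable inputs and outputs
    needs_context: bool         # judgment, negotiation, or cross-domain reasoning
    severe_harm_if_wrong: bool
    values_tradeoff: bool       # values trade-offs or accountability decisions


def triage(task: Task) -> Tier:
    # Stakes and values dominate, so they are checked first.
    if task.severe_harm_if_wrong or task.values_tradeoff:
        return Tier.HUMAN_ONLY
    # Anything ambiguous or context-heavy stays with a person, with AI assisting.
    if task.needs_context or not task.rules_based:
        return Tier.HUMAN_LED
    return Tier.AUTOMATE


week = [
    Task("transcribe client call", True, False, False, False),
    Task("interpret a new policy for a client", False, True, False, False),
    Task("decide which program to cut", False, True, True, True),
]
for t in week:
    print(f"{t.name}: {triage(t).value}")
```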

What about workers and wages? Historical patterns show that new tools can both displace and create roles, with net effects depending on education, mobility, and complementary investment. Short, stackable training (micro-credentials, peer mentoring, and project-based learning) helps broaden access to the new mix of tasks. For organizations, thoughtful deployment matters as much as model choice: define the goal, pilot on narrow use cases, measure quality and speed, implement escalation paths for tricky cases, and provide clear documentation. This is less a technology sprint than an operations marathon, paced by trust and results.
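The deployment steps described here (pilot narrowly, route tricky cases to people, measure quality and speed) can be sketched as a small routing-and-logging loop. Everything in it is hypothetical: the `confidence` score is assumed to come from whatever system produces the draft, the 0.8 threshold is an arbitrary starting point to tune during the pilot, and model confidence scores are not always well calibrated, so the threshold should be checked against reviewed outcomes.

```python
from dataclasses import dataclass, field


@dataclass
class PilotLog:
    auto_handled: int = 0
    escalated: int = 0
    reviewed: int = 0
    reviewed_correct: int = 0
    review_seconds: list = field(default_factory=list)


def route(item_id, draft, confidence, log, threshold=0.8):
    """Auto-send confident drafts; escalate the rest to a person (hypothetical policy)."""
    if confidence < threshold:
        log.escalated += 1
        return ("escalate", item_id)
    log.auto_handled += 1
    return ("auto", draft)


def record_review(log, was_correct, seconds_taken):
    """Log a human spot-check: was the output right, and how long did handling take?"""
    log.reviewed += 1
    log.reviewed_correct += int(was_correct)
    log.review_seconds.append(seconds_taken)


def summary(log):
    total = log.auto_handled + log.escalated
    return {
        "escalation_rate": log.escalated / total if total else 0.0,
        "reviewed_accuracy": log.reviewed_correct / log.reviewed if log.reviewed else None,
        "mean_seconds": (sum(log.review_seconds) / len(log.review_seconds)
                         if log.review_seconds else None),
    }


log = PilotLog()
route("t1", "Your refund was issued on...", 0.93, log)   # ("auto", draft)
route("t2", "Regarding your dispute...", 0.55, log)      # ("escalate", "t2")
record_review(log, was_correct=True, seconds_taken=40)
record_review(log, was_correct=False, seconds_taken=80)
print(summary(log))
```

Starting with a conservative threshold and lowering it only while reviewed accuracy holds up keeps the escalation path doing real work during the pilot.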

Data, Privacy, and Trust in a Sensor-Rich World

Our devices, buildings, vehicles, and streets now emit data continuously. Location pings, usage logs, and sensor readings can inform better services, but they also invite overcollection and misuse. People care not only about whether data is “secure,” but about who sees it, for what purpose, and for how long. Trust, therefore, is both a legal and engineering problem—made real by defaults, dashboards, audits, and deletion buttons that actually work.

Several design principles have emerged as durable guardrails. Data minimization keeps only what is necessary to deliver a service, reducing both risk and storage cost. Purpose limitation prevents silent repurposing of data for unrelated aims. Short retention windows lower the blast radius of a breach. Encryption, both at rest and in transit, limits exposure when systems fail, and strong key management practices keep that promise credible. Privacy-preserving analytics techniques, such as aggregation, differentially private noise, or on-device computation, allow insights without exposing raw records.
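As one concrete instance of privacy-preserving analytics, the Laplace mechanism releases a count with epsilon-differential privacy. The sketch below assumes each record belongs to a different person, so adding or removing one person changes the count by at most 1. It samples Laplace noise as the difference of two exponential draws using Python's standard `random` module, which is fine for illustration but not hardened: `random` is not a cryptographically secure generator, and naive floating-point sampling has known weaknesses, so production releases should rely on a vetted differential-privacy library.

```python
import random


def laplace_noise(scale, rng):
    """Draw from Laplace(0, scale): the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def noisy_count(records, predicate, epsilon, rng=None):
    """Release a count under epsilon-differential privacy via the Laplace mechanism.

    Assumes one record per person, so the count's sensitivity is 1 and the
    noise scale is 1 / epsilon. Illustrative only; not hardened for production.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1 / epsilon, rng)
```

Smaller epsilon values mean more noise and stronger privacy. A dashboard of neighborhood trip counts might, for instance, publish `noisy_count(trips, lambda r: r["zone"] == "A", epsilon=0.5)` instead of the exact figure, where `trips` is a hypothetical list of per-person records.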

Governance, meanwhile, aligns incentives. Clear data maps show what is collected, where it lives, and who has access. Access controls enforce the principle of least privilege, and logs enable after-the-fact accountability. Independent assessments stress-test policies as well as code. For international operations, cross-border rules and contractual clauses shape where data can travel and under what conditions. Organizations that publish plain-language summaries of their practices, backed by metrics—response times to user requests, number of access exceptions, audit findings—earn more trust than those that rely on slogans.
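Least privilege and after-the-fact accountability fit together naturally: every access decision is made against an explicit allowlist and written to a log. The Python sketch below assumes a hypothetical role-to-permission map and an in-memory `AUDIT_LOG`; a real system would use its platform's access-control features and an append-only, separately secured log store.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map: each role gets only what its job needs.
ROLE_PERMISSIONS = {
    "support_agent": {("contact_info", "read")},
    "billing": {("contact_info", "read"), ("payment_records", "read")},
    "privacy_officer": {
        ("contact_info", "read"), ("contact_info", "delete"),
        ("payment_records", "read"), ("payment_records", "delete"),
    },
}

AUDIT_LOG = []  # stand-in for an append-only store kept apart from the data itself


def check_access(user, role, dataset, action):
    """Deny by default; record every decision, allowed or not."""
    allowed = (dataset, action) in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "dataset": dataset,
        "action": action,
        "allowed": allowed,
    })
    return allowed


check_access("ana", "support_agent", "contact_info", "read")      # True
check_access("ana", "support_agent", "payment_records", "read")   # False
denied = [entry for entry in AUDIT_LOG if not entry["allowed"]]
```

Summaries drawn from such a log, such as how many requests were denied and for which datasets, can feed the kind of published metrics described above.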

Practical steps any team can adopt quickly:

– Default to opt-in for sensitive features and make choices reversible with a single click.

– Replace perpetual identifiers with rotating tokens to reduce linkage across contexts.

– Set deletion timers by data type, with monthly reports on what was actually purged.

– Offer user-facing transparency pages that show categories collected, reasons, and retention periods.
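Two of these steps, rotating tokens and deletion timers, translate directly into code. The sketch below is a minimal illustration under stated assumptions: tokens are HMAC-SHA256 digests of a user ID under a random key that is replaced (and the old one discarded) whenever the rotation period changes, and the per-type retention windows are hypothetical values. The class and function names are invented for this example.

```python
import hashlib
import hmac
import secrets
from datetime import datetime, timedelta, timezone


class RotatingTokenizer:
    """Map a stable user ID to a pseudonymous token that changes each period.

    A fresh random key is generated whenever the period changes and the old key
    is discarded, so tokens from different periods cannot be linked afterwards.
    """

    def __init__(self):
        self._period = None
        self._key = None

    def token(self, user_id, period):
        if period != self._period:
            self._period = period
            self._key = secrets.token_bytes(32)  # replaces (discards) the previous key
        digest = hmac.new(self._key, user_id.encode(), hashlib.sha256).hexdigest()
        return digest[:16]  # truncated to 64 bits to keep tokens short


# Hypothetical retention windows by data type.
RETENTION = {
    "location_ping": timedelta(days=30),
    "usage_log": timedelta(days=90),
    "support_ticket": timedelta(days=365),
}


def purge(records, now):
    """Drop records older than their type's window; return (kept, report).

    Records of a type with no configured window are kept but counted under
    "unclassified_kept" so that someone assigns them a policy.
    """
    kept, report = [], {}
    for r in records:
        limit = RETENTION.get(r["type"])
        if limit is None:
            report["unclassified_kept"] = report.get("unclassified_kept", 0) + 1
            kept.append(r)
        elif now - r["created"] > limit:
            report[r["type"]] = report.get(r["type"], 0) + 1
        else:
            kept.append(r)
    return kept, report


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"type": "location_ping", "created": now - timedelta(days=45)},
    {"type": "location_ping", "created": now - timedelta(days=5)},
    {"type": "usage_log", "created": now - timedelta(days=100)},
    {"type": "mystery_field", "created": now - timedelta(days=999)},
]
kept, report = purge(records, now)
print(report)  # {'location_ping': 1, 'usage_log': 1, 'unclassified_kept': 1}
```

Because the old key is gone after rotation, even the operator cannot link a token from one period to a token from the next. The per-type counts in the purge report are the raw material for a monthly report on what was actually deleted.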

Good privacy practice is not only defensive. It can sharpen product design by forcing clarity about what truly matters to users. It can accelerate partnerships by simplifying due diligence. And it reduces regulatory surprises by baking compliance into architecture. In a world where data is both tool and liability, sober governance is the price of admission to durable innovation.

Conclusion: A Human-Centered Tech Compact for Readers and Leaders

Technology’s arc is shaped less by breakthroughs than by choices. Networks can widen opportunity or entrench gaps depending on how they are financed, taught, and maintained. Automation can become a partner that removes drudgery or a blunt instrument that erodes dignity, contingent on how tasks are redesigned and how workers share in gains. Data can empower or expose, guided by defaults, transparency, and the will to delete. Climate-focused tools can reduce emissions while introducing new material footprints that require careful accounting and recovery.

For individuals and families:

– Favor durable, repairable devices and use power-saving modes to cut energy and cost.

– Learn a small set of digital hygiene habits: strong passphrases, updates, and privacy checks.

– Treat AI outputs as drafts, not decisions, and keep notes on when you overrode a suggestion.
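A passphrase made of randomly chosen words is one practical form of the "strong passphrases" habit. The Python sketch below uses the standard `secrets` module to pick words and computes the resulting entropy. The eight-word `demo_words` list is for demonstration only and is far too small; real use assumes a large published list, such as a Diceware-style list of 7,776 words, or simply a password manager's built-in generator.

```python
import math
import secrets


def make_passphrase(wordlist, n_words=6, sep="-"):
    """Pick words uniformly at random using a cryptographically secure generator."""
    if len(set(wordlist)) != len(wordlist):
        raise ValueError("wordlist must not contain duplicates")
    return sep.join(secrets.choice(wordlist) for _ in range(n_words))


def entropy_bits(wordlist_size, n_words):
    # Each uniformly chosen word adds log2(wordlist_size) bits.
    return n_words * math.log2(wordlist_size)


demo_words = ["harbor", "maple", "quartz", "lantern", "orbit", "meadow", "copper", "violet"]
print(make_passphrase(demo_words))
print(round(entropy_bits(7776, 6), 1))    # 77.5 bits for six words from a 7,776-word list
print(entropy_bits(len(demo_words), 6))   # 18.0 bits: far too weak, demo only
```

The entropy figure is what matters: it depends only on the list size and the number of words, not on how unusual the resulting phrase looks.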

For educators and workforce programs:

– Map curricula to real task changes in local industries, with short, stackable modules.

– Blend technical skills with communication, ethics, and data literacy to future-proof learners.

– Create capstone projects with community partners to turn learning into visible impact.

For organizations and local governments:

– Define problems before procuring tools; measure success with user-centered metrics.

– Publish service dashboards and outage reports to align expectations and encourage fixes.

– Invest in open standards and interoperability so communities are not locked into brittle systems.
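Service dashboards and outage reports usually boil down to an availability figure for the reporting period. The sketch below computes one in Python from a list of outage intervals, merging overlaps so downtime is not double-counted and clipping outages that straddle the period boundaries. The function name and the `(start, end)` interval format are assumptions for illustration.

```python
from datetime import datetime, timedelta


def availability_pct(period_start, period_end, outages):
    """Percentage of the period with service up, given (start, end) outage intervals."""
    # Keep only outages that touch the period, clipped to its boundaries.
    clipped = sorted(
        (max(s, period_start), min(e, period_end))
        for s, e in outages
        if e > period_start and s < period_end
    )
    downtime = timedelta(0)
    cur_start = cur_end = None
    for s, e in clipped:
        if cur_end is None or s > cur_end:
            # Disjoint from the current run: close it out and start a new one.
            if cur_end is not None:
                downtime += cur_end - cur_start
            cur_start, cur_end = s, e
        else:
            # Overlapping outage: extend the current run.
            cur_end = max(cur_end, e)
    if cur_end is not None:
        downtime += cur_end - cur_start
    return 100 * (1 - downtime / (period_end - period_start))


june = (datetime(2024, 6, 1), datetime(2024, 7, 1))
outages = [
    (datetime(2024, 5, 31, 23), datetime(2024, 6, 1, 1)),   # spills over from May
    (datetime(2024, 6, 3, 10), datetime(2024, 6, 3, 12)),
    (datetime(2024, 6, 3, 11), datetime(2024, 6, 3, 13)),   # overlaps the previous one
]
print(round(availability_pct(*june, outages), 2))  # 99.44
```

Here the two overlapping June outages merge into three hours, and only one hour of the May outage counts, giving four hours of downtime in a 720-hour month.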

The thread connecting these actions is agency. We do not have to accept a future that merely happens to us. By asking clear questions—Who benefits? Who is at risk? How will we know it works?—and by pairing those questions with steady, evidence-based practice, society can turn diffuse technological change into concrete public value. The goal is not speed for its own sake, but momentum with direction, leaving people more capable, more connected, and more in control of the systems that now shape daily life.