Exploring Science: Latest Discoveries and Advancements

Outline

– The Computing Frontier: AI, accelerated hardware, and the push for efficient intelligence

– Biotechnology and Health Tech: precision tools, data-rich medicine, and ethical safeguards

– Energy and Climate Tech: storage, grids, and breakthroughs that cut emissions

– Space and Earth Observation: sensing, navigation, and resilient exploration systems

– Materials, Quantum, and Manufacturing: novel matter, secure computing, and agile production

Introduction: Why New Science and Technology Matter Right Now

Innovation is not a distant fireworks show; it is a steady sunrise that changes how we see the landscape. The latest discoveries are quietly altering the economics of computing, the accuracy of medicine, the reliability of power, the clarity of Earth observation, and the agility of manufacturing. For individuals, this translates to tools that save time and widen opportunity. For organizations, it becomes a question of where to invest, how to manage risk, and when to transition from pilot projects to scaled deployment.

Across disciplines, several patterns recur. First, capability growth is colliding with energy and data constraints, making efficiency the currency of progress. Second, open research and shared benchmarks are accelerating iteration while raising new questions about security, privacy, and fairness. Third, the journey from lab to market remains uneven: some fields leapfrog with modular designs and software-defined control, while others demand years of validation and standards work. Understanding these dynamics helps leaders avoid hype traps and anchor plans in evidence.

This article follows a simple arc: a map of the frontier, then a grounded look at five domains where change is tangible and testable. Expect pragmatic comparisons, trade-offs that matter in budgets and timelines, and examples that illustrate both promise and limits. The goal is clarity—enough detail to act, without drowning in jargon. If science is the engine, technology is the gearbox; shifting smoothly between them is the skill worth practicing.

The Computing Frontier: Efficient AI, Scalable Models, and Responsible Deployment

Artificial intelligence has moved from bespoke prototypes to broadly useful systems that understand language, images, and sensor streams with increasing fluency. Model scales have expanded by orders of magnitude, but raw size is no longer the only story. Efficiency is advancing through techniques like sparsity, low-precision arithmetic, and distillation, enabling capable models to run on modest devices at the network edge. This shift reduces latency, preserves privacy by keeping data local, and lowers energy costs—often the hidden line item in AI budgets.
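As a rough illustration of low-precision arithmetic, the sketch below applies symmetric 8-bit quantization to a handful of weights. The function names and sample values are hypothetical, and the single shared scale is a simplifying assumption; production toolchains typically add per-channel scales and calibration data.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero input, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.003, 0.9]  # hypothetical sample values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, worst_error)
```

Each weight now fits in one byte instead of four, at the price of a rounding error of at most half the scale. Whether that error is acceptable depends on the task, which is why accuracy should be re-measured after quantizing.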

Comparing deployment patterns reveals a pragmatic split. Centralized inference offers strong performance for complex tasks and global updates, but it depends on stable connectivity and concentrated compute. Edge inference trades peak accuracy for speed and resilience, which matters in contexts like industrial inspection, field maintenance, and assistive tools. A hybrid approach is emerging: lightweight models provide instant responses, while periodic syncs with larger back-end models deliver refinements. This mirrors content-delivery strategies that brought streaming to scale—push what is common, pull what is rare.
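One way to picture the hybrid pattern is a confidence-based router. The sketch below is illustrative only: `route`, the two stand-in models, and the 0.8 threshold are assumptions for this example, not a reference design.

```python
from typing import Callable, Tuple

Prediction = Tuple[str, float]  # (label, confidence in [0, 1])


def route(
    sample: str,
    edge_model: Callable[[str], Prediction],
    central_model: Callable[[str], Prediction],
    threshold: float = 0.8,
) -> Tuple[str, str]:
    """Return (label, source); escalate only when the edge model is unsure."""
    label, confidence = edge_model(sample)
    if confidence >= threshold:
        return label, "edge"
    try:
        label, _ = central_model(sample)
        return label, "central"
    except ConnectionError:
        # Connectivity is not guaranteed, so keep the local answer.
        return label, "edge-fallback"


def tiny_model(sample):  # hypothetical stand-in for an on-device model
    return ("defect", 0.95) if "crack" in sample else ("ok", 0.55)


def large_model(sample):  # hypothetical stand-in for a back-end model
    return ("corrosion", 0.99)


print(route("visible crack", tiny_model, large_model))
print(route("dull surface", tiny_model, large_model))
```

The threshold is the main tuning knob: raising it sends more traffic to the back end, which tends to improve accuracy while adding latency and bandwidth cost.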

Quality hinges on data curation and evaluation, not merely on parameter counts. Modern benchmarks incorporate adversarial cases, demographic balance checks, and domain-specific scoring to gauge robustness. Teams are adopting model cards and structured risk logs to document limitations—a cultural shift toward accountable engineering. It is also increasingly common to chain specialized models: e.g., a perception model flags anomalies, a retrieval system fetches domain references, and a policy model enforces guardrails. Such modularity simplifies updates and improves traceability.

Operational realities demand attention. Energy usage for large training runs can reach hundreds of megawatt-hours or more, so scheduling around cleaner grid windows and reusing pre-trained backbones provide tangible savings. Tooling now automates cost-aware experiments, early stopping, and dataset deduplication. Security is part of the fundamentals: red-teaming uncovers prompt injection, data exfiltration, and jailbreak attempts; rate limiting and content filters reduce abuse; and logging facilitates incident response. Practical tips for teams include:

– Start with clear task definitions and refusal rules to cut post-hoc patching.

– Prefer compact architectures fine-tuned on high-quality, de-duplicated data.

– Measure latency, throughput, and failure modes alongside accuracy to prevent regressions.

– Pilot at the edge where bandwidth is scarce; centralize only what must be shared.

Together, these practices turn impressive demos into dependable systems that serve users rather than surprise them.
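The tip about measuring latency, throughput, and failure modes alongside accuracy could look something like the following minimal harness. It is a sketch under simple assumptions: `evaluate` and `toy_model` are hypothetical names, and timings are taken one request at a time rather than under realistic load.

```python
import statistics
import time


def evaluate(model, examples):
    """Score a model on (input, expected) pairs, tracking speed and crashes."""
    if not examples:
        raise ValueError("examples must not be empty")
    latencies, correct, failures = [], 0, 0
    for features, expected in examples:
        start = time.perf_counter()
        try:
            prediction = model(features)
        except Exception:
            failures += 1  # a crash is a failure mode, not just a wrong answer
            continue
        latencies.append(time.perf_counter() - start)
        correct += int(prediction == expected)
    busy_time = sum(latencies)
    return {
        "accuracy": correct / len(examples),
        "failure_rate": failures / len(examples),
        "median_latency_s": statistics.median(latencies) if latencies else None,
        "throughput_per_s": len(latencies) / busy_time if busy_time > 0 else None,
    }


def toy_model(x):  # hypothetical stand-in for a real model
    if x < 0:
        raise ValueError("negative input not supported")
    return x * 2


report = evaluate(toy_model, [(1, 2), (2, 4), (3, 7), (-1, -2)])
print(report)
```

Storing a report like this for every candidate model makes regressions visible: a change that lifts accuracy while doubling latency shows up in the same record.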

Biotechnology and Health Tech: Precision Tools, Real-World Data, and Guardrails

Biotechnology is translating molecular insight into practical care, compressing timelines from discovery to intervention. Gene-editing platforms have graduated from proof-of-concept to targeted applications in blood disorders and rare diseases, with delivery systems improving tissue specificity. Meanwhile, the cost of DNA sequencing has fallen by several orders of magnitude since the early 2000s and throughput has risen sharply, enabling population-scale studies that link genetic variation to health outcomes. These datasets, when integrated with clinical records and wearable signals, support predictive models that can flag risk earlier than conventional screening.

Comparisons across modalities clarify strengths and limits. Small molecules remain versatile and cost-effective for many conditions, biologics offer potent specificity at higher manufacturing complexity, and cell-based therapies promise personalization but face logistics and durability challenges. Diagnostics follow similar trade-offs: lab-based assays achieve high sensitivity but require infrastructure; point-of-care tests prioritize speed and access; continuous monitors deliver trendlines that guide behavior but may drift and demand calibration. In practice, blended pathways work well: an at-home test triggers a confirmatory lab assay; a wearable trend prompts a telehealth consult; a genomic finding informs dosage adjustments.

Real-world evidence is redefining validation. Instead of relying solely on narrow clinical cohorts, researchers now combine registry data, consented device streams, and pragmatic trials to assess effectiveness in diverse populations. This broadens inclusion while revealing context-dependent effects—how climate, diet, and adherence shape outcomes. However, more data is not a cure-all; bias can creep in through missingness, device access, or labeling choices. Mitigation steps include:

– Pre-registering endpoints and publishing protocols to reduce selective reporting.

– Auditing datasets for representation and measurement error.

– Stress-testing models across subgroups and environments before deployment.

– Enforcing clear off-ramps when confidence is low, with human oversight by default.
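Two of the steps above, subgroup stress-testing and low-confidence off-ramps, can be sketched in a few lines. Everything here is illustrative: the record layout, the subgroup names, and the 0.3 and 0.7 cut-offs are assumptions for the example, not clinical guidance.

```python
from collections import defaultdict


def accuracy_by_subgroup(records):
    """records: iterable of (subgroup, predicted, actual) tuples."""
    hits, totals = defaultdict(int), defaultdict(int)
    for subgroup, predicted, actual in records:
        totals[subgroup] += 1
        hits[subgroup] += int(predicted == actual)
    return {group: hits[group] / totals[group] for group in totals}


def decide(probability, low=0.3, high=0.7):
    """Off-ramp: hand the case to a person when the score is in the uncertain band."""
    if probability >= high:
        return "flag"
    if probability <= low:
        return "clear"
    return "refer_to_human"


records = [  # hypothetical audit sample
    ("clinic_a", "high", "high"),
    ("clinic_a", "low", "low"),
    ("clinic_b", "high", "low"),
    ("clinic_b", "low", "low"),
]
by_group = accuracy_by_subgroup(records)
gap = max(by_group.values()) - min(by_group.values())
print(by_group, gap)
print(decide(0.82), decide(0.5), decide(0.1))
```

A large gap between the best and worst subgroup is a signal to pause deployment and investigate, even when the overall average looks healthy.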

Ethics and privacy are integral design constraints, not afterthoughts. De-identification techniques, on-device processing, and federated learning reduce exposure of sensitive records. Consent flows are becoming more granular, letting participants opt into specific uses while receiving feedback about findings that matter to them. Regulation continues to evolve toward risk-based pathways that match oversight to potential harm. The overall direction is hopeful yet disciplined: rapid iteration in the lab, deliberate steps to the clinic, and continual monitoring once tools meet real patients.

Energy and Climate Tech: Storage, Grids, and Pathways to Lower Emissions

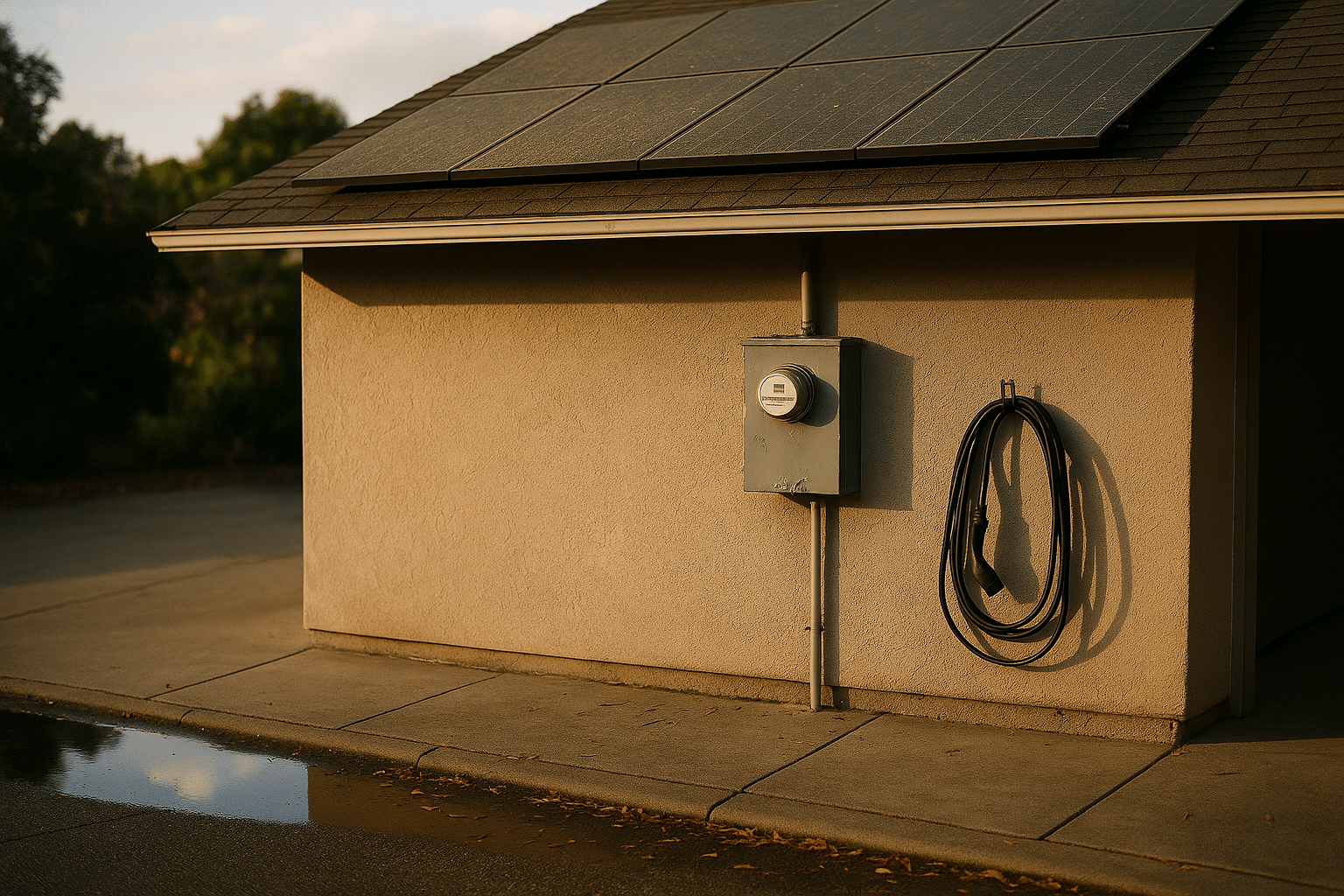

The energy transition is no longer theory; capacity additions for wind and solar have surged, and battery storage is maturing from pilot projects to multi-hour assets that stabilize grids. Over roughly a decade, average battery pack prices fell dramatically—from four-digit costs per kilowatt-hour to low hundreds—unlocking new economics for mobility and stationary storage. While price curves are uneven year to year, the direction is clear: manufacturing scale, materials innovation, and streamlined balance-of-system designs continue to reduce total installed costs.

Comparing flexibility options helps planners avoid bottlenecks. Batteries excel at fast response and short to medium durations; pumped hydro provides bulk storage where geography allows; demand response shifts consumption with smart controls; thermal storage captures heat for industrial and district uses. When combined with more granular forecasting, these resources tame variability without overbuilding. Grid modernization completes the picture: advanced inverters support voltage control, dynamic line ratings squeeze more capacity from existing corridors, and standardized data interfaces enable coordination across utilities, aggregators, and customers.

Breakthroughs are percolating. High-temperature superconducting cables promise compact corridors in dense cities. Next-generation photovoltaics aim to lift efficiency with tandem architectures, and solid-state batteries target higher energy density with improved safety profiles. On the horizon, fusion research has reported laboratory shots in which the fusion energy released exceeded the energy delivered to the fuel target, though stable and economical operation remains a long-term engineering problem involving materials resilience, heat management, and maintenance automation. Carbon removal technologies, from reforestation to mineralization and direct air capture, are diversifying, but scale, permanence, and monitoring still define credibility.

Decision-makers weigh trade-offs across time, land use, and reliability. A practical planning checklist might include:

– Map hourly demand and resource profiles to select complementary assets.

– Price flexibility (ramping, reserves) separately from energy to reveal value.

– Track lifecycle emissions and supply-chain risks, not only upfront costs.

– Pilot local microgrids for resilience in outage-prone regions.

– Invest in workforce training to accelerate safe interconnection and maintenance.

With these steps, communities can pursue cleaner energy while respecting budgets and ensuring dependable service.
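To make the first checklist item concrete, the sketch below runs a greedy hourly battery dispatch against demand and solar profiles. It is a toy model under stated assumptions: the function name, the four-hour profiles, and the battery ratings are hypothetical, losses are applied on charging only, and real planning tools optimize over prices and forecasts rather than acting hour by hour.

```python
def dispatch(demand_kw, solar_kw, capacity_kwh, power_kw, efficiency=0.9):
    """Greedy hourly dispatch: charge on surplus, discharge on deficit.

    Returns the grid import needed in each hour (kWh, with one-hour steps).
    """
    stored = 0.0
    grid_import = []
    for demand, solar in zip(demand_kw, solar_kw):
        net = solar - demand
        if net >= 0:
            # Surplus: charge, limited by power rating and remaining headroom.
            charge = min(net, power_kw, (capacity_kwh - stored) / efficiency)
            stored += charge * efficiency
            grid_import.append(0.0)
        else:
            # Deficit: discharge, limited by power rating and stored energy.
            discharge = min(-net, power_kw, stored)
            stored -= discharge
            grid_import.append(-net - discharge)
    return grid_import


demand = [3, 3, 3, 3]  # hypothetical hourly demand, kW
solar = [0, 6, 6, 0]   # hypothetical hourly solar output, kW
with_battery = dispatch(demand, solar, capacity_kwh=4, power_kw=2)
without_battery = dispatch(demand, solar, capacity_kwh=0, power_kw=2)
print(sum(with_battery), sum(without_battery))
```

Comparing total imports with and without storage gives a first-pass estimate of what a given battery size buys for a given load shape, before any detailed study.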

Space and Earth Observation: Sensing a Dynamic Planet and Building for Resilience

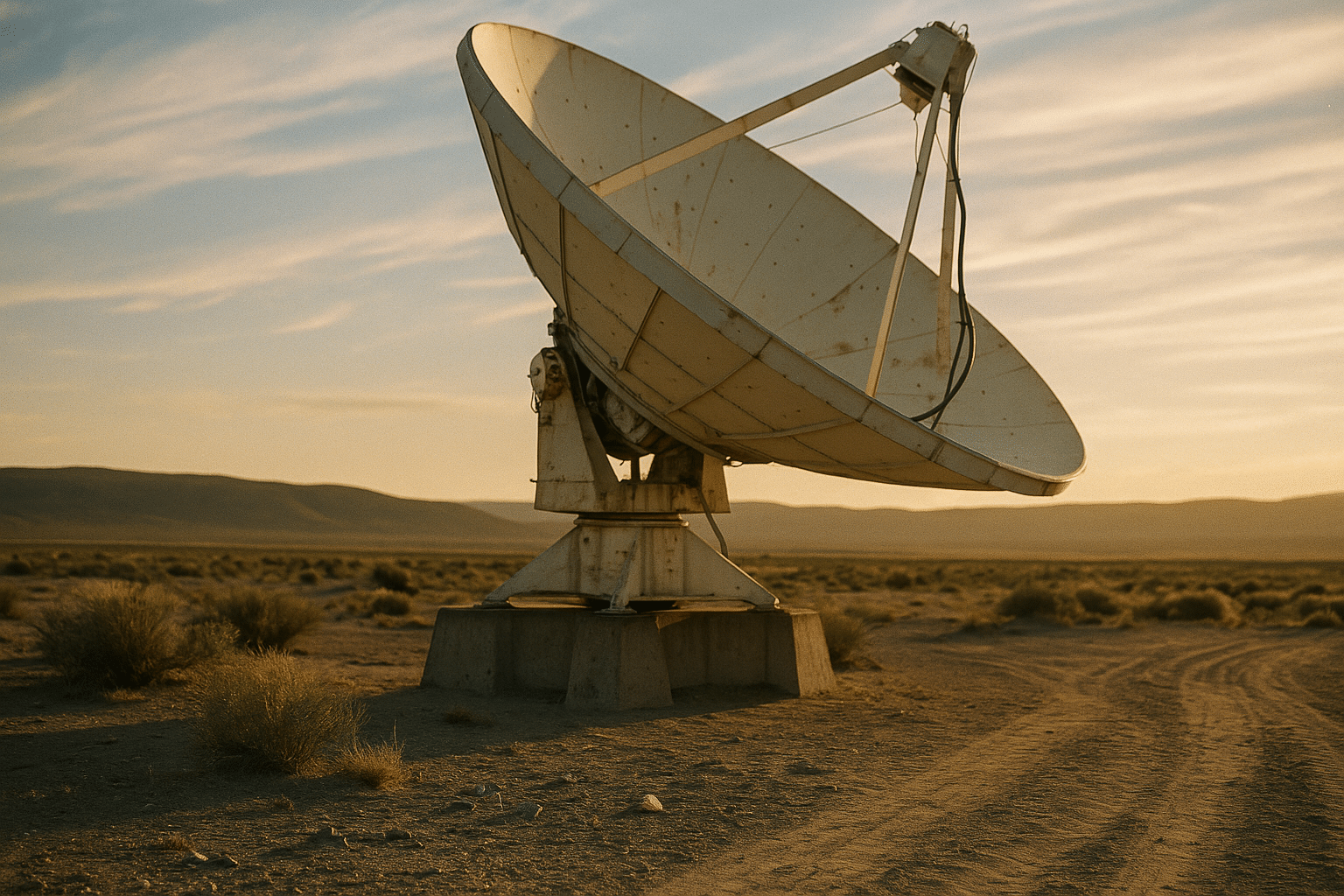

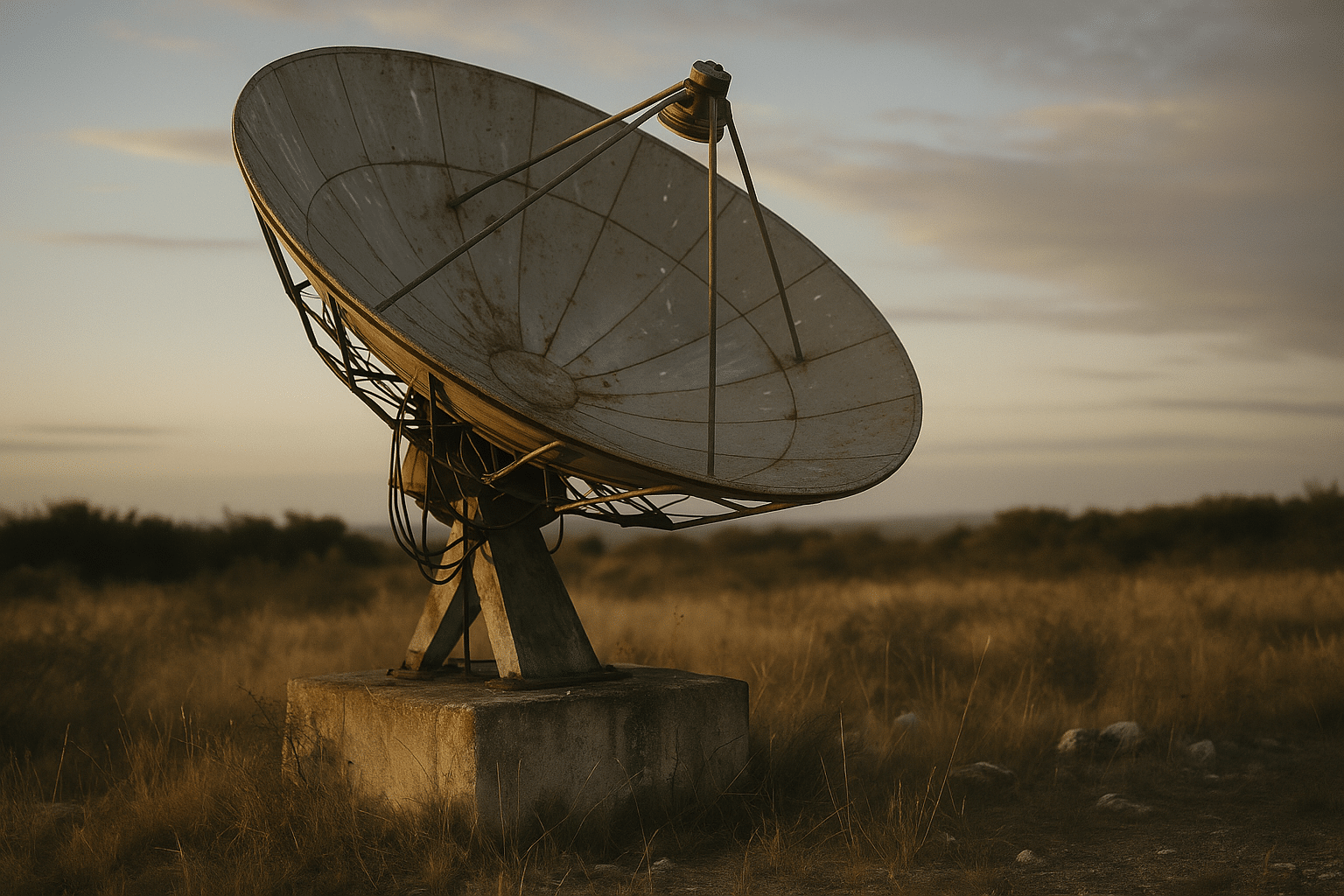

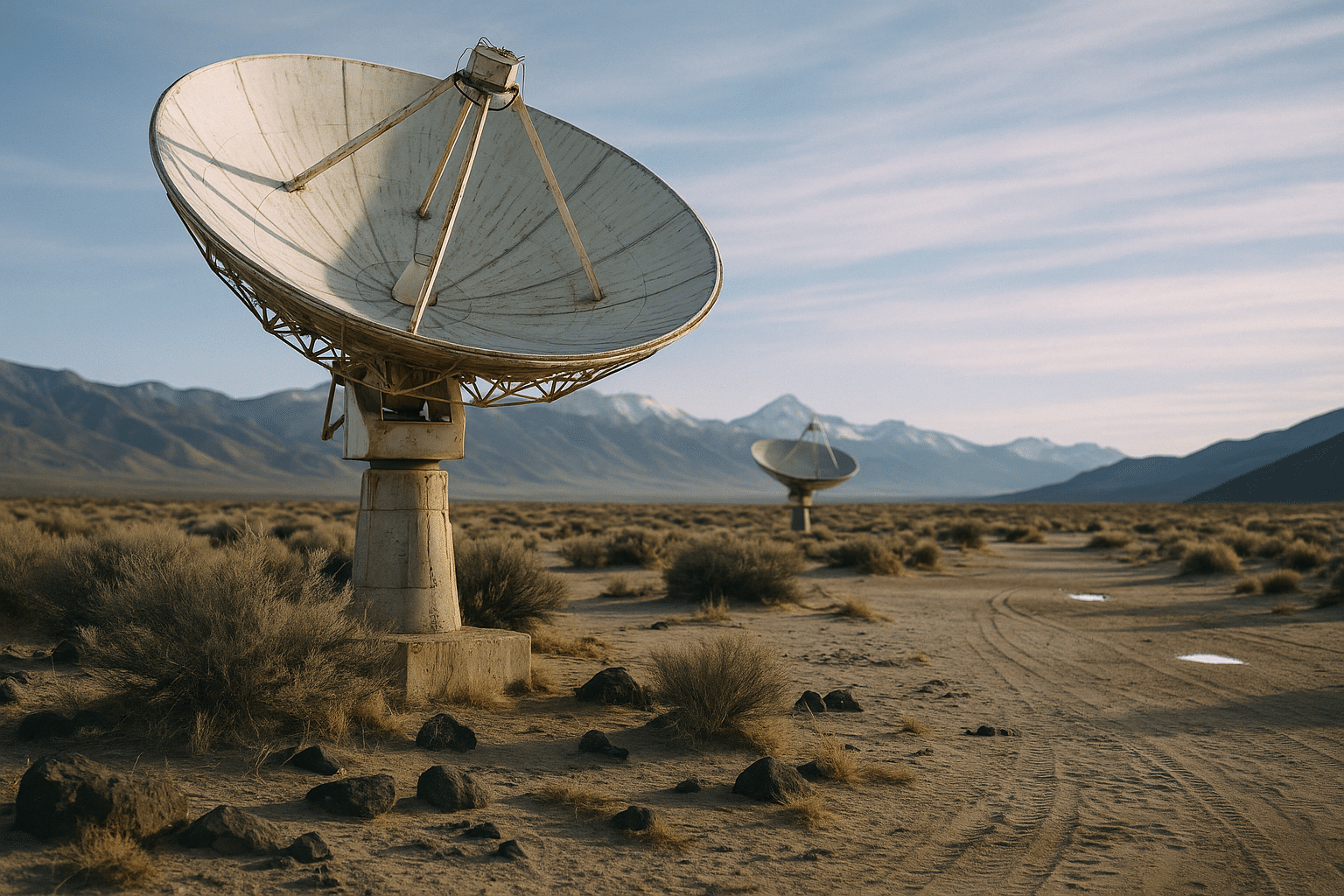

Space technology is increasingly an Earth technology. Constellations of small satellites now offer frequent revisits, producing imagery and measurements that help farmers tune irrigation, insurers assess risk, and emergency teams coordinate during fires and floods. Radar sensors peer through clouds and darkness, while multispectral and thermal bands reveal vegetation stress, heat islands, and infrastructure anomalies. These streams pair with ground networks to produce near-real-time situational awareness that was once the domain of rare, bespoke missions.
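A common way to turn multispectral bands into a vegetation signal is the Normalized Difference Vegetation Index, computed as (NIR - Red) / (NIR + Red). The sketch below applies it to a few pixels. The reflectance values and the 0.3 stress threshold are hypothetical; meaningful thresholds depend on crop, season, and sensor.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel's reflectances."""
    total = nir + red
    if total == 0:
        return 0.0  # convention chosen here for pixels with no signal
    return (nir - red) / total


def flag_stress(nir_band, red_band, threshold=0.3):
    """Return indices of pixels whose NDVI falls below the threshold."""
    return [
        index
        for index, (nir, red) in enumerate(zip(nir_band, red_band))
        if ndvi(nir, red) < threshold
    ]


nir_band = [0.50, 0.30, 0.45]  # hypothetical near-infrared reflectances
red_band = [0.10, 0.20, 0.05]  # hypothetical red reflectances
print(flag_stress(nir_band, red_band))
```

Healthy vegetation reflects strongly in the near-infrared and absorbs red light, so a low index relative to a field's own history is often more informative than any fixed cut-off.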

Launch and spacecraft design have changed the cost calculus. Reusable stages have lowered average costs per kilogram and encouraged a faster cadence of incremental upgrades. Standardized satellite buses, modular instruments, and software-defined radios shorten development cycles from years to months. However, low-cost access must be balanced with stewardship: space traffic management, debris mitigation, and spectrum coordination are essential to avoid congestion. Techniques such as autonomous collision avoidance, controlled deorbiting, and surface treatments that reduce satellite brightness in the night sky are becoming more common.

For Earth science, combining modalities outperforms any single sensor. Optical images inform land use; radar quantifies ground motion and flood extent; radio occultation refines weather models; GNSS reflectometry hints at soil moisture and ocean roughness. Fusion pipelines register and filter these inputs, flagging outliers and quantifying uncertainty. On the ground, edge processors in remote stations pre-screen data to conserve bandwidth, while cloud archives preserve long time-series that support climate baselines and policy planning. This layering enables:

– Faster disaster mapping that directs responders to the highest-need zones.

– Agricultural advisories that optimize inputs and reduce runoff.

– Infrastructure monitoring that detects subtle shifts before failure.

– Conservation programs that verify outcomes with transparent metrics.
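The fusion step described above (flagging outliers and quantifying uncertainty) can be sketched with inverse-variance weighting. This is one simple approach under stated assumptions, not a description of any particular pipeline: the median-based outlier rule, the cut-off of three standard deviations, and the sample readings are all hypothetical.

```python
import statistics


def fuse(estimates, z_cutoff=3.0):
    """Combine (value, standard_deviation) readings from different sensors.

    Flags readings far from the median, then merges the rest by
    inverse-variance weighting. Returns (fused_value, fused_std, outlier_indices).
    """
    median = statistics.median(value for value, _ in estimates)
    kept, outliers = [], []
    for index, (value, std) in enumerate(estimates):
        if abs(value - median) > z_cutoff * std:
            outliers.append(index)
        else:
            kept.append((value, std))
    if not kept:
        raise ValueError("every reading was flagged as an outlier")
    weights = [1.0 / std**2 for _, std in kept]
    fused = sum(w * value for w, (value, _) in zip(weights, kept)) / sum(weights)
    fused_std = (1.0 / sum(weights)) ** 0.5
    return fused, fused_std, outliers


# Hypothetical readings of one quantity from three sources.
readings = [(10.0, 1.0), (10.4, 0.5), (25.0, 1.0)]
value, uncertainty, flagged = fuse(readings)
print(value, uncertainty, flagged)
```

More precise sensors receive more weight, and the fused uncertainty is smaller than that of any single retained reading, which is the quantitative sense in which combining modalities can outperform one sensor.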

Exploration beyond Earth continues to feed back improvements. Autonomy honed for navigation becomes useful for robotics in mines and warehouses. Radiation-hardened components inspire robust electronics for harsh industrial sites. And planetary protection protocols remind engineers how to design for contamination control—wisdom that translates neatly to clean manufacturing and advanced labs. The guiding principle is reciprocity: space advances sharpen our view of Earth, and earthly needs shape the next generation of spacecraft.

Materials, Quantum, and Manufacturing: From Novel Matter to Agile Production

Materials science is widening the palette for engineers. Two-dimensional materials, complex alloys, and metamaterials deliver properties (conductivity, strength-to-weight ratio, tunable optics) that conventional options struggle to match. In energy, perovskite-based photovoltaics have recorded lab efficiencies above 25 percent under standard test conditions, spurring efforts to stabilize modules against moisture and heat. Solid-state battery chemistries promise higher energy density and reduced flammability by replacing liquid electrolytes with ceramic or polymer alternatives, though interface resistance and manufacturing yield remain active challenges.

Quantum technologies are entering a measured adolescence. Quantum key distribution and post-quantum cryptography address security from opposite directions: one through physics-based links, the other through classical algorithms designed to resist quantum attacks. On the computing side, prototype processors are growing in qubit count and coherence, while error-correction schemes demonstrate logical operations at improving fidelities. Near-term use cases emphasize simulation, optimization, and sensing, where even modest quantum advantages could complement classical accelerators. Realistically, hybrid workflows will dominate: classical solvers narrow the search space; quantum routines explore structured subproblems; classical post-processing refines results.

Manufacturing is becoming more software-defined. Additive techniques create lattice structures with high stiffness and low mass, reduce tooling lead times, and enable rapid iteration. Coupled with topology optimization and digital twins, factories can validate designs virtually before committing material. Machine vision and tactile sensing improve quality control, while collaborative robots handle repetitive tasks and ergonomically risky motions. Supply chains, still sensitive to shocks, are experimenting with regionalized production, common part libraries, and predictive inventory informed by real-time demand signals.

A practical lens for adoption weighs maturity, integration cost, and regulation. For example:

– Novel materials: pilot components in low-risk environments, gather aging data, and plan recycling paths early.

– Quantum tools: start with simulation sandboxes, track algorithmic benchmarks, and adopt crypto agility to prepare for standard updates.

– Additive manufacturing: qualify processes for critical parts, monitor porosity and surface finish, and blend printed and machined steps to balance speed and precision.

By aligning research sprints with production rhythms, organizations turn breakthroughs into durable advantages rather than isolated experiments.

Conclusion: Turning Discovery into Decisions

The throughline across these domains is disciplined ambition: aim high, measure honestly, and build for reliability. For researchers, that means sharing artifacts and uncertainty estimates that others can test. For product teams, it means scoping problems narrowly, choosing efficient architectures, and documenting failure modes before scaling. For educators and policymakers, it means updating curricula and incentives so that safety, privacy, and equity travel with performance. Science supplies the spark; technology turns it into light you can work by. Choose pilots that matter, instrument them well, and let evidence guide the next step.