Exploring Science: Latest Discoveries and Advancements

Outline:

– Smarter intelligence at the edge of the network

– Quantum leaps and the rise of novel materials

– Bioengineering and precision health technologies

– Clean energy systems accelerating the transition

– Space and Earth observation redefining our view

Introduction

Science and technology move in tandem, nudging society forward through careful experiments, iterative engineering, and measured leaps. The latest wave of progress arrives not as a single headline, but as a mosaic of advances that compound: smaller and more efficient processors, new quantum-era techniques, reprogrammable biology, cleaner energy systems, and a sharper lens on our planet. This article connects those pieces, explains how they fit, and highlights where evidence shows momentum building.

Intelligence at the Edge: Faster Decisions, Leaner Models, Safer Data

Artificial intelligence once lived almost entirely in distant data centers. Today, it increasingly runs on devices close to where data is generated: sensors, vehicles, medical wearables, and factory controllers. Running models at the edge can cut round‑trip latency from hundreds of milliseconds to a few milliseconds when inference happens locally, which matters for safety and responsiveness, and avoiding constant radio transmission helps battery life. It also keeps sensitive information on the device, reducing exposure risk and easing compliance, because raw inputs are processed locally and only compressed insights are shared.

Compared with a cloud‑only approach, edge deployments trade raw horsepower for speed and privacy. Compact neural networks distilled from larger models can deliver near‑parity accuracy on targeted tasks while consuming a fraction of the energy: tens of millijoules per inference, versus orders of magnitude more when raw data is streamed over wireless links. Hardware trends reinforce this shift: specialized accelerators and memory‑centric designs reduce data movement, which often dominates power draw. In practice, a well‑tuned edge pipeline can cut bandwidth needs by over 90% by transmitting events rather than continuous feeds.
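To make the bandwidth claim concrete, here is a back‑of‑the‑envelope sketch. Every number in it (sample rate, sample size, event size, event frequency) is an illustrative assumption, not a measurement from a real deployment.

```python
def bandwidth_savings(stream_bytes_per_s: float,
                      event_bytes: float,
                      events_per_s: float) -> float:
    """Fraction of bandwidth saved by sending events instead of a raw stream."""
    event_bytes_per_s = event_bytes * events_per_s
    return 1.0 - event_bytes_per_s / stream_bytes_per_s

# Hypothetical vibration sensor: 1,000 samples per second at 2 bytes each.
raw_rate = 1_000 * 2

# Hypothetical event traffic: one 64-byte anomaly report every 10 seconds.
savings = bandwidth_savings(raw_rate, event_bytes=64, events_per_s=0.1)
print(f"Bandwidth saved: {savings:.1%}")
```

Under these assumptions the event stream uses well under 1% of the raw bandwidth; a chattier sensor or larger event payloads would narrow the gap.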

Pragmatic design patterns are emerging:

– Federated learning: trains models across many devices without centralizing raw data, sharing only gradients or updates.

– TinyML workflows: compress, quantize, and prune models so they fit into kilobytes to low megabytes of memory.

– Hybrid inference: runs lightweight screening locally and reserves complex cases for a cloud endpoint.
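The federated pattern above can be sketched in a few lines. This is a toy version of federated averaging under stated assumptions: two hypothetical devices, a two‑parameter linear model, and noise‑free data. The function names are illustrative and do not come from any particular framework.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pair held privately on one device

def local_update(weights: List[float], data: List[Sample],
                 lr: float = 0.1) -> List[float]:
    """One pass of stochastic gradient descent for y = w0 + w1 * x on local data."""
    w0, w1 = weights
    for x, y in data:
        err = (w0 + w1 * x) - y  # prediction error on this sample
        w0 -= lr * err
        w1 -= lr * err * x
    return [w0, w1]

def federated_average(client_weights: List[List[float]],
                      client_sizes: List[int]) -> List[float]:
    """Combine client models, weighting each by its number of local samples."""
    total = sum(client_sizes)
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(len(client_weights[0]))
    ]

# Hypothetical devices; both hold noise-free samples of y = 2x + 1.
clients = [
    [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)],
    [(1.5, 4.0), (2.0, 5.0)],
]

global_weights = [0.0, 0.0]
for _ in range(300):
    # Each device trains locally; only the resulting weights are shared.
    updates = [local_update(global_weights, data) for data in clients]
    global_weights = federated_average(updates, [len(d) for d in clients])

print(global_weights)  # approaches [1.0, 2.0]
```

The raw samples never leave the `clients` lists; the server sees only model weights. Real systems usually add secure aggregation, client sampling, and handling for devices that drop out mid‑round.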

Not every task suits the edge. Large‑scale analytics, cross‑domain search, and training new foundation models still favor centralized resources. The art is in partitioning: send only what must be shared, compute what can be decided locally, and validate outcomes against known baselines. Clear metrics—latency targets, energy per inference, on‑device accuracy, and network cost—prevent wishful thinking and anchor roadmaps in measurable outcomes.
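One way to express that partitioning is a confidence‑gated router: answer locally when the small model is sure, escalate otherwise. The sketch below assumes a scalar sensor reading and uses two stand‑in models (`toy_edge_model`, `toy_cloud_model`) that are hypothetical; the 0.9 threshold is likewise an arbitrary choice that would need tuning against the metrics above.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

Model = Callable[[float], Tuple[str, float]]  # returns (label, confidence)

@dataclass
class Decision:
    label: str
    confidence: float
    source: str  # "edge" or "cloud"

def hybrid_infer(sample: float, edge_model: Model, cloud_model: Model,
                 threshold: float = 0.9) -> Decision:
    """Decide locally when the edge model is confident; otherwise escalate."""
    label, confidence = edge_model(sample)
    if confidence >= threshold:
        return Decision(label, confidence, "edge")
    label, confidence = cloud_model(sample)
    return Decision(label, confidence, "cloud")

def toy_edge_model(reading: float) -> Tuple[str, float]:
    """Stand-in for a compact on-device model; surer far from the boundary."""
    label = "anomaly" if reading > 50 else "normal"
    confidence = min(1.0, 0.5 + abs(reading - 50) / 100)
    return label, confidence

def toy_cloud_model(reading: float) -> Tuple[str, float]:
    """Stand-in for a larger remote model (a network call in a real system)."""
    return ("anomaly" if reading > 52 else "normal"), 0.99

print(hybrid_infer(95.0, toy_edge_model, toy_cloud_model))  # handled on device
print(hybrid_infer(55.0, toy_edge_model, toy_cloud_model))  # escalated
```

Logging the `source` field gives a direct measure of how much traffic the edge absorbs, which feeds the latency and network‑cost metrics.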

Quantum Steps and Novel Materials: Useful Gains Before Full Fault Tolerance

Headlines often frame quantum computing as an all‑or‑nothing prospect, but the reality is more incremental and, importantly, already useful. While fully error‑corrected machines remain a long‑term goal with significant overhead, near‑term devices and algorithms are helping researchers simulate small molecules, probe reaction pathways, and benchmark optimization methods. Even when classical approaches remain competitive, cross‑verification tightens confidence in results and guides where quantum resources can add value first.

Progress is not only about qubits; materials science underpins stability and scalability. Low‑defect substrates, high‑purity superconductors, and carefully engineered interfaces are extending coherence times from microseconds toward milliseconds in controlled settings. Meanwhile, two‑dimensional materials and layered heterostructures offer tunable electronic properties, enabling detectors with single‑photon sensitivity and room‑temperature magnetometers that can map faint fields. These components serve broader science too, improving imaging, timing, and low‑noise measurement beyond computing alone.

Key comparisons clarify the landscape:

– Error mitigation vs. full correction: mitigation reduces noise in the short term; correction targets long‑term scalability but at heavy resource cost.

– Analog simulation vs. digital gates: analog can capture specific physics efficiently; digital brings generality with stricter requirements.

– Hybrid workflows: classical optimizers guide quantum routines, balancing cost and accuracy.
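The hybrid pattern can be illustrated with a deliberately tiny example. The sketch below is an assumption‑laden toy: the "quantum routine" is a single qubit rotated by RY(theta), whose Z expectation is cos(theta), computed here exactly on a classical machine rather than on hardware. The classical side estimates gradients with the parameter‑shift rule and runs plain gradient descent.

```python
import math

def expectation_z(theta: float) -> float:
    """Exact <Z> for the state RY(theta)|0>, standing in for a device call."""
    return math.cos(theta)

def parameter_shift_gradient(f, theta: float) -> float:
    """Gradient from two shifted evaluations, as one would request from hardware."""
    return 0.5 * (f(theta + math.pi / 2) - f(theta - math.pi / 2))

theta = 0.3          # arbitrary starting angle (assumption)
learning_rate = 0.4  # arbitrary step size (assumption)

for _ in range(100):
    # Classical optimizer step driven by "quantum" expectation values.
    theta -= learning_rate * parameter_shift_gradient(expectation_z, theta)

energy = expectation_z(theta)
print(f"theta = {theta:.4f}, <Z> = {energy:.4f}")
```

The loop drives theta toward pi, where the expectation value reaches its minimum of -1. On real devices each evaluation is a noisy estimate from a finite number of shots, so the cost and accuracy trade‑off mentioned above shows up as a choice of shot count per step.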

Measured expectations help. Benchmarks that report end‑to‑end fidelity, wall‑clock runtime, and uncertainty bounds communicate practical capability better than isolated component metrics. On the materials side, durability under cycling, tolerance to manufacturing variation, and thermal stability often determine whether a laboratory prototype can thrive outside controlled environments. As fabrication refines and algorithms mature, early quantum‑enhanced tools and novel sensors will complement, not replace, trusted classical techniques—expanding the scientific toolbox rather than discarding it.
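As one example of reporting an uncertainty bound, the sketch below computes a Wilson score interval for a success probability estimated from repeated shots. The shot counts are made up for illustration, and treating a benchmark as a simple pass/fail count per shot is itself a simplifying assumption.

```python
import math
from typing import Tuple

def wilson_interval(successes: int, shots: int,
                    z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    p = successes / shots
    denom = 1 + z**2 / shots
    center = (p + z**2 / (2 * shots)) / denom
    half_width = z * math.sqrt(p * (1 - p) / shots + z**2 / (4 * shots**2)) / denom
    return center - half_width, center + half_width

# Hypothetical benchmark: 950 correct outcomes in 1,000 shots.
low, high = wilson_interval(950, 1_000)
print(f"success rate 0.950, 95% interval [{low:.3f}, {high:.3f}]")
```

Quoting the interval alongside the point estimate makes it clear how much of a reported improvement could be sampling noise.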

Bioengineering and Precision Health: Data‑Rich Medicine Without the Hype

Biology’s shift from observation to engineering is accelerating. Gene‑editing techniques now allow single‑letter changes (base editing) and targeted rewrites (prime editing), widening the range of treatable conditions that have clear genetic drivers. Parallel advances in delivery, including lipid carriers, viral vectors tuned for specific tissues, and implantable reservoirs, improve targeting and dosing. Meanwhile, the cost of sequencing a human genome has plunged from millions of dollars two decades ago to well under a thousand today, enabling clinicians to link interventions with molecular evidence instead of intuition alone.

Wearables and at‑home diagnostics turn episodic care into continuous monitoring. Heart rate variability, glucose trends, and sleep stages can be tracked at minute‑level resolution, flagging anomalies earlier and informing therapy adjustments. Compared with intermittent lab visits, longitudinal streams reveal patterns that one‑off measurements miss. Privacy remains central: extracting insights on device, sharing only anonymized features, and allowing patients to control data lifecycles are design choices that build trust and reduce risk.
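A minimal sketch of on‑device anomaly flagging follows. It assumes a simple rolling z‑score over the most recent readings; the window size, threshold, and glucose values are illustrative only, and nothing here is clinically validated.

```python
from collections import deque
from statistics import mean, stdev
from typing import List, Sequence

def flag_anomalies(readings: Sequence[float], window: int = 5,
                   z_threshold: float = 3.0) -> List[int]:
    """Return indices of readings that deviate sharply from the recent window."""
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu, sigma = mean(history), stdev(history)
            if sigma > 0 and abs(value - mu) / sigma > z_threshold:
                flagged.append(i)
        history.append(value)  # the window always tracks the latest readings
    return flagged

# Hypothetical glucose readings in mg/dL, one per interval.
glucose = [100, 102, 99, 101, 100, 103, 98, 160, 101, 100]
print(flag_anomalies(glucose))  # -> [7]
```

Only the flagged indices, not the raw stream, would need to leave the device, which fits the privacy pattern described above.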

Useful distinctions keep projects grounded:

– Ex vivo vs. in vivo editing: outside‑the‑body workflows allow quality checks before reinfusion; in‑body approaches aim for broader reach with tougher delivery challenges.

– Therapeutic vs. diagnostic AI: models that suggest treatments and models that flag conditions both act as decision support, which requires transparent reasoning and calibrated confidence, not only accuracy.

– Acute vs. preventive value: small, consistent benefits at population scale can rival dramatic results in narrow groups.
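The point about calibration can be made concrete. The sketch below compares two hypothetical classifiers with identical accuracy but different confidence levels, using the Brier score and a simple binned expected calibration error. The predictions and outcomes are invented for illustration.

```python
from typing import List, Sequence

def brier_score(probs: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared gap between predicted probability and the 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

def expected_calibration_error(probs: Sequence[float], outcomes: Sequence[int],
                               n_bins: int = 5) -> float:
    """Weighted average gap between mean confidence and observed rate per bin."""
    bins: List[list] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(p for p, _ in members) / len(members)
        observed = sum(y for _, y in members) / len(members)
        ece += len(members) / len(probs) * abs(avg_conf - observed)
    return ece

# Hypothetical outcomes: 9 of the first 10 cases positive, 1 of the last 10.
outcomes = [1] * 9 + [0] + [1] + [0] * 9
calibrated = [0.9] * 10 + [0.1] * 10       # says 90% and is right 90% of the time
overconfident = [0.99] * 10 + [0.01] * 10  # same decisions, inflated certainty

for name, probs in [("calibrated", calibrated), ("overconfident", overconfident)]:
    print(name, round(brier_score(probs, outcomes), 4),
          round(expected_calibration_error(probs, outcomes), 4))
```

Both models make the same calls and are equally accurate, yet the overconfident one scores worse on both measures, which is exactly the gap that accuracy alone hides.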

Regulatory science is becoming more adaptive, with rolling reviews and real‑world evidence augmenting trial data. Still, durable progress depends on basics: control groups, preregistered endpoints, and clear adverse‑event reporting. Laboratories and clinics that treat models as living documents—updated as new data arrives—avoid brittle protocols. The near future looks less like miracle cures and more like steady gains: earlier detection, fewer side effects, and therapies matched to the molecular story of each condition.

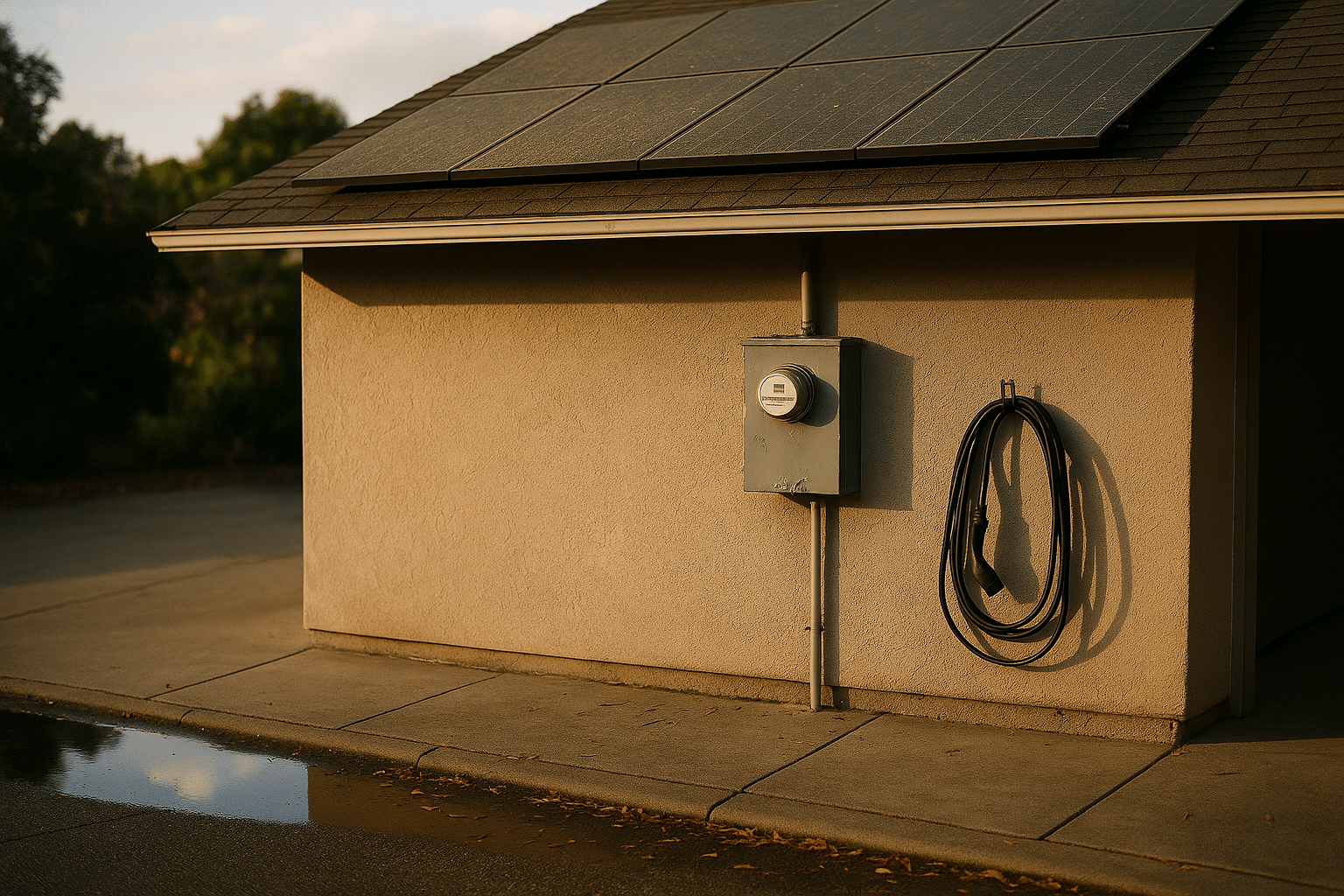

Clean Energy Systems: Converting Progress Into Reliable Power

Energy technologies are maturing from promising prototypes into dependable infrastructure. Over the past decade, the levelized cost of solar and wind power has fallen dramatically as manufacturing scaled and designs matured. Laboratory records for tandem solar cells have edged past thirty percent conversion efficiency under standard test conditions, while fielded modules prioritize reliability, temperature tolerance, and stable output over long horizons. Heat pumps typically deliver two to four units of heat per unit of electricity in moderate climates, trimming emissions from buildings with practical payback timelines.

Storage ties the system together. Grid‑scale batteries now offer multi‑hour discharge windows suitable for evening peaks, and long‑duration options—thermal storage, compressed gases, and flow chemistries—target multiday coverage. Each brings trade‑offs in energy density, cycle life, safety, and cost. For example, iron‑based chemistries trade compactness for benign materials and robust lifetimes, while higher‑energy cells deliver dense storage with stricter thermal management. Paired with smart inverters and advanced forecasting, operators can shave peaks, smooth variability, and deliver synthetic inertia for grid stability.
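Peak shaving can be sketched as a greedy hourly dispatch: charge when load is below a cap, discharge when it is above. The load profile, battery size, and 90% efficiency below are assumptions chosen for illustration, and the one‑hour time step lets kW and kWh be used interchangeably.

```python
from typing import List, Sequence

def peak_shave(load_kw: Sequence[float], cap_kw: float, capacity_kwh: float,
               power_kw: float, efficiency: float = 0.9) -> List[float]:
    """Greedy hourly dispatch; returns the net grid load after the battery acts."""
    soc_kwh = 0.0  # state of charge; battery starts empty
    net = []
    for load in load_kw:
        if load > cap_kw:
            # Discharge, limited by the excess, the power rating, and stored energy.
            discharge = min(load - cap_kw, power_kw, soc_kwh)
            soc_kwh -= discharge
            net.append(load - discharge)
        else:
            # Charge using headroom under the cap; losses are applied on the way in.
            headroom = min(cap_kw - load, power_kw)
            stored = min(headroom * efficiency, capacity_kwh - soc_kwh)
            soc_kwh += stored
            net.append(load + stored / efficiency)
    return net

# Hypothetical site: quiet afternoon followed by an evening peak.
load = [40, 40, 40, 90, 100, 60]
net = peak_shave(load, cap_kw=70, capacity_kwh=60, power_kw=30)
print([round(v, 1) for v in net])
print(f"peak reduced from {max(load)} kW to {max(net):.0f} kW")
```

A production dispatcher would use forecasts and prices rather than a fixed cap, but the same constraints (power rating, capacity, efficiency) shape the result.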

Design choices benefit from side‑by‑side comparisons:

– Centralized plants vs. distributed rooftops: central sites simplify maintenance; distributed fleets reduce transmission losses and add resilience.

– Curtailment management: flexible loads (electrolyzers, industrial heat) soak up surplus generation instead of wasting it.

– Retrofitting vs. new builds: upgrades unlock quick wins; new designs optimize from the ground up.

Evidence‑based planning relies on transparent data: capacity factors across seasons, round‑trip efficiencies for storage, outage statistics, and lifecycle emissions including supply chains. Electrification of transport and industry will raise demand, making efficiency just as important as generation. The transition succeeds not through a single breakthrough but through compatibility: interoperable standards, modular upgrades, and grid services that reward flexibility. As in a good orchestra, the music comes from many instruments playing in time.
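Two of these metrics reduce to simple ratios, shown here for a hypothetical 100 MW wind farm and a hypothetical battery. All energy figures are invented for illustration.

```python
def capacity_factor(energy_mwh: float, rated_mw: float, hours: float) -> float:
    """Energy actually produced divided by the maximum possible at rated output."""
    return energy_mwh / (rated_mw * hours)

def round_trip_efficiency(discharged_mwh: float, charged_mwh: float) -> float:
    """Energy recovered from storage divided by energy put in."""
    return discharged_mwh / charged_mwh

RATED_MW = 100  # hypothetical wind farm rating

# Hypothetical seasonal output: season -> (energy in MWh, hours in the season).
seasons = {
    "winter": (90_720, 2_160),
    "spring": (77_280, 2_208),
    "summer": (55_200, 2_208),
    "autumn": (82_992, 2_184),
}

for name, (energy, hours) in seasons.items():
    print(f"{name}: {capacity_factor(energy, RATED_MW, hours):.2f}")

annual_energy = sum(energy for energy, _ in seasons.values())
annual_hours = sum(hours for _, hours in seasons.values())
annual_cf = capacity_factor(annual_energy, RATED_MW, annual_hours)
print(f"annual: {annual_cf:.2f}")

# Hypothetical battery cycle: 100 MWh charged, 85 MWh delivered back.
print(f"round-trip efficiency: {round_trip_efficiency(85, 100):.0%}")
```

Reporting the seasonal values alongside the annual figure shows the swing that a single yearly average would smooth over.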

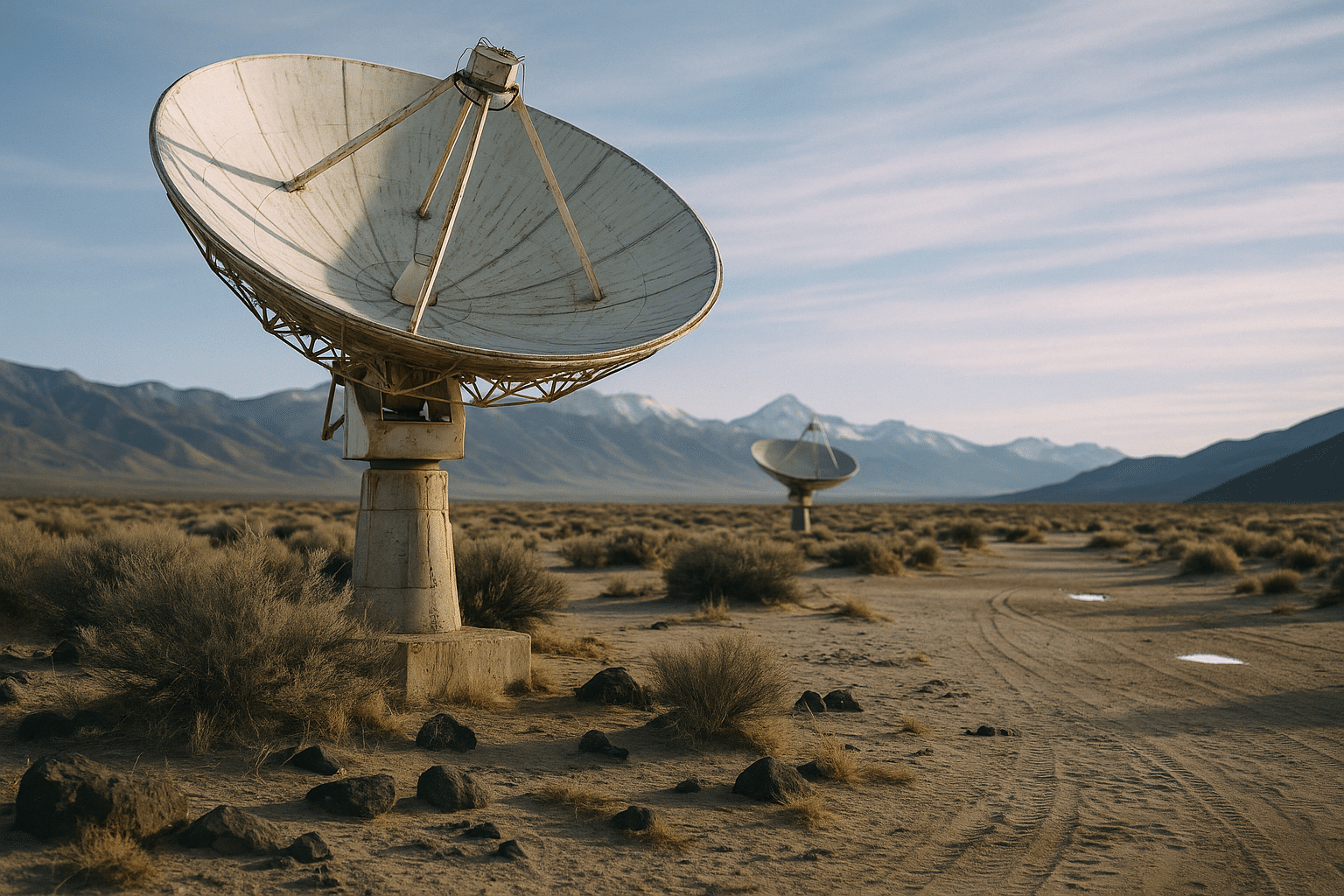

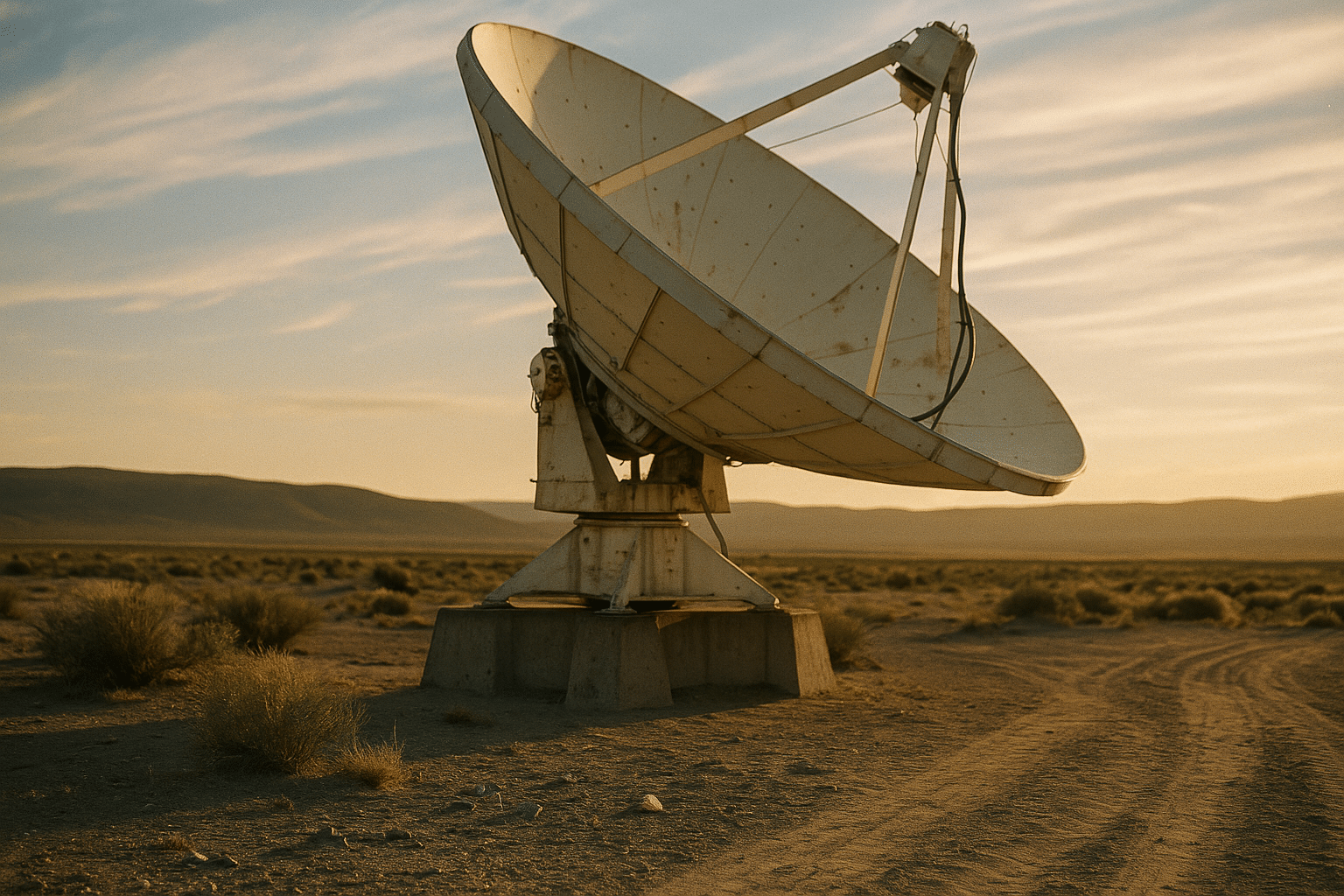

Space and Earth Observation: Sharper Eyes, Faster Revisit, Deeper Context

New generations of satellites and airborne sensors are turning the planet into a high‑resolution dataset. Compact platforms equipped with multispectral, thermal, and radar instruments offer revisit times measured in hours rather than weeks, enabling timely insights on crops, wildfires, shipping lanes, and urban growth. Synthetic‑aperture radar peers through clouds and darkness, complementing optical imagers that excel in clear daylight. When fused, these streams produce robust signals that stand up to weather, seasonal shifts, and sensor noise.

Downlink and processing pipelines have matured. Onboard preprocessing compresses raw captures into features, slashing bandwidth needs, while ground stations use standardized formats to stitch scenes quickly. Constellations in staggered orbits raise the chance of a cloud‑free capture, and tasking algorithms prioritize scenes with the highest expected value, such as areas of rapid change or known hazards. Compared with legacy systems, today’s architectures trade single‑platform complexity for distributed redundancy and smarter scheduling.
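Expected‑value tasking can be sketched as a greedy selection under a time budget. The scoring rule below (change probability times an impact weight, ranked per second of capture time) and all scene values are assumptions for illustration; operational schedulers also account for orbit geometry, cloud forecasts, and downlink windows.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Scene:
    name: str
    change_probability: float  # estimated chance that something changed
    impact: float              # relative importance if it did
    capture_seconds: float     # sensor time needed to image the scene

    @property
    def expected_value(self) -> float:
        return self.change_probability * self.impact

def plan_tasking(scenes: List[Scene], budget_seconds: float) -> List[Scene]:
    """Greedily pick scenes by expected value per second until time runs out."""
    ranked = sorted(scenes,
                    key=lambda s: s.expected_value / s.capture_seconds,
                    reverse=True)
    plan, used = [], 0.0
    for scene in ranked:
        if used + scene.capture_seconds <= budget_seconds:
            plan.append(scene)
            used += scene.capture_seconds
    return plan

# Hypothetical candidate scenes for one orbital pass.
candidates = [
    Scene("stable_desert", 0.05, 2, 20),
    Scene("flood_plain", 0.60, 8, 40),
    Scene("wildfire_front", 0.90, 10, 30),
    Scene("port", 0.30, 5, 30),
]

plan = plan_tasking(candidates, budget_seconds=80)
print([scene.name for scene in plan])  # -> ['wildfire_front', 'flood_plain']
```

Greedy ranking is not guaranteed to be optimal for this kind of budgeted selection, but it is easy to audit, which matters when tasking decisions need to be explained later.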

Applications are concrete:

– Agriculture: vegetation indices and thermal gradients guide irrigation and harvest timing.

– Disaster response: rapid radar revisits map flood extents and landslides through cloud cover.

– Infrastructure: subsidence and deformation tracking protect pipelines, railways, and dams.
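The vegetation index most often used for the agriculture case is NDVI, defined as (NIR - Red) / (NIR + Red). The sketch below applies it to a tiny grid of reflectance values; the numbers are invented, and returning 0.0 for an empty pixel is a convention chosen here rather than a standard.

```python
from typing import List

def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index for one pixel, in [-1, 1]."""
    total = nir + red
    if total == 0:
        return 0.0  # assumption: treat a pixel with no signal as neutral
    return (nir - red) / total

def ndvi_grid(nir_band: List[List[float]],
              red_band: List[List[float]]) -> List[List[float]]:
    """Apply NDVI pixel by pixel to two equally sized reflectance grids."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]

# Hypothetical 2x2 scene: healthy crop, bare soil, open water, empty pixel.
nir_band = [[0.50, 0.30], [0.02, 0.00]]
red_band = [[0.08, 0.25], [0.05, 0.00]]

for row in ndvi_grid(nir_band, red_band):
    print([round(value, 2) for value in row])
```

Dense vegetation reflects strongly in the near infrared and absorbs red light, so it scores high; bare soil sits near zero and water goes negative.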

Quality control remains essential. Calibrated ground truth, uncertainty maps, and versioned processing chains prevent false confidence when lives and costs are on the line. Ethical considerations also matter: privacy‑preserving aggregation, responsible sharing during crises, and careful handling of dual‑use capabilities. As instruments sharpen and revisit rates climb, the frontier shifts from collecting pixels to answering grounded questions—what changed, by how much, and what action should follow—turning imagery into decisions that are timely, traceable, and useful.

Conclusion

Across domains, recent advances are less about spectacle and more about usability: faster decisions at the edge, quantum‑enabled measurements, programmable biology, cleaner power, and actionable views of Earth. Readers who plan, build, or regulate systems can use the comparisons above to pick approaches that fit budgets, timelines, and risk tolerance. Progress compounds when teams measure carefully, design for constraints, and share standards—practical habits that turn discovery into durable value.