Key Technology Trends Shaping the Future

Outline:

– Artificial Intelligence and Automation: From Assistance to Co‑Creation

– Cloud to Edge Computing: Placing Workloads Where They Perform

– Connected Things and Digital Twins: Turning Physical Signals into Decisions

– Security and Privacy: Building Trust into Every Layer

– Strategy and Skills for the Next Decade: A Pragmatic Roadmap and Conclusion

Introduction:

Technology is both a toolkit and a compass. It reshapes the economy, work, and daily life not in sweeping movie-style leaps, but through steady, measurable gains that add up. The themes shaping the next decade—intelligent software, right‑sized compute, connected sensing, resilient security, and sustainable operations—are converging into systems that are more adaptive and accountable. For decision-makers, the relevance is immediate: these trends direct budgets, redefine roles, and decide who ships value faster at lower risk. For practitioners, they surface new design patterns, data habits, and ethical guardrails. And for curious readers, they offer a grounded view of what matters now, minus the noise.

Artificial Intelligence and Automation: From Assistance to Co‑Creation

Artificial intelligence is moving from narrow task aid to broad collaboration, not as a silver bullet but as a dependable multiplier when paired with clear objectives and quality data. In practical trials across content drafting, analytics, and software delivery, teams often report time savings on the order of 20–40% on well-scoped tasks, with the largest gains in summarization, pattern detection, and test generation; results vary with the task and the baseline. Yet the story is not just speed. Accuracy can improve when models act as a second set of eyes, catching outliers or offering alternative phrasing that human reviewers refine. The limits are equally real: models still invent facts under uncertainty, amplify biased inputs, and degrade when prompts or training data are ambiguous.

Value emerges fastest when organizations treat AI like a capable colleague who never sleeps but always needs unambiguous instructions. That means defining what “good” looks like, codifying validation steps, and designing feedback loops so the system learns from outcomes rather than one-off prompts. It also requires better data hygiene. Dirty, duplicated, or drifting data will flood automation with noise, eroding trust. Lightweight governance—think versioned prompts, labeled datasets, and purpose-built evaluation sets—pays off quickly.

Practical ways to deploy AI with discipline include:

– Start with narrow, measurable use cases such as summarizing support tickets, tagging documents, or generating unit tests.

– Pair every automated step with a verifiable check, such as schema validation, statistical thresholds, or human approval for high-impact outputs.

– Track impact with simple metrics: cycle time reduction, rework rate, and error severity distribution before and after augmentation.

– Treat prompts and guardrails as code, review them, and roll back when performance drifts.
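To make the "verifiable check" idea concrete, here is a minimal sketch of a gate that sits between a model and whatever consumes its output. The field names, the severity labels, and the 500-character limit are assumptions chosen for illustration, not a standard; a real deployment would likely use a schema library and its own thresholds.

```python
import json

# Hypothetical contract for a model-generated ticket summary.
REQUIRED_FIELDS = {"ticket_id": str, "summary": str, "severity": str}
ALLOWED_SEVERITIES = {"low", "medium", "high"}
MAX_SUMMARY_CHARS = 500  # assumed threshold; tune per use case


def check_summary(raw_output: str) -> tuple[str, list[str]]:
    """Return (decision, problems); decision is 'accept', 'review', or 'reject'."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return "reject", [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return "reject", ["top-level value is not an object"]

    # Schema validation: every required field is present with the right type.
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            problems.append(f"missing or mistyped field: {field}")
    if problems:
        return "reject", problems

    if data["severity"] not in ALLOWED_SEVERITIES:
        return "reject", [f"unknown severity: {data['severity']}"]
    if len(data["summary"]) > MAX_SUMMARY_CHARS:
        return "reject", ["summary exceeds length threshold"]

    # High-impact outputs go to a person instead of being applied automatically.
    if data["severity"] == "high":
        return "review", []
    return "accept", []
```

Logging the share of outputs that land in each of the three decisions over time gives a simple rework-rate signal for the metrics mentioned above.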

Comparing approaches clarifies trade-offs. Larger models can capture nuance but tend to be costlier and slower to serve, while compact models are nimble and easier to deploy at the edge or inside a browser. Retrieval-augmented designs improve grounding by pulling from audited sources, but require careful indexing and access control. Fine-tuning can raise domain accuracy, though it adds lifecycle management overhead, since tuned models need re-evaluation as data shifts. The throughline is pragmatic: align model choice with latency, privacy, and cost targets; instrument results; and keep a human in the loop where consequences are significant.

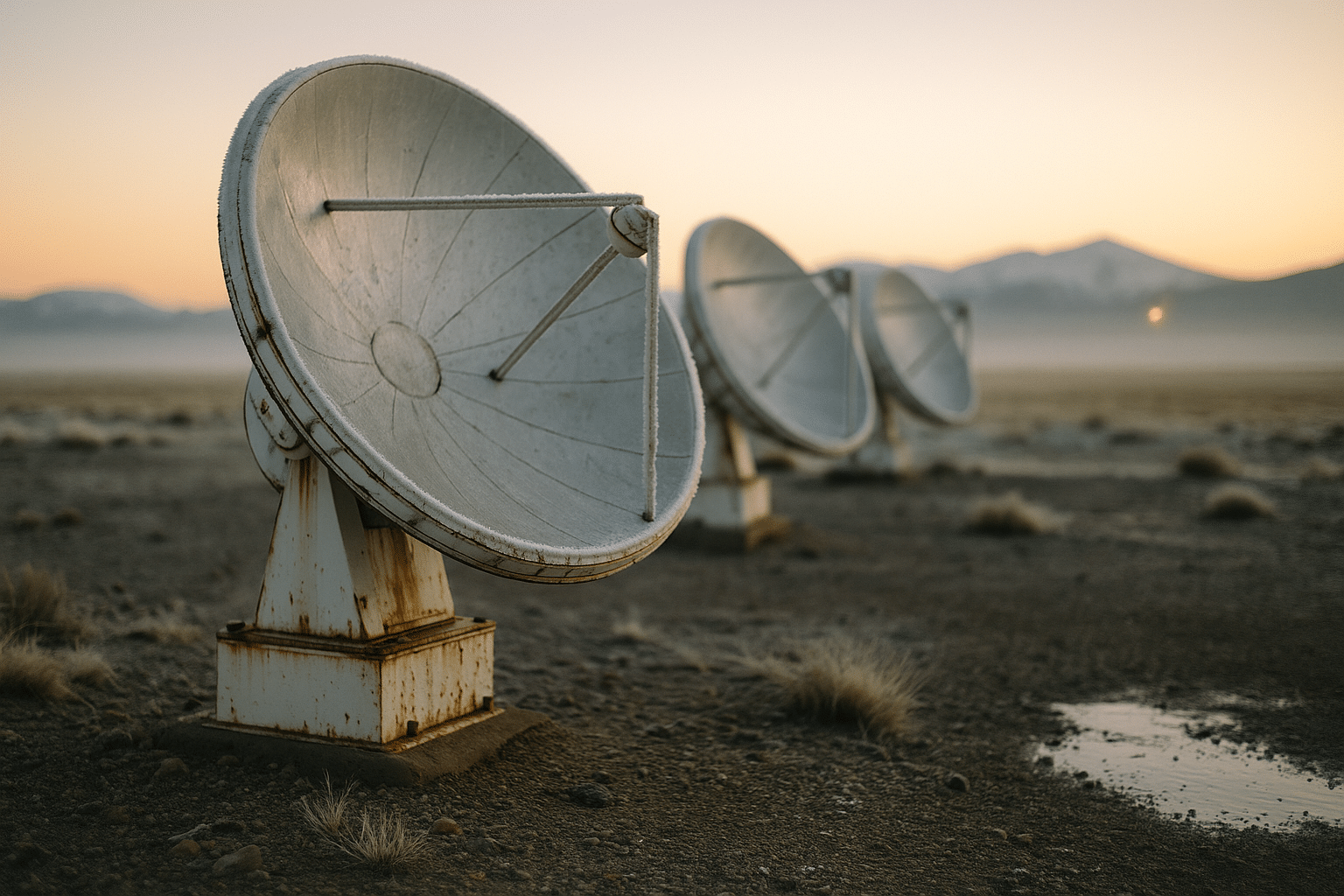

Cloud to Edge Computing: Placing Workloads Where They Perform

Compute is no longer a single place. It is a spectrum that stretches from hyperscale clusters to compact devices inches from the data source. Placing workloads along this spectrum is an architectural decision with concrete operational and financial effects. Centralized platforms shine for elastic analytics, archival storage, and large-scale training, where pooled capacity and managed services compress time to value. At the other end, near‑real‑time control, privacy-sensitive inference, and bandwidth-limited scenarios benefit from processing near the source, which can cut round‑trip latency from hundreds of milliseconds to single-digit milliseconds and reduce egress costs.

Choosing the right mix starts with workload mapping rather than tool selection. A simple playbook helps:

– When latency, intermittent connectivity, or data sovereignty dominate, prioritize edge nodes with local caching and periodic synchronization.

– When demand is spiky and parallelizable, lean on elastic platforms and autoscaling policies with clear cost ceilings.

– For steady, predictable throughput, evaluate reserved capacity or on‑prem clusters where utilization can be kept high.
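The playbook above can be written down as a small decision function, which makes the mapping reviewable and testable. This is a sketch only: the `Workload` attributes and the tier labels are hypothetical names, and a real assessment would weigh costs and constraints rather than apply boolean flags in a fixed order.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Illustrative workload profile; all attribute names are assumptions."""
    name: str
    latency_sensitive: bool = False
    intermittent_connectivity: bool = False
    data_sovereignty: bool = False
    spiky_demand: bool = False
    parallelizable: bool = False
    steady_throughput: bool = False


def suggest_placement(w: Workload) -> str:
    """Apply the three playbook rules in order and return a tier label."""
    if w.latency_sensitive or w.intermittent_connectivity or w.data_sovereignty:
        return "edge"  # local caching plus periodic synchronization
    if w.spiky_demand and w.parallelizable:
        return "elastic-cloud"  # autoscaling with a cost ceiling
    if w.steady_throughput:
        return "reserved-or-on-prem"  # keep utilization high
    return "needs-review"  # no rule matched; decide case by case
```

For example, `suggest_placement(Workload("line-controller", latency_sensitive=True))` returns `"edge"`, while a workload with no flags set falls through to `"needs-review"`.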

Data gravity matters. Moving terabytes daily just to run a model is rarely efficient; bringing the model to the data often is. Lightweight containerization and standardized interfaces make it feasible to deploy the same service across environments while keeping observability, secrets, and policy consistent. A well-instrumented pipeline should report latency percentiles, error budgets, and per-call cost in plain language that product leaders can act on.
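The three pipeline numbers named above are simple to compute once raw samples are available. The sketch below assumes latencies in milliseconds, a nearest-rank percentile definition, and an availability target expressed as a fraction (for example 0.999); other percentile definitions and budget policies are equally valid.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def error_budget_remaining(total_calls: int, failed_calls: int, slo: float) -> float:
    """Fraction of the allowed failures still unspent; negative when overspent."""
    allowed = total_calls * (1 - slo)
    if allowed == 0:
        return 0.0 if failed_calls == 0 else float("-inf")
    return 1 - failed_calls / allowed


def cost_per_call(total_cost: float, total_calls: int) -> float:
    """Average spend per request over the reporting window."""
    return total_cost / total_calls
```

With ten latency samples where one call took 240 ms and the rest took under 20 ms, the median stays low while the 95th percentile jumps to 240, which is why percentiles tell product leaders more than an average does.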

Resilience and security travel with the workload. Edge nodes need signed artifacts, measured boot, and tamper-evident logs to operate in less-controlled settings. Central platforms require network segmentation, rate limiting, and isolation so that a noisy neighbor or a local fault does not cascade into wider failures. In both cases, blue‑green or canary deployments reduce risk during upgrades, while immutable build pipelines ensure the running code matches what was reviewed. The result is less about one location winning and more about a portfolio approach where each tier earns its keep through measurable service quality and total cost of ownership.
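One small piece of "running code matches what was reviewed" is comparing an artifact's digest against the digest recorded at review time. The sketch below shows only that comparison, using SHA-256 from the standard library; it is not a substitute for real artifact signing, which relies on asymmetric keys and a trusted signer. The function names are illustrative.

```python
import hashlib
import hmac


def sha256_digest(artifact: bytes) -> str:
    """Hex-encoded SHA-256 digest of the artifact contents."""
    return hashlib.sha256(artifact).hexdigest()


def matches_reviewed_build(artifact: bytes, reviewed_digest: str) -> bool:
    """Compare the artifact's digest with the reviewed one in constant time."""
    return hmac.compare_digest(sha256_digest(artifact), reviewed_digest.lower())
```

An edge node could refuse to start a service when `matches_reviewed_build` returns `False`, and write that refusal to its tamper-evident log.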

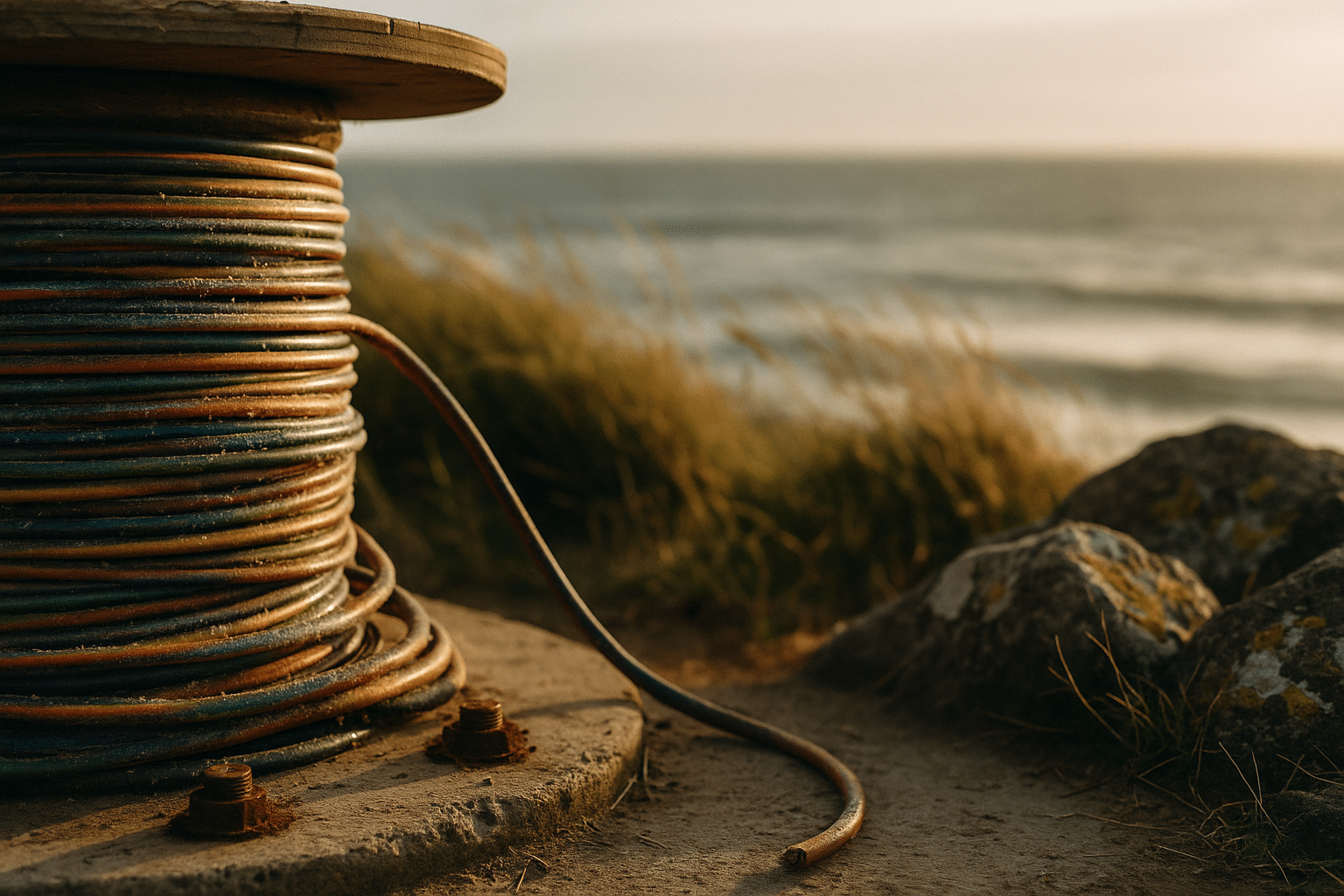

Connected Things and Digital Twins: Turning Physical Signals into Decisions

Sensors are quietly becoming the nervous system of the physical world. From factory lines to farms and city grids, low-power devices stream temperature, vibration, voltage, location, and countless other signals. A modest fleet of 10,000 sensors sampling once per second generates 864 million data points per day; unfiltered, that is overwhelming. The shift from raw feeds to event-driven, quality-tagged streams—where anomalies, thresholds, and context are computed near the source—transforms that firehose into information a team can trust.
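The arithmetic behind that figure, and one simple way to thin the stream near the source, can be sketched as follows. The deadband filter shown here (report a reading only when it moves more than a threshold away from the last reported value) is one common technique among several; the threshold is an assumption to be set per signal.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def daily_points(sensors: int, samples_per_second: float) -> int:
    """Total readings per day for a fleet sampling at a fixed rate."""
    return int(sensors * samples_per_second * SECONDS_PER_DAY)


def deadband_filter(readings: list[float], threshold: float) -> list[tuple[int, float]]:
    """Keep (index, value) pairs that differ from the last kept value by more than threshold."""
    kept = []
    last = None
    for index, value in enumerate(readings):
        if last is None or abs(value - last) > threshold:
            kept.append((index, value))
            last = value
    return kept


print(daily_points(10_000, 1))  # 864000000
```

Applied to `[20.0, 20.1, 20.2, 21.5, 21.6, 19.0]` with a threshold of `1.0`, the filter keeps three of the six readings: the first one and the two that moved by more than a degree.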

Digital twins—living models that mirror assets or processes—translate those signals into shared understanding. Instead of poring over disparate charts, a twin exposes state, constraints, and forecasts in one place. In maintenance, that might mean scoring bearing health from vibration signatures and scheduling service ahead of failure. In energy, it could be optimizing load balance by simulating how weather, demand, and storage interact hour by hour. In logistics, route planning improves when a twin weighs traffic, temperature control, and delivery windows in real time.

Design choices distinguish robust systems from brittle ones:

– Prefer schema evolution and versioned contracts over ad-hoc payloads to avoid breaking downstream consumers.

– Use tiered storage—hot for recent, warm for aggregated, cold for compliance—to balance speed and cost.

– Calibrate sampling rates to the physics of the asset; over-sampling wastes power and budget, under-sampling misses faults.

– Attach provenance and quality scores so analytics can exclude dubious readings without human triage.
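Several of these choices can live in the record itself. The sketch below assumes a hypothetical `Reading` contract that carries a schema version, a provenance field, and a quality score, plus an age-based tiering rule; the version set, the 0.8 quality cutoff, and the 7- and 90-day boundaries are illustrative values, not recommendations.

```python
from dataclasses import dataclass

SUPPORTED_SCHEMA_VERSIONS = {1, 2}  # assumed: versions this consumer understands


@dataclass(frozen=True)
class Reading:
    schema_version: int
    sensor_id: str
    value: float
    source: str     # provenance: which gateway or device produced the reading
    quality: float  # 0.0 (untrusted) to 1.0 (fully trusted)


def usable_readings(readings: list[Reading], min_quality: float = 0.8) -> list[Reading]:
    """Drop readings with an unknown contract version or a low quality score."""
    return [
        r for r in readings
        if r.schema_version in SUPPORTED_SCHEMA_VERSIONS and r.quality >= min_quality
    ]


def storage_tier(age_days: float) -> str:
    """Map record age to a tier; the cutoffs here are illustrative."""
    if age_days < 7:
        return "hot"
    if age_days < 90:
        return "warm"
    return "cold"
```

Because the filter runs on fields attached at the source, analytics can exclude dubious readings without anyone inspecting them by hand.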

Privacy and safety are first-class requirements. Location traces, usage patterns, and environmental readings can be sensitive when linked, even if individual fields seem harmless. Edge filtering, on-device anonymization, and clear retention policies reduce exposure. Reliability demands redundancy and graceful degradation; if a link drops, local control should continue and synchronize later. Finally, resist chasing dashboards for their own sake. The highest returns come when sensing closes a loop—detect, decide, act—and when success is measured in fewer outages, less waste, and faster cycle times rather than screenfuls of metrics.
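Graceful degradation on a dropped link is often implemented as store-and-forward: keep working locally, queue outbound messages, and drain the queue when the link returns. The class below is a minimal sketch under two assumptions: the `send` callable raises `ConnectionError` while the link is down, and dropping the oldest message is acceptable once the buffer is full.

```python
from collections import deque


class StoreAndForward:
    """Buffer outbound messages locally and deliver them in order when possible."""

    def __init__(self, send, max_buffered: int = 1000):
        self._send = send  # hypothetical uplink; raises ConnectionError when down
        self._buffer = deque(maxlen=max_buffered)  # oldest entry is dropped when full

    def publish(self, message) -> None:
        """Queue a message, then try to deliver everything that is pending."""
        self._buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Send pending messages oldest first; stop at the first failure."""
        sent = 0
        while self._buffer:
            try:
                self._send(self._buffer[0])
            except ConnectionError:
                break
            self._buffer.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._buffer)
```

A message leaves the buffer only after `send` returns without error, so a failure mid-flush never loses data; the trade-off is that a receiver may occasionally see a duplicate and should tolerate it.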

Security and Privacy: Building Trust into Every Layer

As systems interconnect, the attack surface expands in ways that are easy to underestimate. The prevailing mindset is to assume breach: design as if an adversary already has a foothold, and limit what they can do. That starts with identity. Strong, phishing-resistant authentication that binds access to a device and a user slashes account takeover risk. Least-privilege authorization trims blast radius by ensuring tokens, roles, and service accounts can touch only what they must. Network controls then act as tripwires and circuit breakers rather than the primary defense.

Resilience is as much about recovery as prevention. Immutable backups, restored in regular drills against realistic recovery time and recovery point objectives, are the difference between a tense day and a lost week when ransomware strikes. Observability that stitches together logs, traces, and metrics shortens detection and response. Clear runbooks transform 2 a.m. confusion into coordinated action, and segmentation prevents an incident in one system from becoming a business-wide outage.

Practical steps that deliver outsized gains include:

– Enforce multi-factor sign-in with device checks for all administrative and remote access.

– Rotate secrets automatically; prefer short‑lived credentials over long‑lived keys.

– Patch on a fixed cadence, with expedited lanes for actively exploited flaws.

– Maintain a software bill of materials and scan dependencies to catch known issues early.

– Implement rate limits and anomaly alerts on authentication, data export, and payment endpoints.
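As one illustration of the last item, a token bucket is a common way to rate-limit a sensitive endpoint: each caller gets a bucket that refills at a steady rate and allows short bursts up to its capacity. The sketch below is an in-memory, single-process version with an injectable clock for testing; the class name and parameters are illustrative, and a production limiter would typically keep state in a shared store.

```python
import time


class TokenBucket:
    """Allow up to `capacity` requests in a burst, refilled at `rate_per_second`."""

    def __init__(self, rate_per_second: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_second
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)  # start with a full bucket
        self._last = clock()

    def allow(self) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

Counting how often `allow()` returns `False` per account or per source address is a cheap starting signal for the anomaly alerts mentioned above.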

Privacy is not just a checkbox. Collect only what you can protect, explain why you need it, and give users meaningful control. Techniques such as minimization, pseudonymization, and aggregation reduce risk without erasing insight. Data retention should be intentional: keep information long enough to serve users and meet obligations, then delete it. Finally, align incentives so teams are rewarded for reducing risk, not for adding features without safeguards. Trust is a competitive asset; it is earned through steady, verifiable practices more than bold claims.
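Two of the techniques named above can be sketched in a few lines. Pseudonymization is shown here as a keyed hash (HMAC-SHA256), so identifiers stay consistent for joins but cannot be recomputed without the key; aggregation is shown with a minimum group size so that small, potentially identifying groups are suppressed. The function names and the threshold of 5 are assumptions, and neither technique alone amounts to anonymization.

```python
import hashlib
import hmac
from collections import Counter


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash; the same input and key give the same token."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def aggregate_counts(values: list[str], min_group_size: int = 5) -> dict[str, int]:
    """Count occurrences per value, dropping groups smaller than min_group_size."""
    counts = Counter(values)
    return {key: n for key, n in counts.items() if n >= min_group_size}
```

Storing the key separately from the data, and rotating it when retention periods end, keeps the pseudonyms from becoming permanent identifiers in their own right.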

Strategy and Skills for the Next Decade: A Pragmatic Roadmap and Conclusion

Technology strategies succeed when they are tied to outcomes that matter and expressed in language everyone can use. Instead of a shopping list of tools, define a small set of business capabilities—faster fulfillment, safer operations, smarter personalization—and map enabling components to each. A portfolio approach prevents over-investment in any single idea and encourages small, reversible bets. Think of it as gardening: plant many seeds, measure growth, prune what struggles, and double down on what thrives.

A workable roadmap favors quarters over years. In the first 90 days, focus on:

– Establishing shared metrics, such as lead time for changes, defect escape rate, and cost per transaction.

– Standing up a thin platform with secure identity, logging, and automated delivery so teams can ship safely.

– Piloting one AI‑assisted workflow, one edge deployment, and one data quality improvement to prove cross-cutting value.
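Shared metrics are easier to agree on when their definitions are written as code. The sketch below gives one possible definition of each metric from the first item: lead time as the median hours from commit to production deploy, defect escape rate as the share of defects found after release, and cost per transaction as a plain average. Teams may reasonably define these differently; what matters is that everyone uses the same definition.

```python
from datetime import datetime
from statistics import median


def lead_time_hours(changes: list[tuple[datetime, datetime]]) -> float:
    """Median hours between (committed, deployed) timestamps."""
    return median(
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in changes
    )


def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that were first seen in production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


def cost_per_transaction(total_cost: float, transactions: int) -> float:
    """Average cost of serving one transaction over the reporting window."""
    return total_cost / transactions
```

Using the median for lead time keeps one unusually slow change from dominating the number a team reviews each quarter.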

From there, institutionalize learning. Create lightweight guilds where practitioners share patterns, sample prompts, data schemas, and incident retrospectives. Encourage a skill lattice rather than narrow ladders—engineers who can reason about data, analysts who can script, designers who understand privacy, operators who read model telemetry. Offer hands-on labs over slide decks, and measure skill adoption through real deliverables, not course completions.

Responsible technology is non-negotiable. Build ethics reviews into product checkpoints rather than treating them as a final gate. Ask who benefits, who is burdened, and how mistakes will be caught early. Simulate failure modes such as model drift, sensor spoofing, and credential leaks before they happen. Sustainability belongs here too: optimize energy use, extend hardware life cycles, and prefer architectures that do more with less. Independent analyses put data centers at roughly 1 to 2 percent of global electricity use; efficiency work is both prudent and planet-friendly.

Conclusion for readers: If you lead a team, align investments to a few capabilities and track them relentlessly. If you build systems, favor simple, observable designs you can explain on a whiteboard. If you are exploring careers, cultivate data literacy, systems thinking, and clear writing alongside your technical chops. The trends in this guide reward steady execution over grand gestures. Start small, instrument everything, and let evidence steer your next step. The future is not a cliff to leap from; it is a path you pave, one practical choice at a time.