Exploring Technology: Integration of Technology in Educational Processes

Outline and Why Technology Integration Matters Now

– Section 1 maps the journey and explains why integration, not mere adoption, is the goal.

– Section 2 focuses on pedagogy-first design, outlining routines that work across classroom and online spaces.

– Section 3 examines assessment, feedback loops, and responsible data use.

– Section 4 tackles equity, infrastructure, accessibility, and sustainable implementation.

– Section 5 closes with a practical roadmap for educators and leaders to move from pilot to scale.

Technology has become a structural layer of schooling rather than a novelty add‑on. When integrated thoughtfully, it supports clearer learning goals, diversified practice, and timely feedback. When bolted on, it can add noise, fragment attention, and burden teachers with extra clicks. The difference lies in integration: aligning tools with curriculum, assessment, and community context. This matters now because expectations on schools have shifted. Learners need to navigate information-rich environments, collaborate across distance and time zones, and demonstrate mastery through varied media. Sudden disruptions—from weather closures to health emergencies—also revealed the need for resilient learning ecosystems that can flex between classroom and home without sacrificing momentum.

Integration is not about amassing features; it is about solving authentic instructional problems. Ask: What misconception are students struggling with? How will they practice until fluent? What evidence will show progress? Only then should you decide whether a digital resource earns a place in the lesson. Consider a typical scenario: a teacher wants students to synthesize sources in a history unit. A low‑tech path might use printed excerpts and color‑coded annotations. A tech‑infused path might add asynchronous discussion with timestamped prompts and a collaborative brief. Both can work; the integrated plan chooses the combination that clarifies thinking, saves time, and preserves equity. Measured carefully, integrated approaches often show gains in engagement indicators (on‑task time, completion rates) and steadier retention across weeks, especially when practice is spaced and feedback loops are short. The sections that follow move from principles to routines you can use tomorrow.

Pedagogy‑First Design: Methods that Travel Well from Classroom to Cloud

Start with learning science that predates any device. Retrieval practice strengthens memory through low‑stakes recall; spacing distributes review across days to prevent cramming; dual coding pairs words with visuals to deepen understanding; interleaving mixes problem types to improve transfer. Technology can make these patterns easier to orchestrate at scale, but the pedagogy comes first. A practical weekly arc might look like this: launch with a brief diagnostic, teach in short segments, embed two or three checks for understanding, assign targeted practice with increasing independence, and close with a reflection that surfaces what to revisit next week.

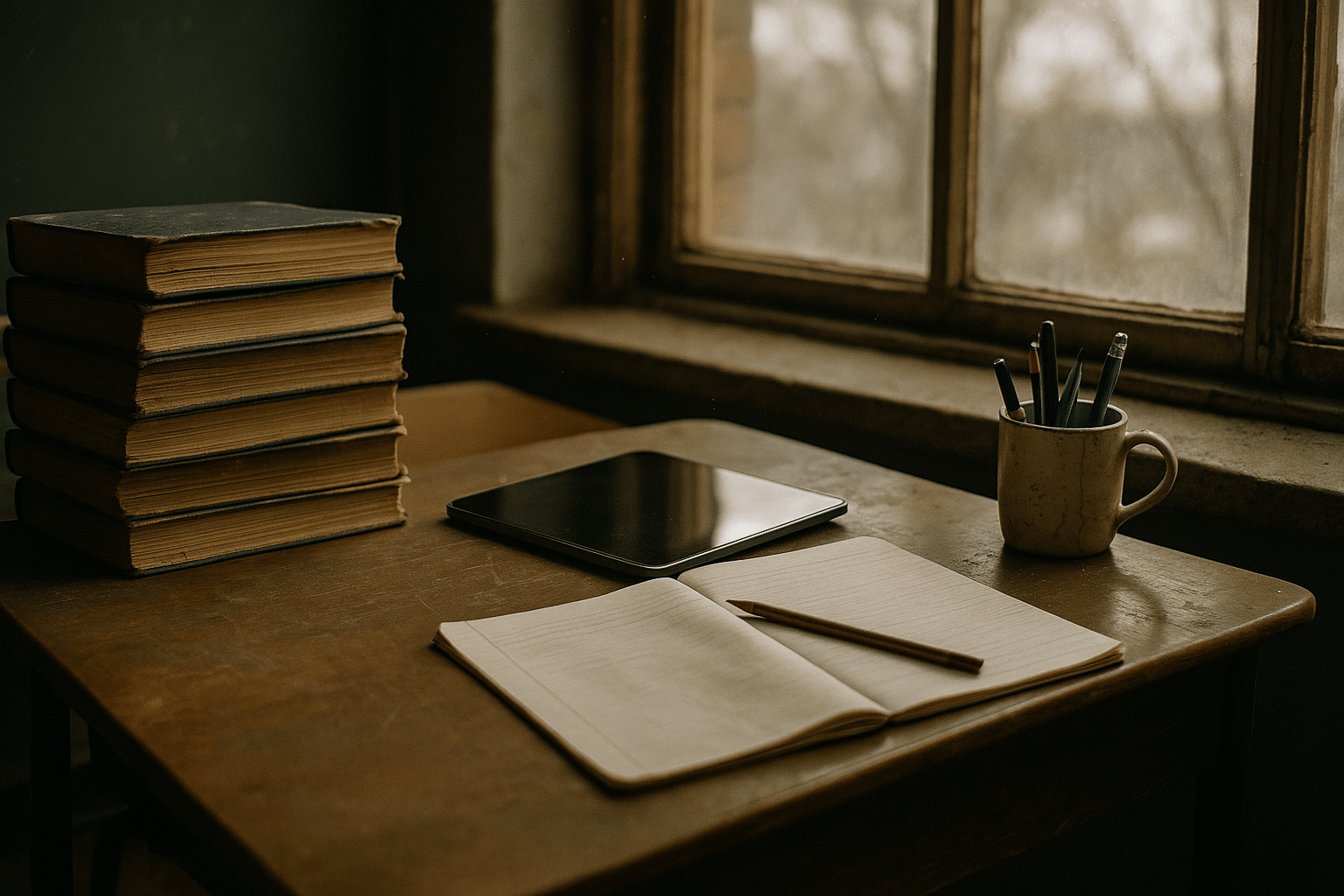

Comparisons help clarify choices. Synchronous sessions can energize discussion and allow real‑time coaching, yet they demand attention to pacing and bandwidth. Asynchronous work supports flexible schedules, well‑crafted prompts, and thoughtful replies, but requires explicit scaffolds and clear rubrics to prevent drift. In‑person whiteboards invite rapid iteration and social cueing; digital whiteboards preserve work, permit replays, and make quiet voices more visible. Paper notebooks offer distraction‑free focus and embodied memory through handwriting; digital notebooks improve searchability, layering of media, and remote collaboration. A strong plan often blends these modes: introduce a concept with a concise mini‑lesson, shift to small‑group problem solving (in room or online), intersperse 2–3 minute recall prompts, and close with a student‑generated summary.

To keep cognitive load manageable, chunk activities and avoid feature sprawl. Practical routines:

– Use micro‑explanations (5–7 minutes) followed by a 3‑question check.

– Offer worked examples, then faded examples, then independent problems.

– Provide choice among two or three practice pathways aligned to the same standard.

– Schedule spaced review two to four days after initial exposure.

These patterns translate easily across subjects. In literacy, students might annotate a passage, record a 60‑second paraphrase, and compare interpretations. In mathematics, they might analyze a worked solution, identify the step that introduces an error, and generate an alternative method. In science, they could predict an outcome, run a simple simulation or hands‑on test, and reconcile results in a short claim‑evidence‑reasoning write‑up. Decades of research have associated such structures with higher retention and transfer; digital layers simply help automate distribution, capture artifacts, and maintain momentum when learners are not co‑located.
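The spaced-review routine listed above (revisit material two to four days after initial exposure) can be turned into a small scheduling helper. The sketch below is only an illustration: the function name `review_window` and the choice to skip weekends are assumptions, not something the routines prescribe.

```python
from datetime import date, timedelta
from typing import List


def review_window(first_exposure: date, min_gap: int = 2, max_gap: int = 4) -> List[date]:
    """Return the weekdays that fall min_gap to max_gap days after first exposure.

    Skipping Saturday and Sunday is an assumption about the school calendar.
    """
    candidates = [first_exposure + timedelta(days=gap) for gap in range(min_gap, max_gap + 1)]
    # weekday() is 0 for Monday through 6 for Sunday; keep Monday to Friday only.
    return [day for day in candidates if day.weekday() < 5]


if __name__ == "__main__":
    # A concept introduced on Monday 4 March 2024 can be reviewed Wednesday to Friday.
    for day in review_window(date(2024, 3, 4)):
        print(day.isoformat())
```

A concept introduced late in the week gets a narrower window (a Thursday lesson leaves only the following Monday), which is a useful prompt to plan the review slot when the lesson is scheduled rather than afterward.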

Assessment, Feedback, and Analytics without Overreach

Assessment should inform teaching, not overshadow it. Formative checks—brief, frequent, and targeted—are particularly powerful. Think in terms of minutes, not hours: a two‑minute exit prompt, a five‑item retrieval quiz, a short reflection with one sentence starter. These micro‑assessments surface misconceptions before they calcify and help teachers adjust grouping, pacing, or examples in the next lesson. Summative assessments still matter, but technology allows a richer mix of evidence: labs documented through photos and observations, portfolios that show drafts over time, oral defenses recorded for moderation, and performance tasks anchored in community issues.

Feedback operates on a spectrum: immediate correctness checks reduce guesswork, while process‑focused comments build strategies for the next attempt. A simple cadence works across formats:

– Acknowledge what is correct or on the right track.

– Point to a specific next step, not a vague directive.

– Invite a brief revision window to close the loop.

Short audio or video notes can humanize feedback; structured comment banks can speed up marking and keep comments consistent; exemplars with annotations make expectations visible. The key is to maintain a fast feedback cycle, ideally within 24–48 hours for most assignments, so students can act while the task is still fresh. In practice, teachers often report that a steady stream of small corrections yields more reliable gains than a few large grading events.

Analytics can illuminate patterns, but they warrant a careful hand. Useful signals include longitudinal growth on key standards, item‑level misconceptions that reappear across units, and participation trends that correlate with timely submissions. Less useful are vanity metrics that reward time‑on‑screen without learning. Responsible use involves transparent criteria, student access to their own progress views, and privacy protections that limit data collection to what instruction actually requires. When schools publish clear data practices, families build trust, and teachers feel safer experimenting with new formats. A balanced approach looks like this: set two or three measurable targets per unit, collect just enough evidence to judge progress, and meet briefly in teams to interpret results and tune routines. Over time, you can expect steadier mastery curves, narrower variability between groups, and fewer surprises at major checkpoints.
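One of the signals named above, item-level misconceptions that reappear across units, can be computed from very little data. The following is a minimal sketch under stated assumptions: each quiz response is stored as a `(unit, concept_tag, is_correct)` tuple, and the 40% miss-rate threshold and two-unit minimum are placeholder values, not recommendations from any standard.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple

Response = Tuple[str, str, bool]  # (unit, concept_tag, is_correct); a hypothetical record shape


def recurring_misconceptions(
    responses: Iterable[Response],
    miss_threshold: float = 0.4,  # assumed cut-off for "many students missed this"
    min_units: int = 2,           # assumed meaning of "reappears across units"
) -> List[str]:
    """Return concept tags whose miss rate meets the threshold in at least min_units units."""
    counts = defaultdict(lambda: [0, 0])  # (unit, tag) -> [misses, attempts]
    for unit, tag, is_correct in responses:
        entry = counts[(unit, tag)]
        entry[1] += 1
        if not is_correct:
            entry[0] += 1

    flagged_units = defaultdict(set)  # tag -> units where the miss rate was high
    for (unit, tag), (misses, attempts) in counts.items():
        if misses / attempts >= miss_threshold:
            flagged_units[tag].add(unit)

    return sorted(tag for tag, units in flagged_units.items() if len(units) >= min_units)
```

Note that the function needs no student identifiers at all, which fits the principle of collecting only as much data as the instructional question requires.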

Equity, Access, and Sustainable Infrastructure

Equity is both access and design. Devices and connectivity matter, but so do materials that honor diverse learners and constraints. Plan for bandwidth tiers: at high bandwidth, use rich media and live collaboration; at moderate bandwidth, emphasize compressed videos, images, and asynchronous discussion; at low bandwidth, lean on lightweight pages, downloadable packets, and SMS‑style prompts. Where connectivity is intermittent, provide offline‑capable content and clear sync routines. Printed companions remain valuable, especially for extended reading, math practice, and lab instructions that do not require screens to be effective.
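The bandwidth tiers described above amount to a simple lookup from connection quality to delivery format. The sketch below shows one way to express that; the tier names and formats come from the paragraph, while the numeric cut-offs (`HIGH_MBPS`, `MODERATE_MBPS`) and the function names are hypothetical and should be tuned to local conditions.

```python
from typing import List, Optional

# Hypothetical cut-offs in megabits per second. The text names the tiers
# but gives no numbers, so these are placeholders.
HIGH_MBPS = 10.0
MODERATE_MBPS = 2.0

FORMATS = {
    "high": ["rich media", "live collaboration"],
    "moderate": ["compressed video", "images", "asynchronous discussion"],
    "low": ["lightweight pages", "downloadable packets", "SMS-style prompts"],
    "offline": ["offline-capable content", "printed companions"],
}


def bandwidth_tier(measured_mbps: Optional[float]) -> str:
    """Classify a connection; None or a non-positive value means no usable connection."""
    if measured_mbps is None or measured_mbps <= 0:
        return "offline"
    if measured_mbps >= HIGH_MBPS:
        return "high"
    if measured_mbps >= MODERATE_MBPS:
        return "moderate"
    return "low"


def formats_for(measured_mbps: Optional[float]) -> List[str]:
    """Return the delivery formats suggested for the measured connection."""
    return FORMATS[bandwidth_tier(measured_mbps)]
```

Keeping the mapping in one table makes it easy to check that every essential activity has an entry in the low and offline tiers, not only the high one.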

Accessibility is a baseline, not an afterthought. Use clear headings, plain language, descriptive alt text for images, and high‑contrast visuals. Offer captions and transcripts for media; ensure that color is not the only cue that conveys meaning; provide adjustable playback speed and text size. Multiple means of engagement, representation, and expression give students options without lowering expectations. Translation supports and glossaries help multilingual learners participate fully. For students with limited quiet space at home, design tasks that can be chunked and paused, and avoid rigid attendance requirements tied only to live sessions.

Infrastructure choices should be sustainable. Consider device models (shared carts, one‑to‑one, or bring‑your‑own), charging logistics, protective cases, and repair cycles. Inventory and content management reduce setup friction and lost time. Security policies should be age‑appropriate and transparent, with clear consequences and restorative practices. Professional learning needs time and sequencing: start with two or three core workflows (planning, feedback, and small‑group differentiation), then expand. Budgeting benefits from total‑cost thinking: hardware, maintenance, connectivity, training, accessibility tooling, and replacement reserves. Implementation checklists can help:

– Map core courses to specific digital and analog routines.

– Identify offline alternatives for each essential activity.

– Schedule family orientation that explains expectations and support options.

– Track a small set of equity indicators (device reliability, home access, assignment return rates).

When equity, accessibility, and infrastructure align, technology becomes a bridge rather than a barrier.

Conclusion and Action Steps for Educators and Leaders

Integration is a team sport. Whether you teach a single class or guide a district, the moves are similar: define the learning problem, pick a lean set of tools that specifically address it, and measure what changes for students and teachers. The payoff is not in novelty, but in smoother routines, clearer evidence, and more time for feedback and relationships. A cautious optimism is healthy: pilots before purchase, iteration before scale, and reflection before the next initiative. The following roadmap turns principles into action.

Phase 1 — Discover and define:

– Run a short needs scan with teachers and students; surface the top three friction points.

– Select two priority standards per course where improved feedback or practice would matter most.

– Map analog equivalents so every digital activity has a workable backup.

Phase 2 — Pilot and measure:

– Launch a 4–6 week pilot in a few classes; keep scope narrow and goals explicit.

– Track a small metric set: completion rates, on‑task indicators, quick checks of understanding, and student sentiment.

– Hold weekly debriefs to adjust prompts, timing, and supports; document what to keep and what to drop.
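The small metric set in Phase 2 can be summarized each week with a few lines of code, which keeps the weekly debrief focused on interpretation rather than tallying. This is a sketch only: the `PilotWeek` record and its field names are hypothetical, scores are assumed to be fractions between 0 and 1, and sentiment is assumed to be a 1 to 5 rating. On-task indicators are left out because they depend on how a school chooses to observe them.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class PilotWeek:
    """One week of pilot data for one class (hypothetical record shape)."""
    assigned: int                      # assignments handed out
    completed: int                     # assignments returned
    quick_check_scores: List[float] = field(default_factory=list)  # fractions, 0 to 1
    sentiment_ratings: List[int] = field(default_factory=list)     # 1 (low) to 5 (high)


def _mean_or_none(values: List[float]) -> Optional[float]:
    """Average rounded to two decimals, or None when nothing was collected."""
    return round(mean(values), 2) if values else None


def summarize(week: PilotWeek) -> Dict[str, Optional[float]]:
    """Reduce a week of records to the figures discussed in the debrief."""
    completion = round(week.completed / week.assigned, 2) if week.assigned else None
    return {
        "completion_rate": completion,
        "mean_quick_check": _mean_or_none(week.quick_check_scores),
        "mean_sentiment": _mean_or_none(week.sentiment_ratings),
    }
```

Returning `None` for missing data, instead of zero, keeps an uncollected metric from being read as a poor result.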

Phase 3 — Expand and support:

– Build a common playbook of routines (diagnostics, retrieval cycles, feedback cadence).

– Offer coaching cycles and model classrooms—physical or virtual—where peers can observe workflows.

– Establish an accessibility review before units go live and a privacy check for any data collection.

Phase 4 — Sustain and improve:

– Refresh training at semester points; retire rarely used features to reduce clutter.

– Share brief case notes highlighting challenges and solutions, not just success stories.

– Revisit equity indicators and adjust infrastructure, communications, or schedules accordingly.

For educators, the invitation is to start small yet purposeful: one routine tightened, one feedback loop shortened, one barrier removed. For leaders, the charge is to protect time for collaboration, resource what works, and stay transparent about data and decisions. Done this way, technology becomes the quiet architecture of learning—supportive, adaptable, and focused squarely on growth.